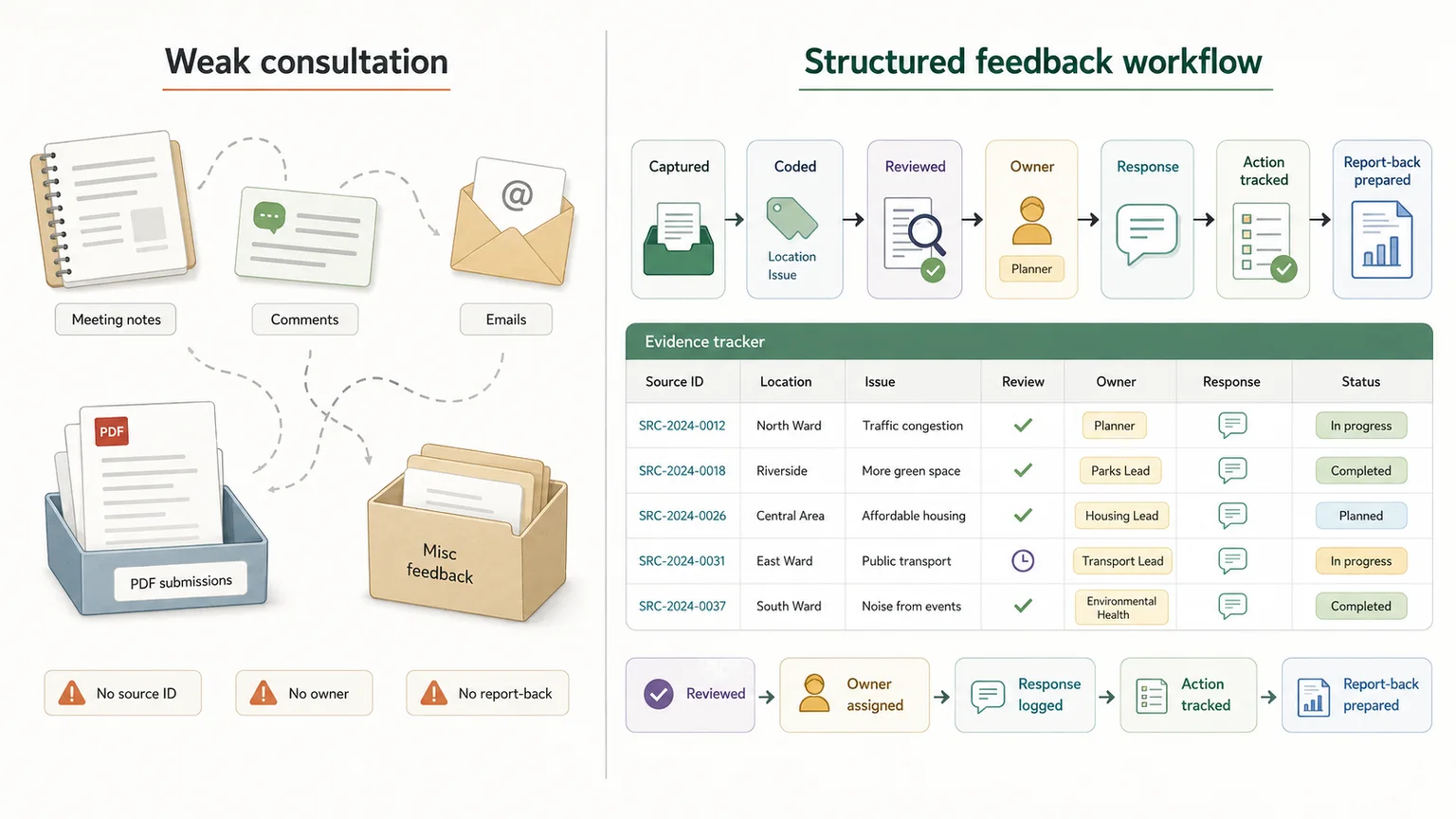

Many public participation processes collect comments, run meetings, publish draft documents and invite feedback. The problem is what happens after that.

Community members may raise the same issues again and again, but the feedback disappears into meeting notes, inboxes, PDF submissions, spreadsheets or one-off summaries. When there is no structured way to code, review, respond to and monitor those inputs, consultation starts to feel performative.

Community-Led Monitoring offers a stronger model. Affected communities help define what should be monitored, collect or validate evidence, review whether services are improving, and use that evidence to push for action.

But the value does not come from feedback alone.

Community voice does not help much if it disappears into inboxes, PDFs, meeting notes or dashboards no one acts on. The value comes when feedback becomes structured evidence that can be reviewed, responded to and used to monitor whether implementation is actually changing what communities experience.

This guide explains how Community-Led Monitoring and public feedback systems can support South African NPOs, public-sector teams, policy projects and donor-funded programmes, and why the real value depends on the evidence workflow behind the consultation.

Quick answer

Community-Led Monitoring is a recurring feedback and accountability process. It works best when public input is captured, coded, reviewed, responded to, and tracked through implementation.

Who this guide is for

South African NPOs, public-sector teams, policy projects and donor-funded programmes that need public feedback turned into usable evidence.

What is Community-Led Monitoring?

Community-Led Monitoring, often shortened to CLM, is a recurring process where affected communities help monitor services, identify problems, interpret evidence, engage duty-bearers and push for change.

The ITPC CLM Hub describes CLM as a process where communities take the lead in routinely monitoring an issue that matters to them, then work with policymakers to co-create solutions to the problems they identify. (CLM Hub) UNAIDS describes CLM as a cyclical process where people affected by health inequities systematically monitor services, analyse the data they collect and conduct evidence-driven advocacy to improve service delivery and support community-defined priorities. (UNAIDS)

CLM is not:

- a once-off survey

- a suggestion box

- a donor reporting exercise

- a dashboard with no response process

- a public comment process with no report-back

A practical CLM cycle looks more like this:

- define the issue

- collect evidence

- analyse patterns

- validate findings

- engage duty-bearers

- track action

- report back

- repeat

Strong CLM should be community-led, transparent and designed so affected communities have real influence over what is monitored, how findings are interpreted and how evidence is used. At the same time, the evidence still needs structure, review and traceability.

Why normal consultation often fails

Consultation does not usually fail because no one asked for input. It often fails because the input was not turned into a usable evidence system.

Common problems include:

- communities are asked for input but never hear back

- feedback is captured in inconsistent formats

- public submissions are summarised too broadly

- minority or place-specific concerns get lost

- repeated issues are not tracked over time

- public platforms become complaint dumps without review logic

- there is no clear link between feedback and implementation

- no one can show which comments influenced the final decision

- officials receive too many comments without a coding structure

This creates consultation fatigue. People attend meetings, complete surveys or submit comments, but they do not see what changed. Over time, they stop believing the process matters.

The failure is not always that consultation did not happen. The failure is that feedback was not captured, structured, reviewed, responded to and monitored over time.

A stronger workflow looks like this:

- capture

- structure

- code

- review

- respond

- act

- report back

- monitor again

That is where consultation starts becoming accountability.
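For teams that track this workflow in software, the sequence above can be treated as an ordered set of stages. The sketch below is a minimal illustration, not a prescribed design. The `Stage` names and the `next_stage` and `advance` functions are hypothetical, and the rule that no stage may be skipped is an assumption made for the example.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Workflow stages in the order listed above (names are illustrative)."""
    CAPTURE = 1
    STRUCTURE = 2
    CODE = 3
    REVIEW = 4
    RESPOND = 5
    ACT = 6
    REPORT_BACK = 7
    MONITOR_AGAIN = 8


def next_stage(current: Stage) -> Stage:
    """Return the stage after `current`; the last stage loops back to CAPTURE."""
    if current is Stage.MONITOR_AGAIN:
        return Stage.CAPTURE
    return Stage(current + 1)


def advance(current: Stage, target: Stage) -> Stage:
    """Move to `target` only if it is the immediate next stage (assumed rule)."""
    expected = next_stage(current)
    if target is not expected:
        raise ValueError(
            f"cannot move from {current.name} to {target.name}; "
            f"next stage is {expected.name}"
        )
    return target
```

A record that has been coded but not reviewed cannot be marked as responded to under this rule, which is one simple way to stop the review step from being skipped.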

CLM, public participation and the South African local government context

South Africa already has a strong legal basis for public participation, especially in local government.

The Municipal Systems Act requires municipalities to develop participatory governance and create conditions for local communities to participate in municipal affairs, including IDPs, performance management systems, monitoring and review of municipal performance, budgets and strategic service delivery decisions. (Law Library)

The same Act also requires municipalities to create mechanisms for petitions and complaints, public comment procedures, public meetings and hearings, consultative sessions and report-back to communities. (Law Library)

The legal framework supports participation. The operational challenge is building the system that receives, organises, reviews, responds to and learns from that participation.

This is where many public-sector, NPO and donor-funded teams struggle. The meeting may happen. The submissions may arrive. The dashboard may be published. But if there is no structured feedback workflow behind the process, the evidence becomes hard to use.

CLM versus traditional M&E versus public consultation

Community-Led Monitoring, traditional M&E and public consultation are connected, but they are not the same thing.

| Feature | Traditional M&E | Public consultation | Community-Led Monitoring |
|---|---|---|---|
| Main purpose | Track programme performance | Gather input on a plan, policy or decision | Monitor lived experience and push for service improvement |
| Timing | Periodic or project-based | Often linked to a draft or decision point | Recurring |
| Who defines the questions? | Implementer, funder or evaluator | Usually the institution consulting | Community and affected groups should shape priorities |
| Data type | Indicators, reports, surveys | Written comments, meetings, submissions | Community evidence, service experience, qualitative and quantitative data |
| Main risk | Upward reporting only | Performative participation | Advocacy without enough structure or response discipline |
| Best use | Programme management and reporting | Policy or design legitimacy | Accountability and implementation improvement |

These are not enemies. A strong system can combine all three.

Formal M&E can track programme indicators. Public consultation can gather input at key decision points. CLM can help show whether services, policies or implementation plans are working for the people affected by them.

The issue is not which method sounds better. The issue is whether feedback becomes evidence that can be reviewed, acted on and tracked over time.

What the White Paper process shows about public feedback loops

A national White Paper process gives a useful example of why public feedback needs an evidence workflow.

The Local Government White Paper review is not the same as a full Community-Led Monitoring system. It is better understood as a public participation and feedback-loop example. But it shows the same underlying problem: if public input is not structured, coded, reviewed and linked to decisions, it becomes noise or performative consultation.

As of May 2026, CoGTA had gazetted the Reviewed Draft White Paper on Local Government for public comment, with written submissions invited from members of the public, civil society, organised local government, traditional leadership, business, labour and other stakeholders, and a submission deadline of 28 May 2026. (Cooperative Governance Affairs)

In a process like this, public input can happen at more than one stage.

- Round 1: public and stakeholder input on what is wrong, missing, broken or needed

- Evidence synthesis and drafting support

- Draft White Paper released for public comment

- Round 2: public and stakeholder feedback on whether the draft responds properly

- Coded review-comments database and finalisation support

- Future implementation monitoring and public communication loop

The first round of submissions helps identify what stakeholders, communities and institutions see as the main problems. Those inputs can shape themes, policy issues and drafting priorities.

The second round, after a draft is published, gives the public and stakeholders a chance to test whether the draft understood the problem properly, missed important issues, overemphasised others or failed to respond to realities on the ground.

In the Local Government White Paper evidence workflow, mixed-format submissions, specialist inputs and review comments needed to remain traceable from source to theme, claim, drafting note and later review response. That required controlled intake, source IDs, claims coding, theme coding, quote fields, review flags and a coded review-comments database.
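The traceability described above can be pictured as a chain of linked records. The sketch below is an illustration under stated assumptions, not the structure used in the White Paper workflow. The `Claim` and `DraftingNote` classes, their field names and the example IDs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: str
    source_id: str  # ID assigned to the submission at controlled intake
    theme: str      # coded theme
    quote: str      # verbatim excerpt supporting the claim


@dataclass(frozen=True)
class DraftingNote:
    note_id: str
    text: str
    claim_ids: tuple[str, ...]  # claims this note relies on


def sources_for_note(note: DraftingNote, claims: dict[str, Claim]) -> list[str]:
    """Return the sorted, unique source IDs behind a drafting note.

    Raises KeyError if the note cites a claim missing from the register,
    which is the kind of traceability break this check is meant to surface.
    """
    return sorted({claims[cid].source_id for cid in note.claim_ids})


# Hypothetical example data
claims = {
    "C1": Claim("C1", "SUB-001", "municipal finance", "Billing errors are common."),
    "C2": Claim("C2", "SUB-002", "municipal finance", "Tariffs are not explained."),
    "C3": Claim("C3", "SUB-001", "participation", "Ward meetings are irregular."),
}
note = DraftingNote("N1", "Strengthen billing transparency.", ("C1", "C2", "C3"))
traced = sources_for_note(note, claims)
```

Here `traced` lists the two submissions that sit behind the drafting note, so a reviewer can go back to the original text.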

Public input is not a vote, and it is not automatically representative. Some comments may reflect narrow interests, local frustrations, political positions or personal demands. But that does not make them useless. It means they need to be coded, compared, grouped and reviewed carefully.

The same logic applies after implementation starts.

Once a policy, programme or reform roadmap is being rolled out, communities need a way to say:

“You say this is improving, but this is what we are seeing in our municipality, clinic, school, grant office or ward.”

That is where public participation starts becoming implementation monitoring.

From public comment to implementation monitoring

Consultation should not end when the policy is adopted.

A stronger public feedback system should create a loop between official implementation updates and grounded public feedback. Government, implementing partners or programme teams can publish what is supposed to be happening. Communities, service users and stakeholders can then submit evidence on what they are experiencing.

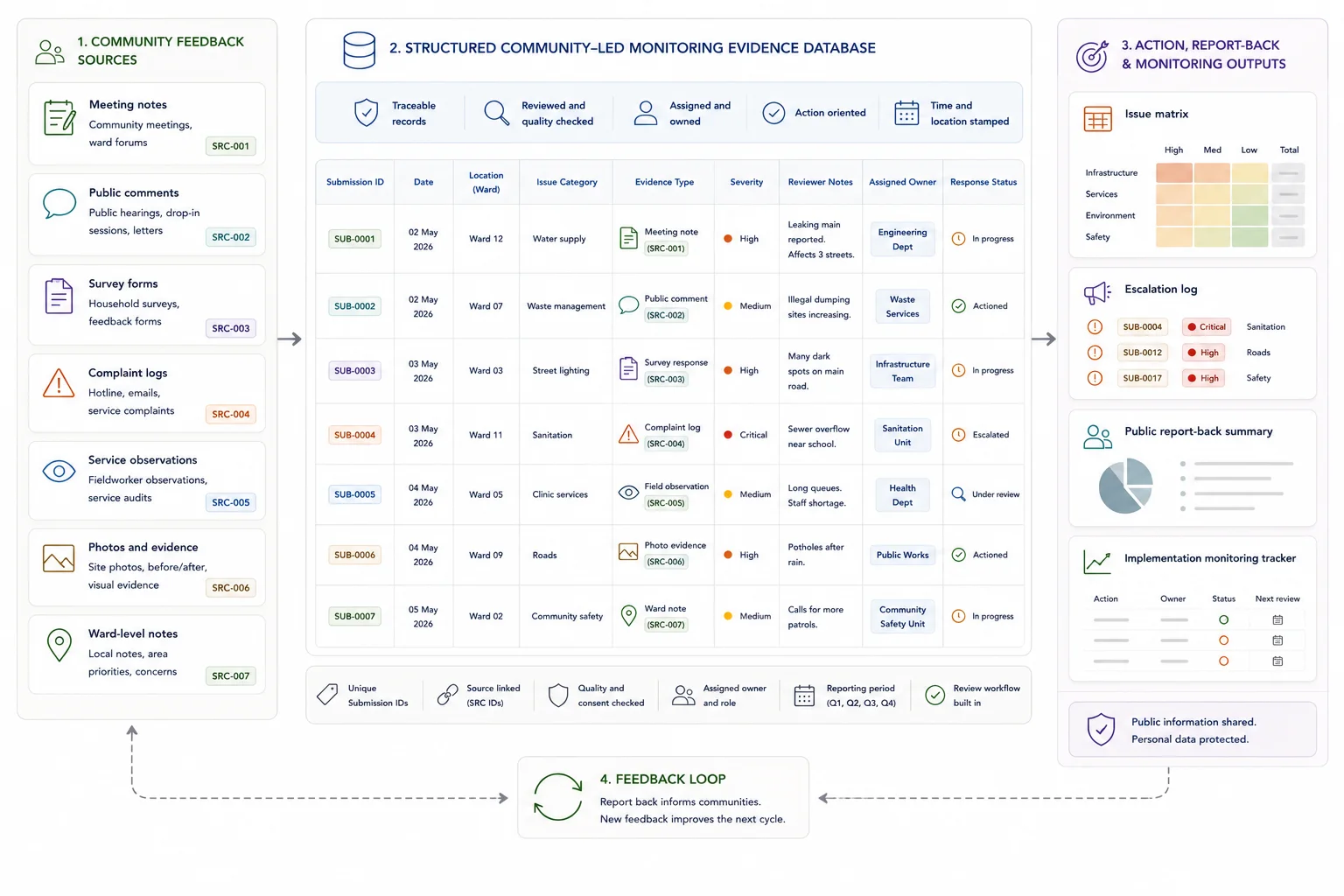

A practical implementation feedback workflow might look like this:

- Implementation update published

- Community feedback submitted

- Feedback tagged by place, theme, reform area and issue type

- Evidence reviewed and deduplicated

- Patterns identified

- Responsible unit assigned

- Response or action logged

- Progress update reported back

- Cycle repeats

This is where public participation becomes implementation intelligence.

A two-way system can help teams see which reforms are landing, where implementation is weak, which municipalities or service points are struggling, and which issues are being raised repeatedly.

For example, comments could be tagged by municipality, ward, theme, service issue, implementation pillar or reform area. Recurring issues could become monitoring signals. Officials could see where implementation is failing on the ground. The public could receive report-back on what was done.

This requires more than a contact form. It requires a structured feedback database.
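As a rough illustration of the tagging, deduplication and pattern steps, the sketch below removes exact repeats and counts recurring issues by municipality and theme. The field names, the example submissions and the threshold of two are assumptions. Matching on normalised text is deliberately naive, so organised duplicate campaigns would still need closer matching and human review.

```python
from collections import Counter


def normalise(text: str) -> str:
    """Lower-case the text and collapse whitespace so trivial variants match."""
    return " ".join(text.lower().split())


def deduplicate(submissions: list[dict]) -> list[dict]:
    """Keep the first submission for each (ward, normalised text) pair."""
    seen = set()
    unique = []
    for sub in submissions:
        key = (sub["ward"], normalise(sub["text"]))
        if key not in seen:
            seen.add(key)
            unique.append(sub)
    return unique


def recurring_signals(submissions: list[dict], min_count: int = 2) -> dict:
    """Count submissions per (municipality, theme) and keep those at or above min_count."""
    counts = Counter((s["municipality"], s["theme"]) for s in submissions)
    return {key: n for key, n in counts.items() if n >= min_count}


# Hypothetical example data
submissions = [
    {"id": "S1", "municipality": "Municipality A", "ward": "Ward 1",
     "theme": "water", "text": "No water for three days"},
    {"id": "S2", "municipality": "Municipality A", "ward": "Ward 1",
     "theme": "water", "text": "no water  for three days "},
    {"id": "S3", "municipality": "Municipality A", "ward": "Ward 4",
     "theme": "water", "text": "Taps dry since Monday"},
    {"id": "S4", "municipality": "Municipality B", "ward": "Ward 2",
     "theme": "refuse", "text": "Refuse not collected"},
]
unique = deduplicate(submissions)
signals = recurring_signals(unique)
```

Frequency is only one signal. A single submission from an underrepresented group can still matter, so a count like this supports review rather than replacing it.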

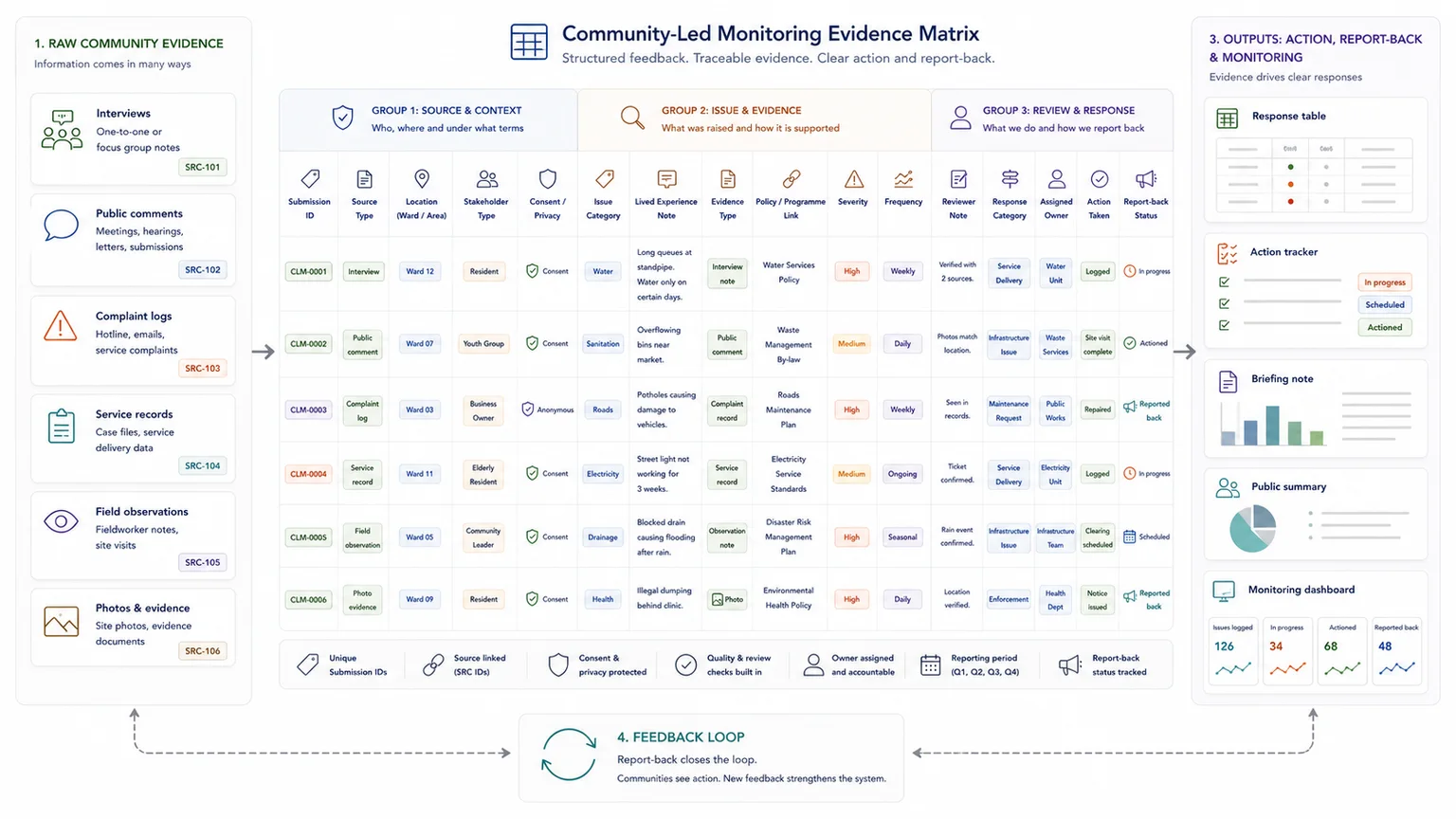

Why a CLM or public feedback platform needs a structured database

The form is not the system. The system is what happens after the form.

A public-facing platform may collect submissions, complaints, service ratings, comments, photos or community observations. But the real value comes from how that information is stored, coded, reviewed, escalated and reported back.

A useful feedback database might include:

| Field | Purpose |
|---|---|
| Submission ID | Unique record for each submission |
| Source type | Community member, organisation, official, service user or partner |
| Consent and privacy status | Shows whether and how the information can be used |
| Geographic fields | Municipality, ward, district, facility or service area |
| Stakeholder type | Broad category of person or organisation submitting |
| Issue category | Service delivery, governance, access, safety, finance or infrastructure |
| Service area | Clinic, school, grant office, ward service, municipal service or programme area |
| Severity or urgency | Low, medium, high or urgent |
| Theme | Main issue or coded topic |
| Related policy section | Chapter, reform area, pillar or implementation commitment |
| Evidence type | Comment, interview, photo, survey, observation or document |
| Quote or excerpt | Selected text for review or reporting |
| Duplicate marker | Shows whether the issue repeats another submission |
| Reviewer notes | Interpretation, caution or evidence quality notes |
| Response status | New, reviewed, accepted, escalated, closed or pending |
| Assigned owner | Person, unit or institution responsible for follow-up |
| Action taken | What was done in response |
| Report-back status | Whether the community or stakeholder was updated |
| Public/private visibility | What can be shown publicly and what must remain internal |

These fields give teams a structured feedback database instead of comments scattered across email inboxes, PDFs and spreadsheets.

It also gives public-sector and programme teams a fairer way to handle feedback. The team can preserve the original comment, code the issue, identify wider patterns, separate individual requests from systemic concerns and decide what needs a drafting response, implementation response or further investigation.
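To make the field list concrete, the sketch below models a subset of those fields as a single record and shows one way to separate public from internal information. It is an illustration only. The `Submission` class, the choice of which fields count as internal and the `public_view` function are assumptions, and a real system would follow its own consent and privacy rules.

```python
from dataclasses import dataclass, asdict
from typing import Optional

# Assumption: these fields stay internal even when a record is public.
INTERNAL_FIELDS = {"consent_status", "reviewer_notes", "assigned_owner"}


@dataclass
class Submission:
    submission_id: str
    source_type: str
    consent_status: str
    municipality: str
    issue_category: str
    theme: str
    excerpt: str
    response_status: str = "new"
    assigned_owner: Optional[str] = None
    reviewer_notes: str = ""
    action_taken: str = ""
    reported_back: bool = False
    public: bool = False  # public/private visibility flag


def public_view(sub: Submission) -> Optional[dict]:
    """Return only the publishable fields, or None if the record is private."""
    if not sub.public:
        return None
    record = asdict(sub)
    return {
        key: value
        for key, value in record.items()
        if key not in INTERNAL_FIELDS and key != "public"
    }


# Hypothetical example record
example = Submission(
    submission_id="S-001",
    source_type="service user",
    consent_status="consent given for anonymised use",
    municipality="Municipality A",
    issue_category="access",
    theme="clinic waiting times",
    excerpt="We queue from 5am and are still turned away.",
    reviewer_notes="Matches two other reports from the same facility.",
    public=True,
)
published = public_view(example)
```

Keeping the original excerpt on the record while holding reviewer notes back is one way to preserve the source and still protect internal deliberation.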

How to handle biased, narrow or conflicting public comments

Community feedback is essential, but it still needs review.

Some comments may be personal, duplicated, inaccurate, contradictory or outside the mandate of the project. Some may reflect narrow self-interest. Others may represent a serious but low-volume concern from a group that is usually underrepresented.

A good evidence workflow does not accept every comment uncritically. It helps teams preserve the comment, code it fairly, identify wider patterns and decide how it should be handled.

Common issues include:

- duplicate campaigns

- narrow self-interest

- misinformation

- contradictory views

- unrealistic demands

- politically motivated submissions

- comments outside the project mandate

- local issues presented as national issues

- emotionally charged but evidence-light claims

- under-representation of quiet or marginalised groups

The answer is not to dismiss public feedback. The answer is to handle it with discipline.

A good review process should:

- keep the original source intact

- code the issue, not only the sentiment

- separate lived experience from policy preference

- tag whether the comment is individual, organisational, technical, community-based or institutional

- track frequency without treating frequency as the only measure of importance

- flag evidence gaps

- use reviewer notes

- preserve minority concerns

- distinguish “needs response” from “needs adoption”

- show why something was accepted, partially accepted, deferred or not taken forward

Useful response categories might include:

| Response category | When it helps |
|---|---|
| Accepted | The comment leads to a clear change |
| Partially accepted | Part of the comment is taken forward |
| Already addressed | The issue is already covered in the draft, plan or policy |
| Requires implementation action | The issue is not a drafting problem but needs follow-up during implementation |
| Requires further evidence | The issue is important but needs more information |
| Outside current scope | The issue is real but not within the current mandate |
| Not adopted, with reason | The comment is reviewed but not taken forward |
| Duplicate or merged | The issue repeats another coded comment |

This is especially important in public consultation analysis, where teams must turn public feedback into themes, findings and review-ready outputs without losing source traceability.
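One way to keep response categories consistent is to fix them as a closed list and require a written reason where a comment is not fully taken forward. The sketch below is an assumption-laden illustration. The category values come from the table above, but `record_response`, the `NEEDS_REASON` rule and the `merged_into` field are hypothetical.

```python
from enum import Enum
from typing import Optional


class ResponseCategory(Enum):
    ACCEPTED = "Accepted"
    PARTIALLY_ACCEPTED = "Partially accepted"
    ALREADY_ADDRESSED = "Already addressed"
    REQUIRES_IMPLEMENTATION_ACTION = "Requires implementation action"
    REQUIRES_FURTHER_EVIDENCE = "Requires further evidence"
    OUTSIDE_CURRENT_SCOPE = "Outside current scope"
    NOT_ADOPTED = "Not adopted, with reason"
    DUPLICATE_OR_MERGED = "Duplicate or merged"


# Assumption: these categories must always carry a written reason.
NEEDS_REASON = {
    ResponseCategory.PARTIALLY_ACCEPTED,
    ResponseCategory.OUTSIDE_CURRENT_SCOPE,
    ResponseCategory.NOT_ADOPTED,
}


def record_response(submission_id: str, category: ResponseCategory,
                    reason: str = "", merged_into: Optional[str] = None) -> dict:
    """Build a response-log entry, enforcing the reason and merge rules above."""
    if category in NEEDS_REASON and not reason.strip():
        raise ValueError(f"'{category.value}' requires a written reason")
    if category is ResponseCategory.DUPLICATE_OR_MERGED and not merged_into:
        raise ValueError("a merged comment must point to the record it was merged into")
    return {
        "submission_id": submission_id,
        "category": category.value,
        "reason": reason.strip(),
        "merged_into": merged_into,
    }
```

For example, `record_response("S-014", ResponseCategory.NOT_ADOPTED)` would raise an error until a reason is supplied, which keeps the "with reason" part of the category from being skipped.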

South African examples of CLM and community accountability

South Africa already has useful examples of community monitoring, social accountability and public evidence being used to push for service improvement.

Ritshidze

Ritshidze is one of South Africa’s clearest Community-Led Monitoring examples. Its monitoring takes place quarterly at more than 450 clinics and community healthcare centres across 25 districts in eight provinces. (Ritshidze)

Ritshidze’s model shows how community data can be collected at scale, analysed and used for advocacy around HIV and TB service delivery. It also shows why CLM needs both community ownership and a strong data system behind it.

Black Sash

Black Sash provides a broader community-based monitoring example beyond health. It says its Community Based Monitoring model recognises communities, citizens and public service users as rights holders rather than passive users of public services, and that independent monitoring provides tangible feedback to government departments to improve service delivery. (blacksash.org.za)

This matters because community monitoring should not only collect complaints. It should help communities gather and use evidence from the service user’s point of view. (Community-Based Monitoring)

Social Justice Coalition

The Social Justice Coalition’s sanitation work in Khayelitsha shows how community evidence can be used for accountability.

International Budget Partnership material describes how the SJC and residents used a social audit of chemical toilets in Khayelitsha to show that communities can participate directly in monitoring service delivery and holding leaders accountable. (International Budget Partnership) Later reporting on a sanitation social audit found that poor planning, management and monitoring undermined janitorial services affecting thousands of toilets across informal settlement communities. (The Mail & Guardian)

These examples are different, but the common thread is clear: community evidence becomes stronger when it is collected systematically, reviewed carefully and linked to action.

Designing a CLM or public feedback workflow

A CLM or public feedback workflow should be designed before the platform, survey or dashboard is built.

1. Define the issue and decision context

Start with the question: what is being monitored?

Examples include:

- clinic access

- grant office service

- municipal service delivery

- policy implementation

- ward-level infrastructure

- school support services

- public consultation response

- White Paper implementation roadmap

The team should know whether the process is meant to inform drafting, identify implementation gaps, support advocacy, guide resource allocation, improve services or report back to communities.

2. Co-design the monitoring questions

The community should help define what matters.

Ask:

- What does the community experience?

- What does the policy or programme promise?

- What evidence would show progress?

- What should be escalated?

- What can be resolved locally?

- What should be monitored repeatedly?

Without this step, the system may measure what institutions want to report, not what communities need to see changed.

3. Build the data structure before the form

Do not start with a form builder. Start with fields, categories, review rules and output needs.

The team should define:

- what must be captured

- which categories will be used

- which fields are required

- what should remain private

- what can be shown publicly

- who reviews submissions

- how issues are escalated

- what reports need to be produced

This is where a system can be designed for decisions, not only data collection.
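Defining fields and categories first means a form can be checked against them later. The sketch below shows one simple way to do that, assuming a hypothetical set of required fields. The allowed values for issue category and severity are taken from the field tables in this guide, and everything else is illustrative.

```python
# Assumption: these four fields are treated as required for this example.
REQUIRED_FIELDS = ("submission_id", "municipality", "issue_category", "consent_status")

ALLOWED_VALUES = {
    "issue_category": {
        "service delivery", "governance", "access",
        "safety", "finance", "infrastructure",
    },
    "severity": {"low", "medium", "high", "urgent"},
}


def validate(record: dict) -> list[str]:
    """Return a list of problems found in a record; an empty list means it passes."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not str(record.get(field, "")).strip():
            problems.append(f"missing required field: {field}")
    for field, allowed in ALLOWED_VALUES.items():
        value = record.get(field)
        # Empty or absent values are handled by the required-field check above.
        if value and value not in allowed:
            problems.append(f"{field}: '{value}' is not an allowed value")
    return problems


# Hypothetical example records
good = {
    "submission_id": "S-001",
    "municipality": "Municipality A",
    "issue_category": "access",
    "consent_status": "granted",
    "severity": "high",
}
bad = {"submission_id": "S-002", "issue_category": "roads"}
good_problems = validate(good)
bad_problems = validate(bad)
```

Returning a list of problems, rather than rejecting the record outright, lets a reviewer fix a coding error without losing the original submission.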

4. Collect mixed evidence

Public feedback is not limited to one format.

Useful sources can include:

- surveys

- structured forms

- interviews

- public comments

- community meetings

- observation checklists

- complaint logs

- ward-level notes

- service records

- photos, where appropriate and ethical

The method should fit the context, the sensitivity of the issue and the capacity of the team.

5. Code and review the evidence

Raw feedback needs structure before it can support action.

Submissions can be tagged by:

- geography

- theme

- service issue

- severity

- source type

- stakeholder group

- policy section

- implementation pillar

- response status

This allows the team to identify repeated issues, compare feedback across locations and prepare response tables.
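Once submissions carry consistent tags, comparing feedback across locations becomes a counting exercise. The sketch below builds a small cross-tabulation of theme by municipality. The `cross_tab` function, the field names and the example records are hypothetical.

```python
from collections import defaultdict


def cross_tab(records: list[dict], row_key: str, col_key: str) -> dict:
    """Count records for each combination of row_key and col_key values."""
    table = defaultdict(lambda: defaultdict(int))
    for rec in records:
        table[rec[row_key]][rec[col_key]] += 1
    # Convert to plain dicts so the result is easy to print or export.
    return {row: dict(cols) for row, cols in table.items()}


# Hypothetical coded submissions
coded = [
    {"theme": "water", "municipality": "Municipality A", "severity": "high"},
    {"theme": "water", "municipality": "Municipality A", "severity": "urgent"},
    {"theme": "water", "municipality": "Municipality B", "severity": "medium"},
    {"theme": "billing", "municipality": "Municipality B", "severity": "low"},
]
by_theme_and_place = cross_tab(coded, "theme", "municipality")
```

The same function can be pointed at any two tags, for example severity by stakeholder group, which is often the starting point for a response table.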

6. Feed findings back to decision-makers

Evidence should be turned into practical outputs.

These might include:

- issue matrices

- dashboards

- response tables

- briefing notes

- recommendation lists

- escalation logs

- public report-back summaries

This is where teams can identify patterns, risks and implementation priorities, rather than only describing what people said.

7. Track action and report back

The cycle is not complete until people know what happened with the feedback.

Report-back can include:

- what was heard

- what was accepted

- what was changed

- what still needs investigation

- what falls outside the mandate

- what will be monitored next

Without report-back, even a well-run consultation process can damage trust.
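A response log makes it possible to check whether report-back has actually happened. The sketch below counts records by response category and lists the submissions still waiting for an update. The field names and example records are assumptions made for illustration.

```python
from collections import Counter


def report_back_summary(records: list[dict]) -> dict:
    """Count records per response category and list those awaiting report-back."""
    by_category = Counter(r["response_category"] for r in records)
    awaiting = [r["submission_id"] for r in records if not r["reported_back"]]
    return {"by_category": dict(by_category), "awaiting_report_back": awaiting}


# Hypothetical response log
log = [
    {"submission_id": "S-001", "response_category": "Accepted", "reported_back": True},
    {"submission_id": "S-002", "response_category": "Accepted", "reported_back": False},
    {"submission_id": "S-003", "response_category": "Outside current scope", "reported_back": False},
]
summary = report_back_summary(log)
```

A non-empty `awaiting_report_back` list is a prompt for the team, not a finding in itself. Someone still has to decide how and when each group is updated.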

What a CLM evidence matrix should include

A CLM evidence matrix gives the team a consistent way to move from raw feedback to decisions.

| Field | Purpose |
|---|---|
| Submission ID | Unique record |
| Source type | Community member, organisation, official or service user |
| Location | Municipality, ward, district or facility |
| Issue category | Service delivery, governance, access, safety, finance or infrastructure |
| Lived experience note | What the person says is happening |
| Evidence type | Comment, interview, photo, survey, observation or document |
| Policy or programme link | Which plan, chapter, reform area or service standard it relates to |
| Severity | Low, medium, high or urgent |
| Frequency | One-off, recurring or widespread |
| Reviewer note | Interpretation and evidence quality |
| Response category | Accepted, partially accepted, outside scope or needs further evidence |
| Assigned owner | Who should respond or investigate |
| Action taken | What changed |
| Report-back status | Whether the community has been updated |

This type of matrix makes public feedback easier to analyse, and it also protects the integrity of the process.

It allows teams to trace a finding back to source material, check how comments were coded, review why a response category was chosen and prepare public-facing summaries.

Once the evidence is reviewed, teams can turn it into public-facing reporting outputs that show what was heard, what changed and what still needs attention.
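An evidence matrix like this is often kept as a spreadsheet, so a fixed column order helps keep exports consistent between review rounds. The sketch below writes matrix rows to CSV using Python's standard `csv` module. The column names are hypothetical machine-friendly versions of the fields in the table above, and rejecting unexpected columns is a design assumption.

```python
import csv
import io

MATRIX_COLUMNS = [
    "submission_id", "source_type", "location", "issue_category",
    "lived_experience_note", "evidence_type", "policy_link", "severity",
    "frequency", "reviewer_note", "response_category", "assigned_owner",
    "action_taken", "report_back_status",
]


def matrix_to_csv(rows: list[dict]) -> str:
    """Write rows to CSV text with a fixed header.

    Missing fields are left blank; a row with a column that is not in
    MATRIX_COLUMNS raises ValueError so stray fields are noticed early.
    """
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=MATRIX_COLUMNS, restval="", extrasaction="raise"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical example row
csv_text = matrix_to_csv([
    {"submission_id": "S-001", "location": "Ward 1", "severity": "high"},
])
```

Because every export has the same columns in the same order, a reviewer can compare two rounds of feedback without first reconciling the layout.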

Common mistakes to avoid

Treating consultation as a once-off event

A public meeting or comment period may be useful, but accountability depends on what happens after the feedback is received.

Collecting comments without a coding structure

If comments are not coded by theme, location, service issue or decision area, they become difficult to review fairly.

Publishing a dashboard without a response process

A dashboard can show problems, but it does not solve them. Someone still needs to review, assign, respond and track action.

Treating all comments as equally representative

One comment does not automatically represent a whole community. Frequency, source type, evidence quality and context all matter.

Ignoring minority concerns because they are low-volume

Low-volume feedback can still reveal serious issues, especially for marginalised groups.

Failing to distinguish complaints from evidence

A complaint may contain evidence, but it still needs review. The workflow should preserve the original comment while coding the issue fairly.

Not linking feedback to policy sections or implementation pillars

Feedback is easier to act on when it is connected to a specific chapter, service standard, reform area or delivery commitment.

Letting comments disappear after the draft is finalised

If public input is only used during drafting, the process loses a chance to monitor implementation.

Using AI to summarise feedback without source traceability

AI can help group comments, detect themes and prepare first-pass summaries. It should not replace source-linked review. Teams should use AI carefully for retrieval and review support, with clear coding rules and human checks.
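One practical guardrail is to check that every summarised theme points back to submissions that exist in the register. The sketch below assumes a summary has already been produced, by a person or a tool, as a list of themes with cited source IDs. It does not call any AI service, and the function name and data shapes are hypothetical.

```python
def check_summary_traceability(summary_items: list[dict], known_ids: set) -> list[tuple]:
    """Flag summary themes that cite no sources or cite IDs not in the register."""
    problems = []
    for item in summary_items:
        cited = item.get("source_ids", [])
        if not cited:
            problems.append((item["theme"], "no sources cited"))
            continue
        unknown = sorted(set(cited) - known_ids)
        if unknown:
            problems.append((item["theme"], f"unknown source IDs: {', '.join(unknown)}"))
    return problems


# Hypothetical register and first-pass summary
register_ids = {"SUB-001", "SUB-002", "SUB-003"}
draft_summary = [
    {"theme": "water outages", "source_ids": ["SUB-001", "SUB-003"]},
    {"theme": "billing errors", "source_ids": []},
    {"theme": "ward meetings", "source_ids": ["SUB-002", "SUB-009"]},
]
issues = check_summary_traceability(draft_summary, register_ids)
```

A check like this only confirms that the links exist. A human reviewer still needs to read the cited submissions and confirm they say what the summary claims.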

Failing to report back to the public

Report-back is not an extra step. It is part of the accountability loop.

Before a major public consultation or implementation feedback process, teams can check whether their evidence trail is strong enough.

When to get external help

External support is useful when public feedback is too large, sensitive or mixed-format to manage manually.

That often happens when:

- submissions arrive in PDFs, emails, spreadsheets and meeting notes

- comments need to be coded by theme, issue, chapter or reform area

- public feedback needs to be linked to drafting or implementation decisions

- the team needs a response matrix

- there is too much material to review manually

- source traceability matters

- the public will comment more than once

- implementation feedback must be tracked over time

- the team wants AI support but needs review guardrails

I help public-sector, policy, research and donor-funded teams build the evidence workflow behind public feedback. That can include submission registers, claims databases, issue matrices, response matrices, source locators, coded review-comment databases, AI-supported retrieval, synthesis packs and reporting outputs. For formal public-comment volumes, the Public Submission Analysis System is the closest productised fit.

For large consultation processes, it may also help to estimate whether your team can handle the volume of submissions before the deadline arrives.
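A rough capacity estimate needs only a few numbers. The sketch below compares the review hours a set of submissions would need with the hours a team has available. Every figure in the example is hypothetical, and real review time varies widely with submission length and format.

```python
import math


def review_capacity(submissions: int, minutes_each: float, reviewers: int,
                    hours_per_day: float, working_days: int) -> dict:
    """Compare the review hours needed with the hours available before a deadline."""
    needed_hours = submissions * minutes_each / 60
    daily_capacity = reviewers * hours_per_day
    available_hours = daily_capacity * working_days
    return {
        "needed_hours": needed_hours,
        "available_hours": available_hours,
        "days_needed": math.ceil(needed_hours / daily_capacity),
        "fits": needed_hours <= available_hours,
    }


# Hypothetical scenario: 1,200 submissions at 15 minutes each,
# 3 reviewers working 5 review hours a day for 15 working days.
estimate = review_capacity(1200, 15, 3, 5, 15)
```

In this scenario the team would need 300 review hours but has 225 available, so the work would take about 20 working days rather than 15. That gap is the signal to add reviewers, narrow the coding scheme or extend the timeline.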

FAQ

What is Community-Led Monitoring?

Community-Led Monitoring is a recurring process where affected communities help monitor services, identify problems, analyse evidence, engage duty-bearers and push for action.

How is CLM different from normal M&E?

Traditional M&E often focuses on programme reporting. CLM focuses on community experience, social accountability and whether services are working for the people who use them.

Is public consultation the same as CLM?

No. Public consultation usually gathers input at a specific point in a policy or programme process. CLM is more continuous. But both need structured systems to capture, review, respond to and report back on public input.

How can CLM help policy implementation?

CLM can help show whether policy promises are being experienced on the ground. It gives communities a way to report implementation gaps and gives decision-makers a clearer evidence base for follow-up.

Should every public comment be accepted?

No. Public comments should be preserved and reviewed, but not every comment should be adopted. A good evidence workflow helps distinguish repeated concerns, narrow demands, evidence-backed issues, implementation gaps and comments that fall outside the mandate.

Can AI help analyse public feedback?

Yes, but only with guardrails. AI can help group comments, detect themes and prepare first-pass summaries. Human review, source traceability and clear coding rules must stay in the workflow.

Public feedback should become usable evidence

Community-Led Monitoring is not only community engagement. It is a structured feedback and accountability system.

The same principle applies to public consultation, policy review, implementation monitoring and social accountability work. Feedback only becomes useful when it is captured, structured, coded, reviewed, responded to and monitored over time.

For South African NPOs, public-sector teams and donor-funded programmes, the practical question is not only “Did we ask people?” It is also:

- Can we show what people said?

- Can we trace the feedback back to source material?

- Can we identify patterns across places and groups?

- Can we show how comments were reviewed?

- Can we explain what changed?

- Can we report back?

That is what turns public voice into evidence and action.

Need to turn public feedback into a usable evidence workflow?

I help public-sector, policy, research and donor-funded teams structure submissions, comments, community feedback and implementation evidence so they can be reviewed, traced, synthesised and turned into clearer reporting outputs.

Send a short project brief, or start by checking whether your current submission process can handle the volume and complexity of public input.
