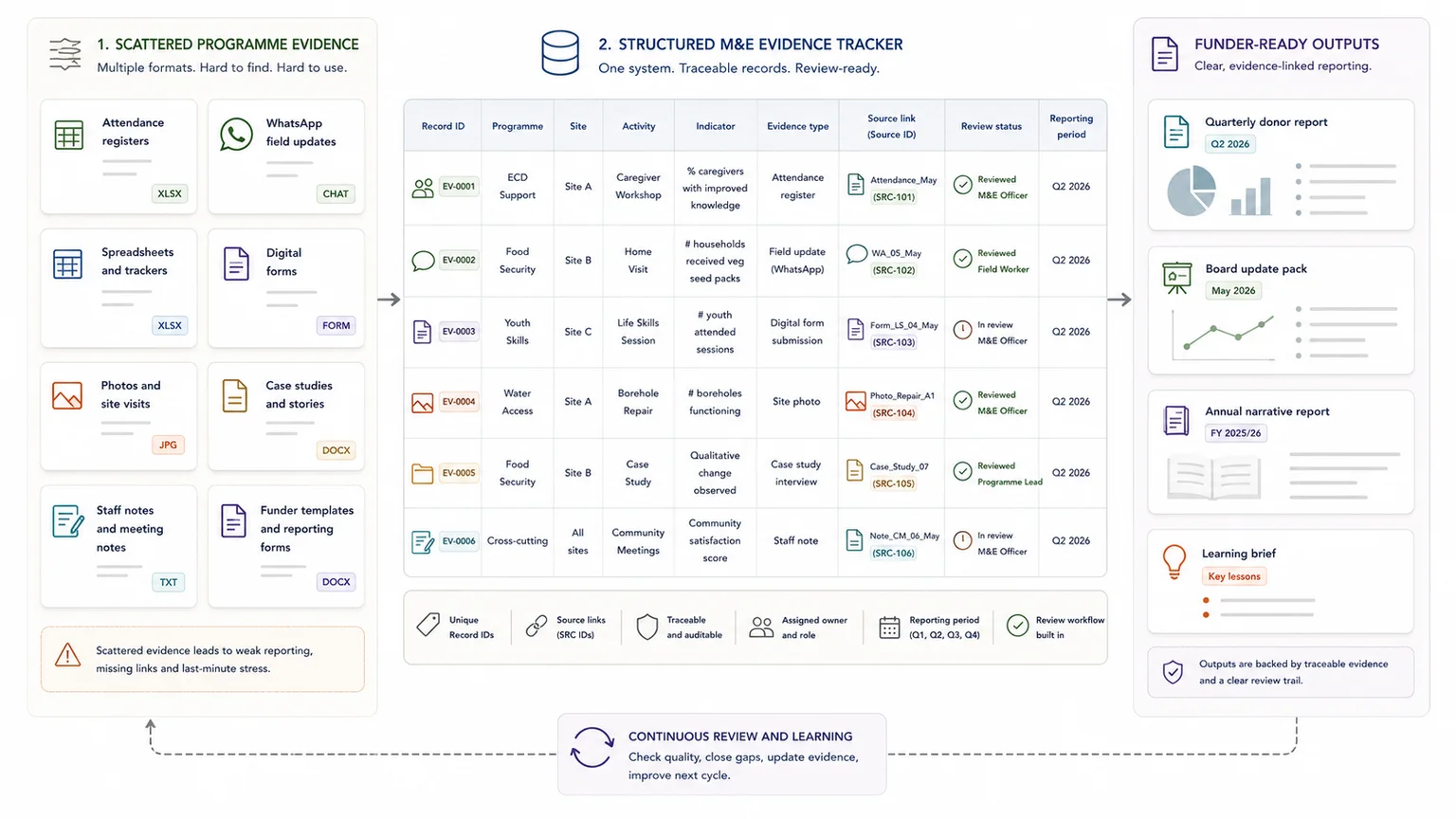

Many South African NPOs do collect monitoring information. The problem is that the information often sits across attendance registers, spreadsheets, WhatsApp updates, forms, photos, case studies, staff notes, funder templates, and annual reports. When reporting time arrives, the team has to rebuild the story manually.

This is where M&E reporting workflows for South African NPOs become practical. Monitoring and evaluation should help teams understand what is happening, what is changing, what evidence supports those changes, and what needs to be reported.

A useful M&E setup is more than a list of indicators. It is a working route from programme activity to evidence, review, reporting, learning, and accountability.

This guide explains how South African NPOs, NGO programme teams, and donor-funded organisations can think about M&E as a reporting workflow, not a last-minute reporting task.

Quick answer

M&E reporting workflows help NPOs move from scattered monitoring data, field notes, case studies, and programme records into traceable evidence and funder-ready reports. The goal is not more data; it is a usable route from activity to evidence, review, reporting, and learning.

Who this guide is for

South African NPOs, NGO programme teams, and donor-funded organisations that need monitoring data and field evidence to support clearer reporting.

Why M&E matters for South African NPOs

Monitoring and evaluation for NPOs in South Africa is often linked to funder reporting, but it should support more than donor compliance.

A practical M&E workflow helps an organisation account to communities, boards, funders, management teams, partners, and regulators. It also helps staff learn what is working, what is not working, and where a programme needs adjustment.

For many teams, the biggest gain is practical. A clear programme reporting system reduces the scramble before quarterly donor reports, funding renewals, board packs, annual narrative reports, or impact summaries. The team does not need to search through old registers, folders, emails, and WhatsApp messages every time a report is due.

Registered NPOs in South Africa must submit annual reports within nine months after financial year-end, and the South African Government says the annual report should consist of a narrative report and a financial report. (Government of South Africa) DSD guidance also refers to a narrative report, annual financial statement, and accounting officer’s report, and says non-compliance after notice can lead to cancellation of registration status. (Department of Social Development)

South Africa also has an active monitoring and evaluation ecosystem. SAMEA describes itself as a voluntary organisation that promotes monitoring and evaluation as a discipline and instrument for equitable and sustainable development in South Africa and beyond. (SAMEA)

M&E is not only something an NPO does after a project. It should shape how the project collects, stores, reviews, and uses evidence from the start.

Monitoring, evaluation, and reporting are connected but different

Monitoring

Monitoring is the ongoing tracking of what happens during implementation.

For an NPO, this can include:

- attendance records

- number of participants reached

- number of sessions delivered

- services provided

- referrals made

- follow-up visits completed

- issues reported by field staff

- beneficiary feedback collected during the programme

The DPME National Evaluation Policy Framework describes monitoring as the continuous collecting, analysing, and reporting of data in a way that supports effective management and gives managers regular feedback on implementation and results.

Put simply, monitoring tells you what is happening while the work continues.

Evaluation

Evaluation asks deeper questions about the value and results of the work.

For example:

- Did the programme reach the right people?

- Did the intervention contribute to the intended change?

- What worked, for whom, and under what conditions?

- What should change before the next phase?

- Were resources used well?

- Are the results likely to last?

DPME defines evaluation as the systematic collection and objective analysis of evidence on policies, programmes, projects, functions, or organisations to assess issues such as relevance, performance, value for money, impact, and sustainability.

For donor-funded programmes, the OECD DAC criteria can be useful lenses: relevance, coherence, effectiveness, efficiency, impact, and sustainability. The OECD is clear that these criteria are not a methodology; they are questions to help assess an intervention and should be used with judgement. (OECD)

Reporting

Reporting turns monitoring and evaluation evidence into outputs for funders, boards, management, communities, partners, or regulators.

These outputs can include:

- donor reports

- annual narrative reports

- board reports

- impact reports

- programme learning briefs

- case study packs

- funding renewal evidence

- dashboards or summary tables

A reporting problem usually starts earlier than the report. It often starts when data is captured inconsistently, saved in the wrong place, or disconnected from the indicators and outcomes it is meant to support.

The real M&E problem is often the workflow

Many NPOs do not struggle because they have no information. They struggle because the information is scattered.

Attendance registers are saved as PDFs or photos. Beneficiary stories sit in Word documents. Fieldworker notes are in WhatsApp. Donor indicators live in one spreadsheet. Programme photos sit in Google Drive. Case studies are written after the fact from memory. Quarterly reports are rebuilt from scratch. The same statistics are copied into different templates.

Then, when someone asks where a claim came from, the team has to hunt for the original record.

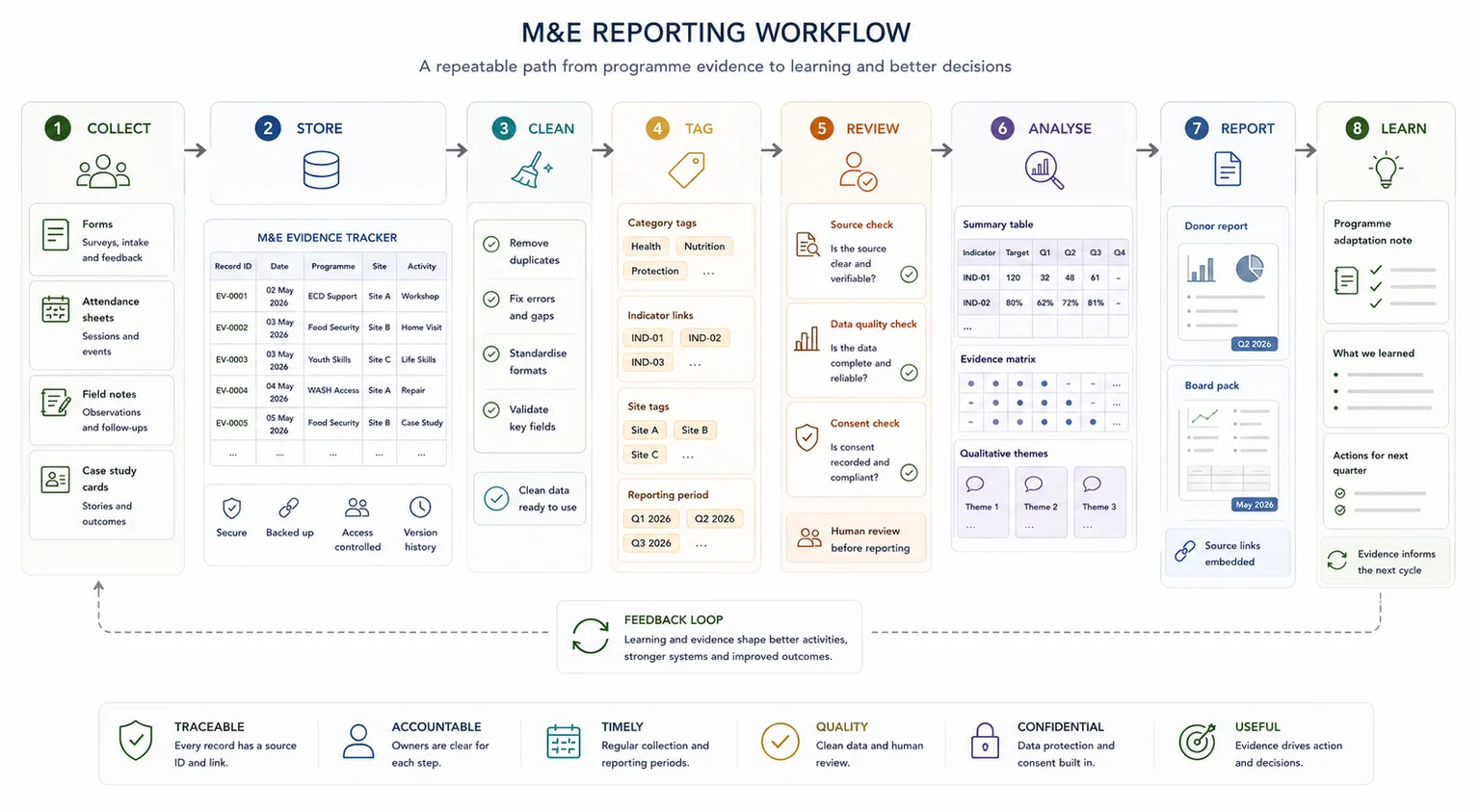

A better evidence workflow for donor reporting should move through a clear sequence:

- Collect

- Store

- Clean

- Tag

- Review

- Analyse

- Report

- Learn

This does not mean every NPO needs a large platform. In many cases, a well-designed spreadsheet, standard forms, clean folders, source links, review fields, and reporting templates can solve the first reporting bottleneck.

The workflow matters because reporting is not only about writing. It is about being able to find, check, summarise, and explain the evidence behind the report.

What a useful M&E reporting workflow should include

1. A clear programme logic

A basic programme logic and Theory of Change help the team connect programme activity to reporting evidence:

- Inputs

- Activities

- Outputs

- Outcomes

- Impact

The CDC explains that logic models show the connection between programme activities and intended outcomes, with common elements including activities and outcomes, and related terms such as inputs and outputs. (CDC)

For example:

- Inputs: staff, volunteers, funding, training materials, venues, transport

- Activities: workshops, home visits, counselling, food parcels, mentoring sessions

- Outputs: number of sessions delivered, people reached, materials distributed

- Outcomes: improved knowledge, behaviour change, better access to services

- Impact: broader long-term change, which is harder to prove and often needs deeper evaluation

This helps the team avoid mixing up outputs, outcomes, and impact in reports.

2. A small set of useful indicators

Indicators should be specific enough to collect and useful enough to report.

Too many indicators can make reporting heavier without improving the evidence. Too few can leave the team unable to explain what changed.

Useful indicators might include:

- number of participants enrolled

- attendance rate

- number of referrals completed

- percentage of participants completing the programme

- number of households reached

- number of follow-up contacts completed

- beneficiary satisfaction rating

- change in knowledge or confidence before and after training

- number of case studies with verified source notes

The test is simple: can the team collect the indicator consistently, and will it help the organisation explain what happened?
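Several of these indicators are simple calculations once the underlying counts are captured consistently. The sketch below shows one way to compute them. The function names, the rounding, and the paired pre/post scoring are illustrative assumptions, not a prescribed method.

```python
def attendance_rate(attended: int, expected: int) -> float:
    """Percentage of expected attendances that actually happened."""
    if expected <= 0:
        raise ValueError("expected must be greater than zero")
    return round(100 * attended / expected, 1)


def completion_rate(completed: int, enrolled: int) -> float:
    """Percentage of enrolled participants who completed the programme."""
    if enrolled <= 0:
        raise ValueError("enrolled must be greater than zero")
    return round(100 * completed / enrolled, 1)


def average_change(pre_scores: list[float], post_scores: list[float]) -> float:
    """Mean per-participant change between paired pre and post scores.

    Assumes both lists are in the same participant order (hypothetical setup).
    """
    if not pre_scores or len(pre_scores) != len(post_scores):
        raise ValueError("scores must be paired and non-empty")
    changes = [post - pre for pre, post in zip(pre_scores, post_scores)]
    return round(sum(changes) / len(changes), 2)


# Example figures are made up for illustration only.
print(attendance_rate(attended=168, expected=200))
print(completion_rate(completed=36, enrolled=45))
print(average_change([2, 3, 3], [4, 4, 5]))
```

A before-and-after average like this describes change among the participants measured. On its own it does not show that the programme caused the change.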

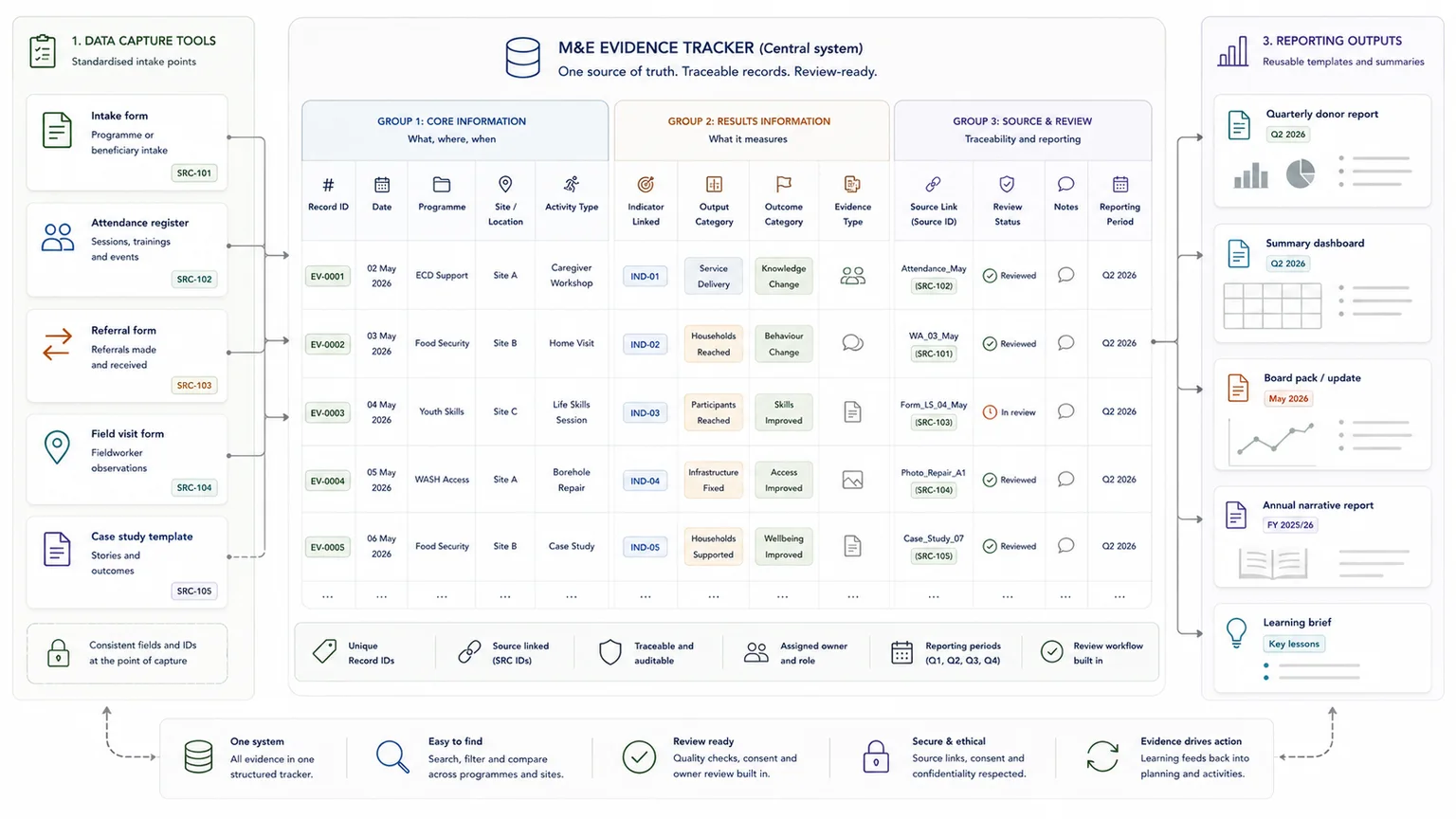

3. Standard data capture

Every recurring activity should have a standard way to capture information.

This might include an attendance form, intake form, referral form, field visit form, post-session feedback form, case study template, issue log, or partner reporting template.

For some teams, Google Forms, Microsoft Forms, Excel, or Google Sheets will be enough. For field teams working in low-connectivity settings, KoboToolbox can be useful. KoboToolbox supports browser-based web forms and KoboCollect, and both methods support offline data collection. (KoboToolbox Support)

The tool matters less than the structure. A form should capture the fields the report will need later: date, site, activity, indicator, participant group, evidence type, source link, and review status.
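As a rough illustration, a form export could be checked for those fields before a record enters the tracker. This is a minimal sketch: the snake_case field names and the `missing_fields` helper are assumptions made for the example, not part of any specific form tool.

```python
# Field names mirror the list above; the exact spelling is an assumption.
REQUIRED_FIELDS = (
    "date",
    "site",
    "activity",
    "indicator",
    "participant_group",
    "evidence_type",
    "source_link",
    "review_status",
)


def missing_fields(record: dict) -> list[str]:
    """Return the required fields that are absent or blank in a record."""
    return [
        name
        for name in REQUIRED_FIELDS
        if not str(record.get(name) or "").strip()
    ]


# A hypothetical submission with gaps.
submission = {"date": "2024-05-14", "site": "Site A", "activity": "workshop"}
print(missing_fields(submission))
```

Running a check like this at capture time is usually cheaper than chasing missing details when the report is due.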

4. One structured database or tracker

M&E data management breaks down when records land in inboxes, folders, and separate spreadsheets with no shared structure.

A useful tracker should include fields such as:

- record ID

- date

- programme or project

- site or location

- activity type

- participant or household ID, where appropriate and ethical

- indicator linked

- output category

- outcome category

- evidence type

- source document or file link

- review status

- notes

- reporting period

A spreadsheet can be a proper system if the fields, status rules, source links, and outputs are designed properly.

The goal is not to make the database complicated. The goal is to make it easy for staff to find, check, summarise, and report the evidence.
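For teams that want to see the structure written down, the tracker fields above can be sketched as a single record type that exports to a spreadsheet-friendly CSV. The class name, field names, and the `to_csv` helper are hypothetical. A well-designed spreadsheet with the same columns does the same job.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class TrackerRecord:
    record_id: str
    date: str
    programme: str
    site: str
    activity_type: str
    participant_id: str  # leave blank where collecting it is not appropriate
    indicator: str
    output_category: str
    outcome_category: str
    evidence_type: str
    source_link: str
    review_status: str
    notes: str
    reporting_period: str


def to_csv(records: list[TrackerRecord]) -> str:
    """Write records to CSV text with one column per tracker field."""
    buffer = io.StringIO(newline="")
    columns = [f.name for f in fields(TrackerRecord)]
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()
```

Keeping the column order fixed means every export lines up with the summary tabs and templates that read from it.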

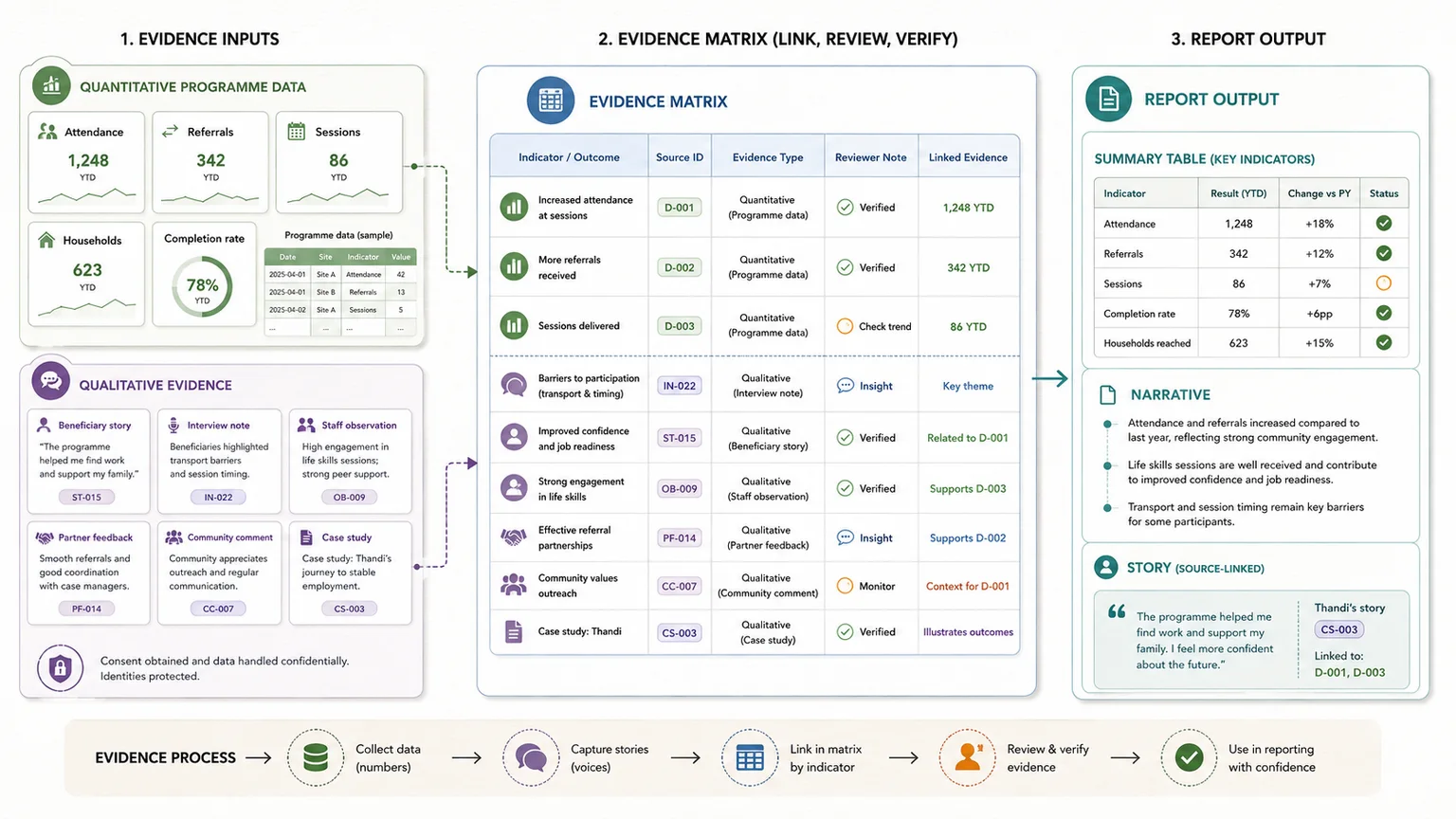

5. Qualitative evidence, not only numbers

NPO reports often need both numbers and stories.

Numbers can show reach, attendance, completion, referrals, and service delivery. Qualitative evidence can explain what changed, what participants experienced, what barriers remained, and what staff observed.

Qualitative evidence can include:

- beneficiary stories

- interview notes

- focus group notes

- staff observations

- photos with captions and consent records

- partner feedback

- community comments

- case studies

- open-ended survey answers

This material should not sit as loose stories. It should be structured with fields such as source ID, quote or excerpt, respondent type, location, theme, outcome area, consent status, report use, and reviewer note.
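One way to picture this is a small record per quote or excerpt, grouped by theme once consent is confirmed. The sketch below is illustrative only: the `Excerpt` class, the `"granted"` consent value, and the grouping helper are assumptions, and a spreadsheet with the same columns would work equally well.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Excerpt:
    source_id: str
    excerpt: str
    respondent_type: str
    location: str
    theme: str
    outcome_area: str
    consent_status: str  # assumed values: "granted", "pending", "declined"
    report_use: str
    reviewer_note: str = ""


def usable_by_theme(excerpts: list[Excerpt]) -> dict[str, list[str]]:
    """Group source IDs by theme, keeping only excerpts with consent granted."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for item in excerpts:
        if item.consent_status == "granted":
            grouped[item.theme].append(item.source_id)
    return dict(grouped)
```

The output is a list of source IDs per theme, so a report writer can go from a theme straight back to the original notes.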

This is where teams can turn field notes, case studies, and programme records into structured findings instead of trying to write reports from scattered notes.

6. Review and source traceability

Source traceability for reports means the team can show where a claim came from.

A weak claim might read:

The programme improved household wellbeing.

A stronger reporting workflow would link that claim to attendance records, post-session feedback, fieldworker notes, and coded beneficiary case studies.

If the report makes a claim, the team should be able to find the source behind that claim.

This improves internal review, reduces reporting risk, and helps funders see that evidence has been checked. It also protects the team from relying on memory when a report is due.

Before a major donor report, annual report, or funding renewal, teams can check whether their evidence trail is strong enough.
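A pre-submission check along these lines can be sketched in a few lines. The example assumes each report claim is listed with the source IDs behind it, and that each source has a review status where `"reviewed"` means checked. Both the structure and the status value are assumptions for illustration.

```python
def weak_claims(
    claims: dict[str, list[str]],
    evidence_status: dict[str, str],
) -> dict[str, list[str]]:
    """Return claims that have no linked source, or sources not yet reviewed."""
    problems: dict[str, list[str]] = {}
    for claim, source_ids in claims.items():
        if not source_ids:
            problems[claim] = ["no source linked"]
            continue
        # A source missing from the evidence table counts as unreviewed.
        unchecked = [
            sid for sid in source_ids if evidence_status.get(sid) != "reviewed"
        ]
        if unchecked:
            problems[claim] = unchecked
    return problems


# Hypothetical source IDs and claims.
evidence_status = {"ATT-014": "reviewed", "FB-102": "pending"}
claims = {
    "Attendance improved at Site A.": ["ATT-014"],
    "Participants reported more confidence.": ["FB-102", "CS-007"],
    "The programme improved household wellbeing.": [],
}
for claim, issues in weak_claims(claims, evidence_status).items():
    print(claim, "->", issues)
```

The check only confirms that a reviewed source exists. Whether the source actually supports the claim still needs a human reviewer.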

7. Reporting templates

NPOs should not rebuild every report from scratch.

A reusable reporting template can pull from summary tables, coded evidence, reviewed case studies, and standard narrative sections.

Useful templates include:

- monthly programme update

- quarterly donor report

- annual narrative report preparation pack

- board report

- case study summary

- indicator table

- outcome summary

- lessons learned section

- risks and blockers section

This is where teams can build report-ready outputs from structured evidence, instead of copying and pasting under pressure.
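To make the idea of a reusable template concrete, here is a minimal sketch using Python's built-in `string.Template`. The template wording, the placeholder names, and the sample figures are invented for illustration. A Word or Google Docs template with merge fields follows the same logic.

```python
from string import Template

# Placeholder names are assumptions; use whatever the funder template needs.
QUARTERLY_TEMPLATE = Template(
    "Reporting period: $period\n"
    "Sessions delivered: $sessions\n"
    "Participants reached: $participants\n"
    "Key lesson: $lesson\n"
)


def render_report(summary: dict) -> str:
    """Fill the template from a summary table.

    substitute() raises KeyError if a placeholder has no value,
    which flags a gap before the report goes out.
    """
    return QUARTERLY_TEMPLATE.substitute(summary)


# Sample values are made up.
summary = {
    "period": "2024-Q2",
    "sessions": 24,
    "participants": 112,
    "lesson": "Attendance dropped during exam weeks.",
}
print(render_report(summary))
```

Failing loudly on a missing value is a deliberate choice here: an empty field in a donor report is harder to spot than an error during preparation.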

What South African NPOs need for annual narrative reporting

Programme evidence is useful for funders, but it also supports annual reporting.

Registered NPOs must submit annual reports within nine months after financial year-end. The South African Government says the annual report should consist of a narrative report and financial report. (Government of South Africa) DSD guidance also refers to the narrative report, annual financial statement, accounting officer’s report, and updates to the organisation’s constitution, address, and office bearers where relevant. (Department of Social Development)

The NPO regulations state that an annual narrative report must specify the period under review and describe the organisation’s major projects, including details on activities, benefits, beneficiaries, and links to the organisation’s objectives. (SAFLII)

A better M&E workflow can make this easier because the team already has records of:

- major projects

- activities delivered

- beneficiaries reached

- benefits or outcomes

- objectives linked to the constitution or programme plan

- governance and meeting records

- office bearer or administrative changes

- supporting evidence for what happened

This article does not replace DSD guidance, legal advice, or accounting advice. Registered NPOs should check the latest DSD guidance and work with their accounting officer or adviser where needed.

Common M&E workflow problems in South African NPOs

Data is collected but not structured

The team collects information, but no one can use it easily later.

The fix is usually not more data. It is better structure: standard forms, required fields, clean trackers, unique IDs, and source links.

Indicators are not linked to the evidence

Reports list indicators, but the source evidence sits elsewhere.

A better setup uses indicator mapping, source IDs, evidence tables, and reporting-period fields. This helps the team connect what was reported to the records behind it.

Qualitative evidence is collected too late

Case studies are often written at the end from memory.

A stronger workflow captures case stories, quotes, consent, source notes, and theme tags while implementation is still happening.

Reports are rebuilt manually

Each donor report becomes a copy-and-paste task.

Reporting templates, reusable tables, summary tabs, standard narrative sections, and review trackers can reduce this repeat work. Teams can also estimate the cost of slow reporting when reporting delays are taking staff away from programme work.

The team cannot prove where a claim came from

This weakens review, donor confidence, and internal learning.

Source links, quote IDs, reviewer notes, and evidence-to-claim mapping help the team trace claims back to source material.

Tools are chosen before the workflow is clear

Some teams try a platform before defining the workflow.

This usually creates more admin, not less. Map the process first. Then choose tools staff can maintain.

Simple tool stack options for NPO M&E workflows

This should not become a software review. Tool choice should follow the workflow.

Basic setup

For a smaller NPO, a basic setup might include:

- Google Forms or Microsoft Forms

- Google Sheets or Excel

- Google Drive or OneDrive

- simple folder naming

- a monthly reporting template

This can work well when the reporting needs are clear and the team is disciplined about using the same structure.

Fieldwork setup

For field teams or offline environments, the stack might include:

- KoboToolbox

- KoboCollect on Android

- spreadsheet exports

- a structured review sheet

- a source folder

KoboToolbox says both web forms and KoboCollect support offline data collection, with KoboCollect allowing enumerators to download blank forms, complete them offline, save drafts, finalise submissions, and send them later. (KoboToolbox Support)

Reporting workflow setup

For recurring donor reports, the system might include:

- a Google Sheets or Excel evidence database

- reporting templates in Google Docs or Word

- summary tabs

- review status fields

- source links

- document generation

- controlled AI summaries, where appropriate

AI can help with summaries, grouping, classification, and first-pass drafting, but only when the source structure, prompt, and review process are clear. Human review stays part of the workflow.

More advanced setup

Larger programmes may need:

- Airtable or a structured relational database

- dashboards

- role-based access

- automated reminders

- folder creation

- an evidence database

- an AI knowledge base around approved project material

The starting point is still the same: define what must be collected, where it goes, how it is checked, and how it becomes a report.

A practical example workflow

A youth development NPO runs after-school sessions across three sites. Each site captures attendance, session notes, referrals, participant feedback, and case stories. At the end of the quarter, the team needs to report to a funder on reach, completion, risks, outcomes, and beneficiary stories.

There are two ways this kind of workflow might develop.

Example 1: A structured reporting workflow without AI

In a simpler setup, the team could improve its reporting process by creating a clear route from field data to quarterly reports.

The workflow might look like this:

1. Each session is captured through a standard form.

2. Each submission goes into a structured database or spreadsheet.

3. The database assigns site, date, activity, indicator, and reporting period.

4. Case stories are captured with consent and source notes.

5. Summary tabs show outputs by site and month.

6. Review fields show which records have been checked.

7. The quarterly report template pulls from the summary tables.

8. Quotes and stories link back to the original source.

9. The team uses the same structure for the next quarter.
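The summary step in this workflow can be sketched as a small aggregation over session records. The record keys (`site`, `date`, `participants`, `review_status`) and the choice to count only reviewed records are assumptions for the example. A pivot table in a spreadsheet would give the same result.

```python
from collections import Counter


def outputs_by_site_and_month(records: list[dict]) -> dict[tuple[str, str], int]:
    """Total participants per (site, month), counting reviewed records only."""
    totals: Counter = Counter()
    for record in records:
        if record["review_status"] != "reviewed":
            continue  # unchecked records stay out of the summary
        month = record["date"][:7]  # "2024-04-09" -> "2024-04"
        totals[(record["site"], month)] += record["participants"]
    return dict(totals)


# Hypothetical session records from two sites.
records = [
    {"site": "Site A", "date": "2024-04-09", "participants": 18, "review_status": "reviewed"},
    {"site": "Site A", "date": "2024-04-16", "participants": 20, "review_status": "reviewed"},
    {"site": "Site B", "date": "2024-05-02", "participants": 15, "review_status": "pending"},
    {"site": "Site B", "date": "2024-05-09", "participants": 12, "review_status": "reviewed"},
]
for (site, month), total in outputs_by_site_and_month(records).items():
    print(site, month, total)
```

These totals count attendances, not unique people. Counting unique participants would need a participant ID on each record.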

This kind of workflow does not remove the need for judgement. It gives the team a cleaner base for review, analysis, and reporting.

For many NPOs, this is already a major improvement. The team can find records faster, reduce copy-and-paste reporting, and check where important claims came from.

Example 2: An AI-supported evidence and learning workflow

A more advanced setup could build on the same structure, but add an AI knowledge base around approved programme material.

In this version, the team still starts with structured source material. Attendance records, field notes, case studies, interview notes, reports, and review comments are collected and organised first. The AI layer is added only once the evidence base is clean enough to use.

The workflow might look like this:

1. Programme data is captured through standard forms, spreadsheets, and source folders.

2. Qualitative material is cleaned, tagged, and linked to source records.

3. Reviewed reports, case studies, findings, and learning notes are added to an approved knowledge base.

4. The AI system is limited to the organisation’s own verified material.

5. Staff can ask questions such as:

   - What themes appeared across this quarter’s case studies?

   - Which barriers were reported most often by field staff?

   - What evidence do we have for improved attendance?

   - Which findings should be considered in the next programme cycle?

6. The system returns source-linked answers that can be checked by the team.

7. Programme managers use those answers to support reporting, reflection, planning, and learning.

8. Human review remains part of the process before anything is used in a donor report or board update.
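Two ideas in this workflow can be shown without any AI at all: limiting answers to approved material, and returning the source ID with every result. The sketch below uses a plain keyword match as a stand-in for the retrieval step. It is not an AI system, and the record keys and the `"approved"` status value are assumptions for illustration.

```python
def find_evidence(terms: list[str], knowledge_base: list[dict]) -> list[dict]:
    """Return source-linked excerpts from approved items that mention any term."""
    lowered = [term.lower() for term in terms]
    hits = []
    for item in knowledge_base:
        if item["status"] != "approved":
            continue  # unreviewed material never reaches the answer
        text = item["text"].lower()
        if any(term in text for term in lowered):
            hits.append({"source_id": item["source_id"], "excerpt": item["text"]})
    return hits


# Hypothetical knowledge base entries.
knowledge_base = [
    {"source_id": "FN-031", "status": "approved",
     "text": "Attendance improved after sessions moved to 15:00."},
    {"source_id": "FN-044", "status": "draft",
     "text": "Attendance seems lower at Site C."},
    {"source_id": "CS-009", "status": "approved",
     "text": "Transport costs remain a barrier for caregivers."},
]
for hit in find_evidence(["attendance"], knowledge_base):
    print(hit["source_id"], "-", hit["excerpt"])
```

Whatever retrieval method sits in this step, the same two guardrails apply: filter to approved sources first, and keep the source ID attached so a person can check the answer.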

This is where M&E becomes more than reporting. The organisation is not only collecting information for compliance or funders. It is building a reusable evidence base that helps the team learn from its own work.

The important point is that AI should not be added to a messy reporting process and expected to fix it. The source material still needs structure, review, and traceability. When that foundation is in place, an AI-supported knowledge base can help teams find patterns, retrieve past evidence, prepare summaries, and ask better learning questions.

For larger evidence bases, it can also help to see how a large qualitative evidence base was structured for reporting.

When an NPO should get outside help

An NPO or programme team may need support when:

- reporting takes too long every month or quarter

- data is collected but not easy to use

- donor reports are rebuilt manually

- staff use different spreadsheets or templates

- qualitative evidence is hard to code or summarise

- the team cannot link report claims back to evidence

- the team wants to use AI but does not trust the outputs

- a deadline is close and the evidence base is messy

- the organisation wants a system staff can keep using after handover

Romanos helps teams build the working route from scattered programme information to structured evidence, clearer analysis, and reporting outputs.

Depending on the problem, that might mean helping the team design a cleaner programme evidence database, structure qualitative material, or create report-ready outputs from reviewed source material.

Practical checklist for an NPO M&E reporting workflow

Use this checklist to find gaps in your current workflow:

- Do we know what each programme is trying to change?

- Do we have a simple logic model?

- Are our indicators clear and collectable?

- Do we have standard forms for recurring activities?

- Does each record go into one structured database or tracker?

- Can we separate outputs from outcomes?

- Do we collect qualitative evidence during implementation, not only at the end?

- Can we trace report claims back to source material?

- Do we have a standard reporting template?

- Do we know who reviews the data before it goes into reports?

- Can the team reuse the workflow next month or quarter?

FAQ

What is the difference between monitoring and evaluation?

Monitoring is ongoing tracking during implementation. Evaluation is a deeper assessment of relevance, effectiveness, efficiency, impact, sustainability, and what should change next.

What should an NPO M&E system include?

A practical NPO M&E system should include clear indicators, standard data capture, a structured database or tracker, qualitative evidence, source links, review steps, and reporting templates.

Do South African NPOs have to submit annual reports?

Registered NPOs must submit annual reports within nine months after financial year-end. The South African Government says the annual report should consist of a narrative report and financial report. (Government of South Africa)

What tools can NPOs use for M&E data collection?

Smaller teams can use Google Forms, Microsoft Forms, Excel, or Google Sheets. Field teams may use tools such as KoboToolbox, which supports browser-based forms and KoboCollect, including offline data collection. (KoboToolbox Support)

Can AI help with M&E reporting?

Yes, but only when the source material is structured and the outputs are reviewed. AI can help summarise, group, classify, and draft first-pass notes, but human review remains necessary.

When should an NPO get help with its reporting workflow?

Get help when reports take too long, evidence is hard to trace, qualitative material is scattered, donor reports are rebuilt manually, or the team cannot turn programme records into clear reporting outputs.

M&E works best as a workflow

M&E becomes more useful when it is treated as a workflow, not a reporting burden.

South African NPOs do not always need a large M&E platform. Many teams first need a clearer route from activity records, field notes, case studies, forms, and spreadsheets into evidence that can be checked, summarised, and reported.

Once that structure exists, funder reports, annual narrative reports, board updates, and learning reviews become easier to prepare.

Need a clearer route from programme data to funder-ready reports?

I help NPOs, donor-funded teams, and programme organisations turn scattered forms, spreadsheets, field notes, case studies, and reports into structured evidence workflows and clearer reporting outputs.

Send a short project brief, or start by checking where your reporting workflow is slowing down.
