Research data synthesis support for qualitative evidence and report outputs

The research may already be collected, but the findings are not yet usable. I help turn interviews, case studies, field notes, survey comments, source documents, and focus group material into structured evidence that writers and project leads can work from.

The research is collected, but the findings are not yet usable.

This offer is for teams with qualitative material that is too large, uneven, or inconsistent to turn into findings quickly.

- Interviews, case studies, field notes, survey comments, or source documents are sitting in different formats.

- There is no consistent coding framework or thematic matrix.

- Report writers cannot easily see which evidence supports each finding.

- Useful quotes and examples are buried in long notes or transcripts.

- The team needs report-ready outputs without losing source links or review judgement.

Research teams that need structured synthesis support

The strongest fit is a research or evaluation team that has collected material and now needs a clearer route to findings and writing.

Research firms and evaluation teams

For teams handling qualitative datasets, mixed source material, or case study evidence that needs to become findings, tables, and report-ready summaries.

UNICEF and donor-funded contractors

For lead consultants and report teams working under donor standards, review deadlines, and source traceability expectations.

Lead consultants and report writers

For teams that need synthesis outputs they can draft from without rereading every source manually.

This offer sits under Data Synthesis

This page is a focused buying route for qualitative material. Related product pages cover broader evidence-to-report and AI retrieval needs.

Data Synthesis

The parent service for turning multiple evidence streams into themes, findings, quote banks, tables, and report-ready outputs.

View Data Synthesis

Report Writing

Useful when synthesis outputs also need to move into findings sections, recommendations, summaries, or draft report content.

View Report Writing

Database Architecture

Useful when the material first needs a source tracker, coding structure, data dictionary, or evidence table.

View Database Architecture

Evidence, Insight & Reporting Engine

Use this broader route when the project needs synthesis, recommendations, report sections, QA, and source checking in one workflow.

View the reporting engine

AI Knowledge Base Build

Use this when the synthesis workflow also needs controlled retrieval, custom GPT support, or source-linked querying.

View AI Knowledge Base Build

Common inputs for research synthesis

The source material can be messy. The work creates a structure that can survive review and support writing.

Qualitative material

- Interviews

- Focus groups

- Case studies

- Field notes

Open-ended responses

- Survey comments

- Stakeholder inputs

- Workshop notes

- Consultation material

Supporting source documents

- Reports

- Briefing notes

- Existing evidence tables

- Draft findings

Structured synthesis outputs for researchers and writers

The work can include a source tracker, coding framework, evidence table, quote bank, thematic matrix, cross-case synthesis, grouped themes, finding summaries, gap notes, recommendations support table, report-ready outputs, and QA notes.

AI can support parts of the process where appropriate, but it does not replace the research lead, subject-matter judgement, or review of claims against source material.

Bring multiple evidence streams into one coherent view.

Best when interviews, studies, notes, and submissions need to be compared, grouped, and turned into findings.

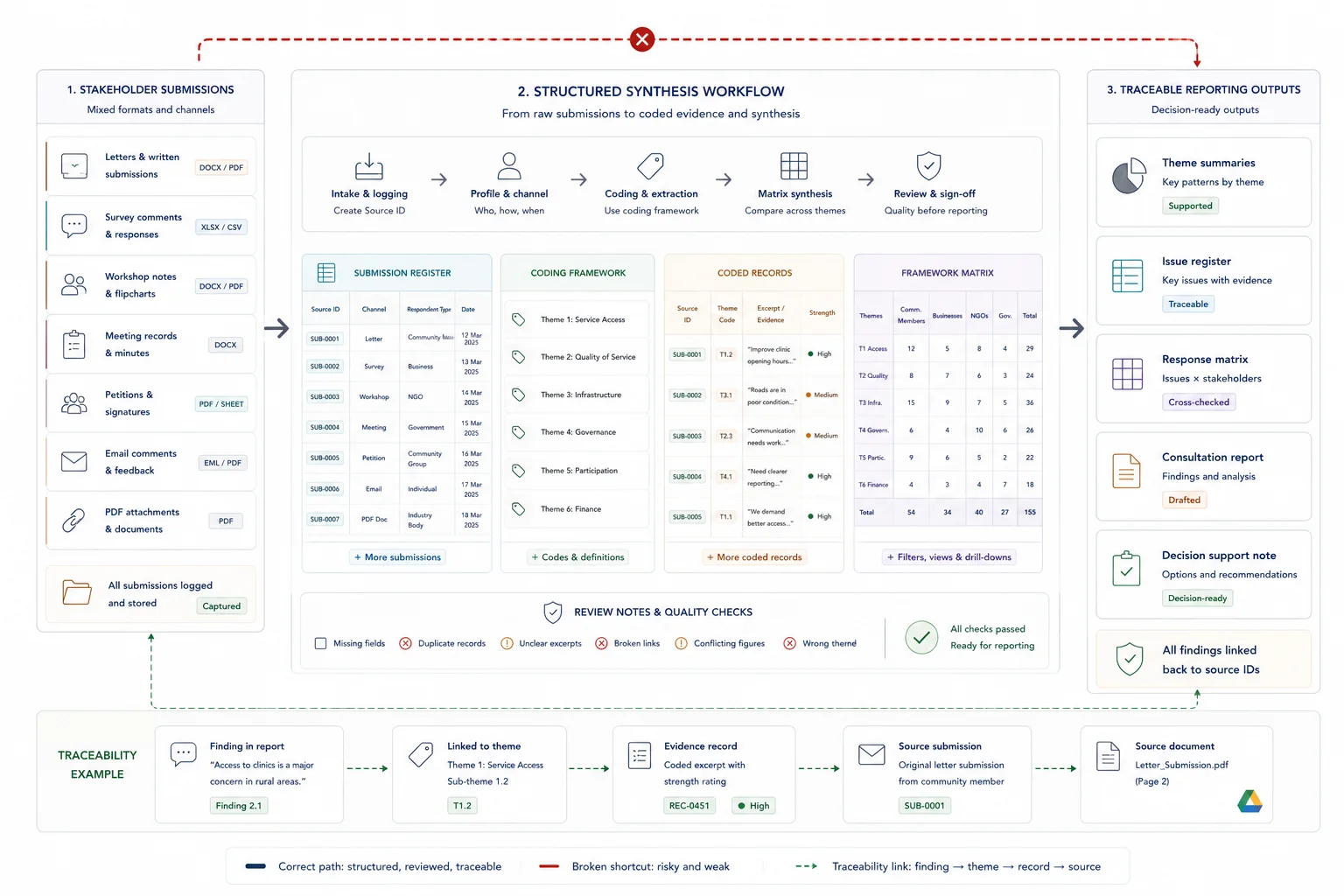

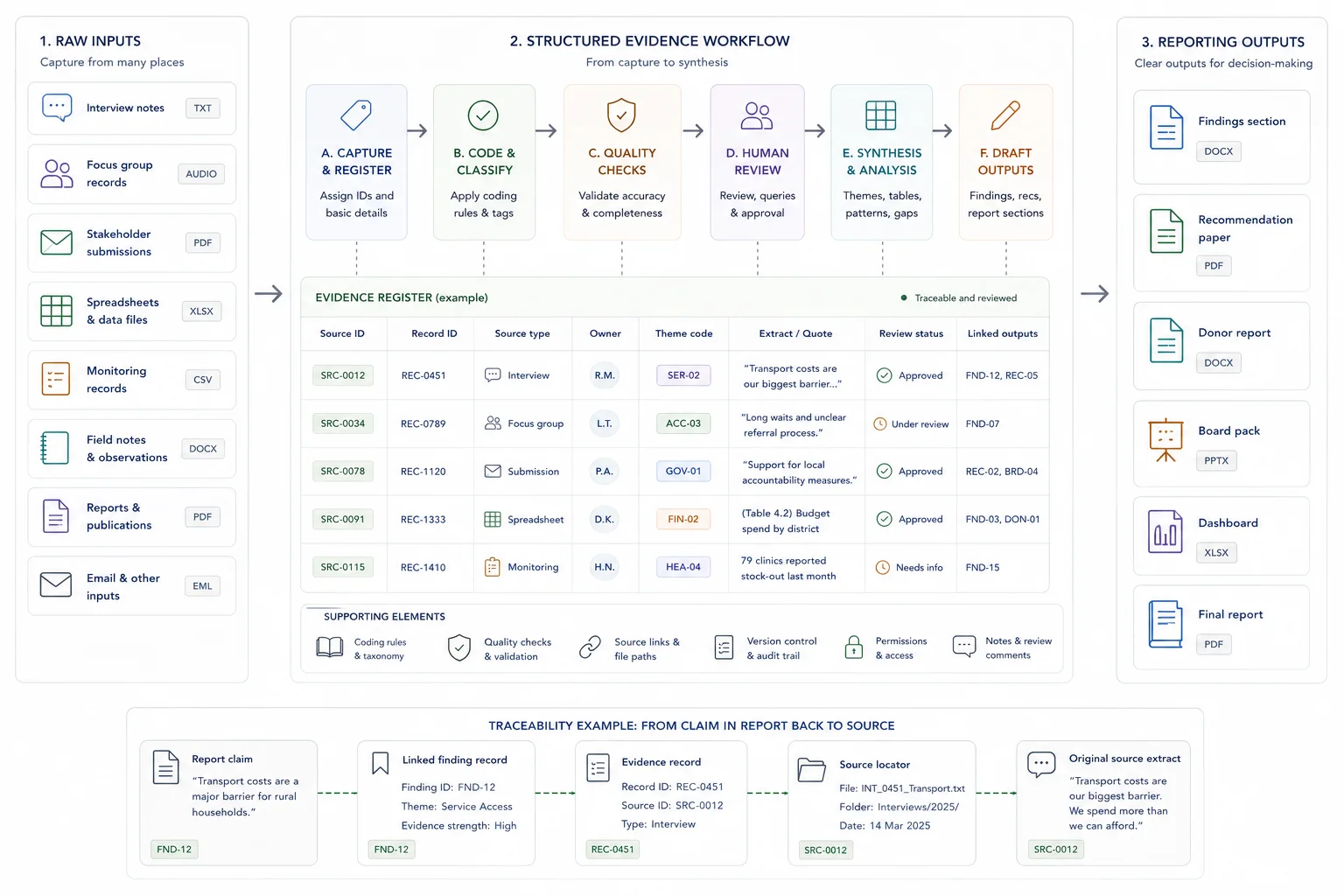

The workflow turns raw qualitative material into report-ready evidence

The structure is designed around the research questions, coding depth, reporting deadline, and outputs the writers need.

Review the questions and source material

I review the research questions, material types, rough volume, report outline, priority themes, and deadlines.

Set the coding and evidence structure

I create or refine the coding framework, source tracker, evidence table, quote fields, theme labels, and review notes.
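As a rough illustration of what a coding and evidence structure can look like in practice, here is a minimal Python sketch. The field names, the two theme codes, and the `validate` helper are assumptions made up for this example. A real framework would be built around the project's own research questions and tools.

```python
from dataclasses import dataclass

# Hypothetical coding framework: theme code -> working definition.
CODING_FRAMEWORK = {
    "access": "Barriers and enablers to reaching the service",
    "staffing": "Workforce capacity, training, and turnover",
}


@dataclass
class EvidenceRow:
    """One coded excerpt in an illustrative evidence table."""
    source_id: str          # key into the source tracker, e.g. "INT-03"
    source_type: str        # e.g. "interview", "focus_group", "field_note"
    theme: str              # a code from the coding framework
    excerpt: str            # summarised evidence from the source
    quote: str = ""         # verbatim quote, if one is worth keeping
    review_note: str = ""   # reviewer comments or open questions


def validate(row, framework=CODING_FRAMEWORK):
    """Return a list of problems so gaps are flagged rather than hidden."""
    problems = []
    if not row.source_id.strip():
        problems.append("missing source_id")
    if row.theme not in framework:
        problems.append(f"unknown theme: {row.theme}")
    return problems
```

A row that passes `validate` carries a source ID and a theme the team has already defined, which is the minimum a writer needs to trace it later.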

Synthesize themes and findings

I organise the material by theme, case, location, group, question, or issue, then prepare finding summaries, quote banks, and comparison tables.
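To show the idea of organising coded material by theme and by one other dimension, the sketch below builds a small thematic matrix in Python. The rows, source IDs, and the `thematic_matrix` function are invented for illustration. The same grouping could be done in a spreadsheet or a qualitative analysis tool.

```python
from collections import defaultdict

# Invented sample rows; a real project would load these from its evidence table.
coded_rows = [
    {"source_id": "INT-01", "location": "North", "theme": "access"},
    {"source_id": "FGD-02", "location": "North", "theme": "access"},
    {"source_id": "INT-04", "location": "South", "theme": "access"},
    {"source_id": "INT-04", "location": "South", "theme": "staffing"},
]


def thematic_matrix(rows, column_key):
    """Group source IDs by theme (rows) and by column_key (columns)."""
    matrix = defaultdict(lambda: defaultdict(list))
    for row in rows:
        matrix[row["theme"]][row[column_key]].append(row["source_id"])
    # Convert to plain dicts so the result is easy to print or export.
    return {theme: dict(columns) for theme, columns in matrix.items()}


matrix = thematic_matrix(coded_rows, "location")
```

Changing `column_key` to a case, respondent group, or research question gives a different cut of the same evidence without recoding it.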

Prepare outputs for writing and review

I prepare report-ready tables, draft findings support, recommendations notes, gap notes, QA notes, and handover material.

The deliverables help the team move from collected material to usable findings

The exact mix depends on how much material exists, how deeply it must be coded, and what the report needs to contain.

- Source tracker

- Coding framework

- Evidence table

- Quote bank

- Thematic matrix

- Cross-case synthesis

- Finding summaries

- Grouped themes and gap notes

- Recommendations support table

- Report-ready outputs

- QA and review notes

Where synthesis support fits

This is a focused route for teams that already have the data and need help making it usable for analysis and writing.

UNICEF and donor-funded reports

Turn case studies, interviews, notes, and supporting documents into source-linked findings, tables, and draft-ready outputs.

Evaluation and situation analysis work

Create a structured evidence base that helps teams compare themes, service issues, respondent groups, locations, and recommendations.

Qualitative research overflow

Support research teams when there is too much material for the current team to code, compare, and summarise within the deadline.

How AI can support synthesis without replacing judgement

AI can reduce some manual handling, but the synthesis still needs coding discipline, source links, and review.

First-pass organisation

AI can help with first-pass summaries, classification, comparison, and retrieval when the source IDs and coding framework are clear.

Human review remains central

Claims, findings, quotes, gaps, and recommendations should still be reviewed by people who understand the research context.

Source traceability is built in

The workflow keeps source IDs, quote fields, theme labels, and review notes visible so writers can check where a finding came from.
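One way to picture source traceability is as a simple check that every finding points back to sources in the tracker. The Python sketch below assumes a hypothetical tracker and findings list. The IDs, labels, and the `trace_gaps` function are illustrative and not a description of any specific tool.

```python
# Invented source tracker: source ID -> short description.
source_tracker = {
    "INT-01": "Interview, district officer",
    "FGD-02": "Focus group, caregivers",
}

# Invented findings, each listing the source IDs that support it.
findings = [
    {"id": "F1", "source_ids": ["INT-01", "FGD-02"]},
    {"id": "F2", "source_ids": ["INT-09"]},
    {"id": "F3", "source_ids": []},
]


def trace_gaps(findings, tracker):
    """Return finding ID -> problem for findings that cannot be traced."""
    gaps = {}
    for finding in findings:
        ids = finding["source_ids"]
        if not ids:
            gaps[finding["id"]] = "no supporting source listed"
            continue
        unknown = [sid for sid in ids if sid not in tracker]
        if unknown:
            gaps[finding["id"]] = "unknown source IDs: " + ", ".join(unknown)
    return gaps


gaps = trace_gaps(findings, source_tracker)
```

A check like this only confirms that links exist. Whether a source actually supports the claim still needs human review.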

What the client needs to provide

A short scoping call is easier when the team can show the material and the output it needs to support.

Research material

Interviews, focus groups, field notes, case studies, survey comments, workshop notes, source documents, and existing coding notes.

Research and reporting context

Research questions, methodology requirements, report outline, donor or client standards, priority themes, and review comments.

Delivery constraints

Deadline, review process, required output formats, tool environment, confidentiality requirements, and team roles.

Related case studies

These case studies show research synthesis under donor-funded and public-sector reporting conditions, with evidence structures that support writing and review.

Use these tools if you need to diagnose the workflow first

The strongest calculator fit depends on where the pressure sits: search, capacity, traceability, reporting drag, or knowledge-base value.

Useful guides before you scope the work

These articles explain the thinking behind the service and the adjacent workflow problems.

Questions before you enquire

Do you replace the research lead?

No. I support the evidence structure, synthesis workflow, findings material, and report-ready outputs. The research lead and subject-matter experts remain responsible for judgement and sign-off.

Can you work from messy notes?

Yes, but the quality of the output depends on the quality and completeness of the notes. Messy material can be structured, but missing information should be flagged rather than hidden.

Can this feed into report writing?

Yes. The synthesis can be designed to produce finding summaries, tables, quote banks, recommendations support, and draft-ready sections for report writers.

Can AI help with qualitative synthesis?

AI can support first-pass organisation, summaries, comparison, and retrieval where appropriate. It still needs coding rules, source traceability, and human review.

What should I send before a scoping call?

Send the source types, rough volume, research questions, reporting deadline, current coding structure if one exists, and the output the synthesis needs to support.

Send the research material, questions, and output needed

If the team has collected interviews, case studies, notes, or documents but needs help turning them into themes, findings, tables, and report-ready outputs, send the source types, volume, deadline, and report context.