- The source material is too scattered for reliable retrieval.

- The AI tool does not know which evidence is approved or current.

- Users ask broad questions and get answers that are hard to check.

- Prompts and output formats change from person to person.

- Drafting support is useful, but claims still need source checks and review.

Custom AI building for evidence, documents, and internal retrieval

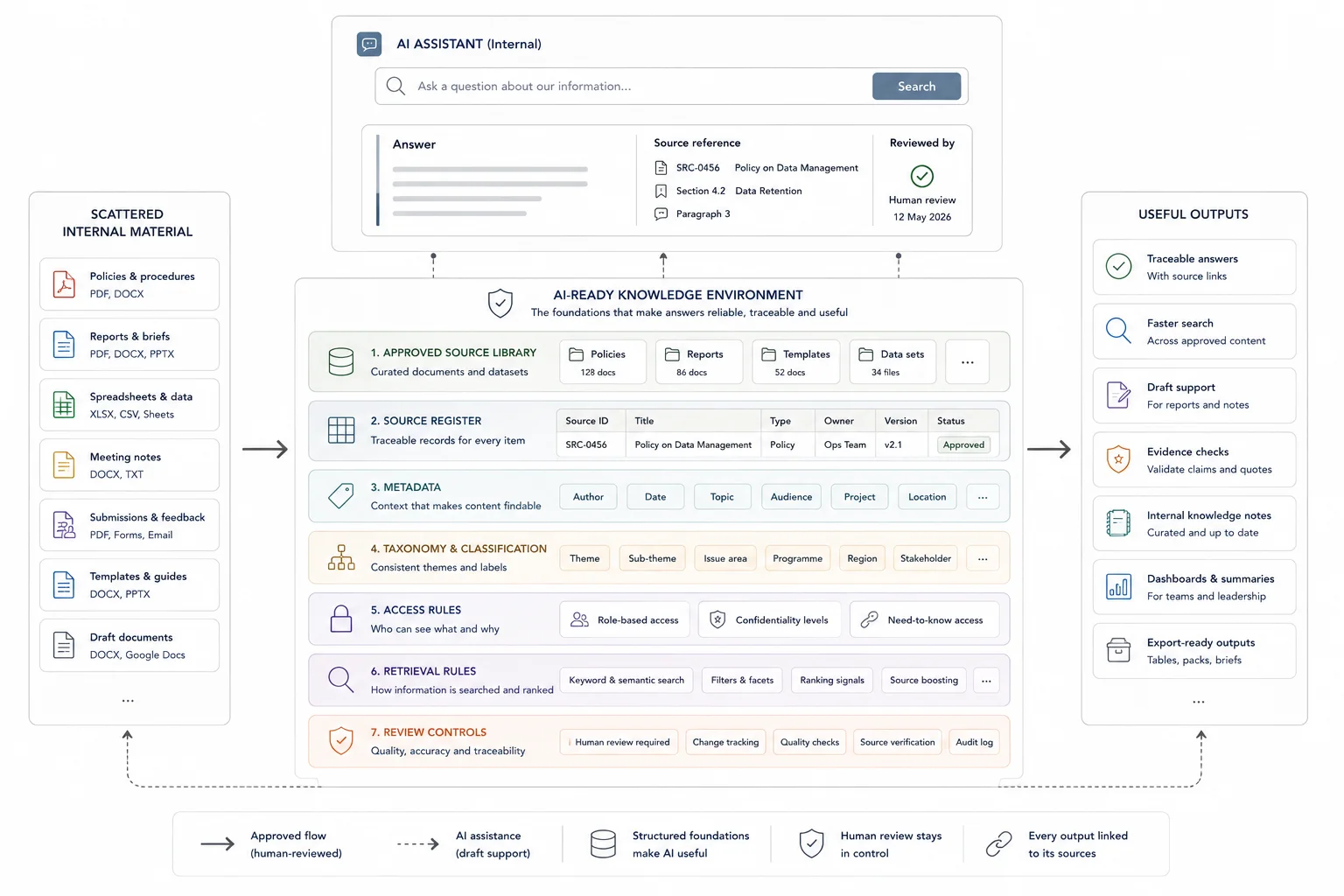

AI is only useful when the source base is clear and the review route is visible. I build custom AI assistants, retrieval workflows, and prompt systems around approved material so teams can find, compare, summarise, and draft with more control.

The team wants AI, but does not trust the answers yet

Most AI problems are source and workflow problems first. The build needs boundaries, traceability, prompt discipline, and human review.

An AI layer tied to the team's real information and review process

The build may include a custom GPT, AI knowledge base, prompt library, retrieval workflow, spreadsheet-connected assistant, or controlled drafting support. The aim is not to let AI guess. The aim is to help the team work faster with its own approved information.

For higher-risk work, the system can include guardrails around source use, answer limits, citation expectations, review steps, and what the AI should refuse to answer.

Connect your document environment to practical retrieval.

Best when teams need fast answers, internal search, and query workflows grounded in their own records and knowledge.

Custom AI work starts by defining what the tool is allowed to know

The source base, user questions, output formats, and review rules need to be settled before the tool itself is chosen.

Define the task and source boundaries

I clarify what users need to ask, which sources are approved, which material is excluded, and what the AI should produce.

Prepare the knowledge base

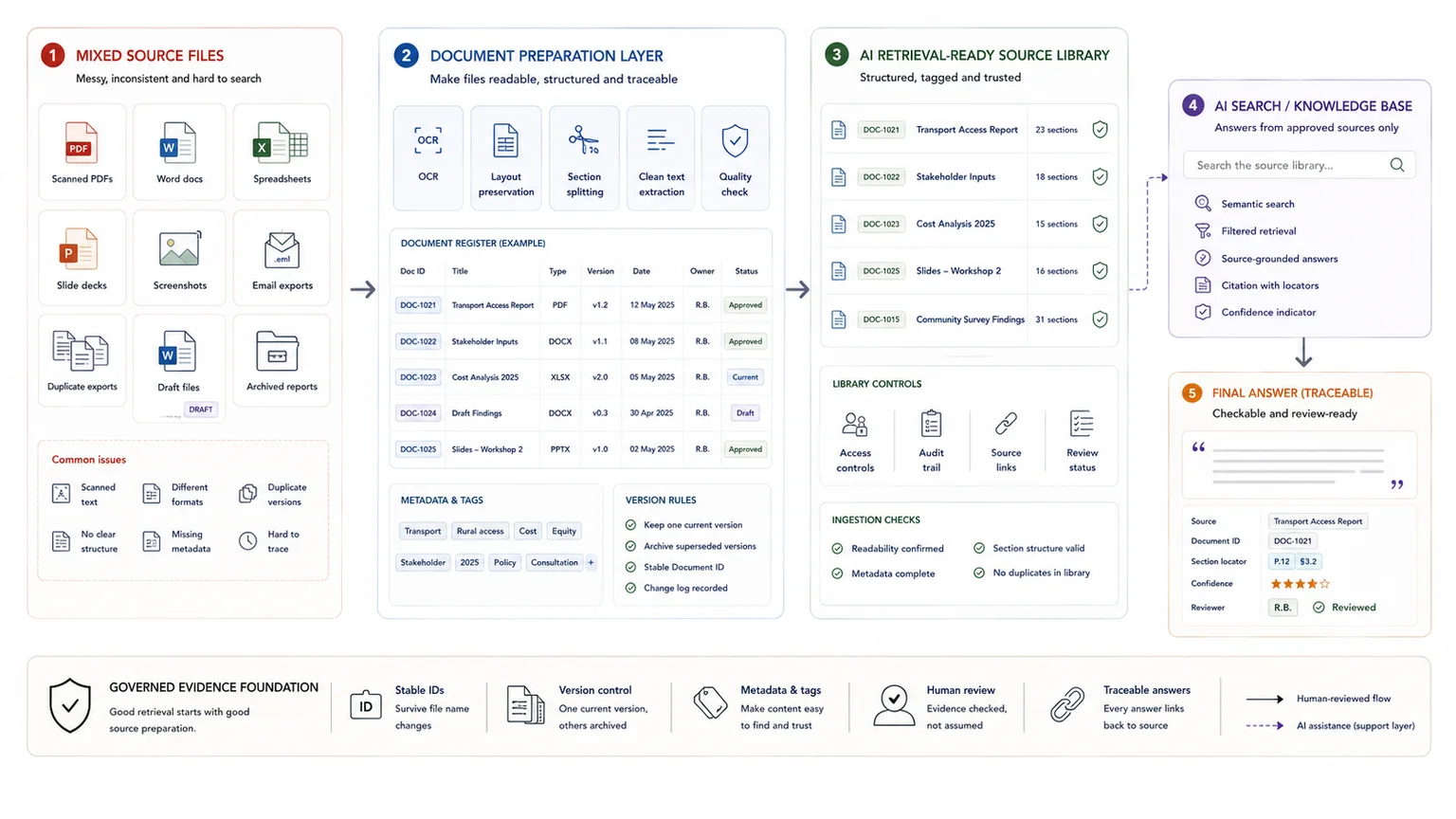

I organise source documents, evidence tables, metadata, and retrieval rules so the AI has a cleaner working environment.

Build prompts and workflows

I create prompts, output templates, retrieval instructions, summary logic, and checking steps around the team's actual workflow.

Test, document, and hand over

I test with real questions, refine weak outputs, document usage rules, and show the team where human review stays in the process.

The output is a controlled AI workflow, not a generic chatbot

The build is scoped around the team's source material, repeat questions, review burden, and reporting outputs.

- Custom GPT or AI assistant

- AI knowledge base structure

- Source library preparation

- Prompt library and output templates

- Retrieval, summary, classification, and drafting prompts

- Evidence checking workflow

- Source traceability and answer-boundary rules

- Review and sign-off process

- User instructions, SOP, training guide, or handover session

Custom AI works best when it sits on top of a clear evidence structure

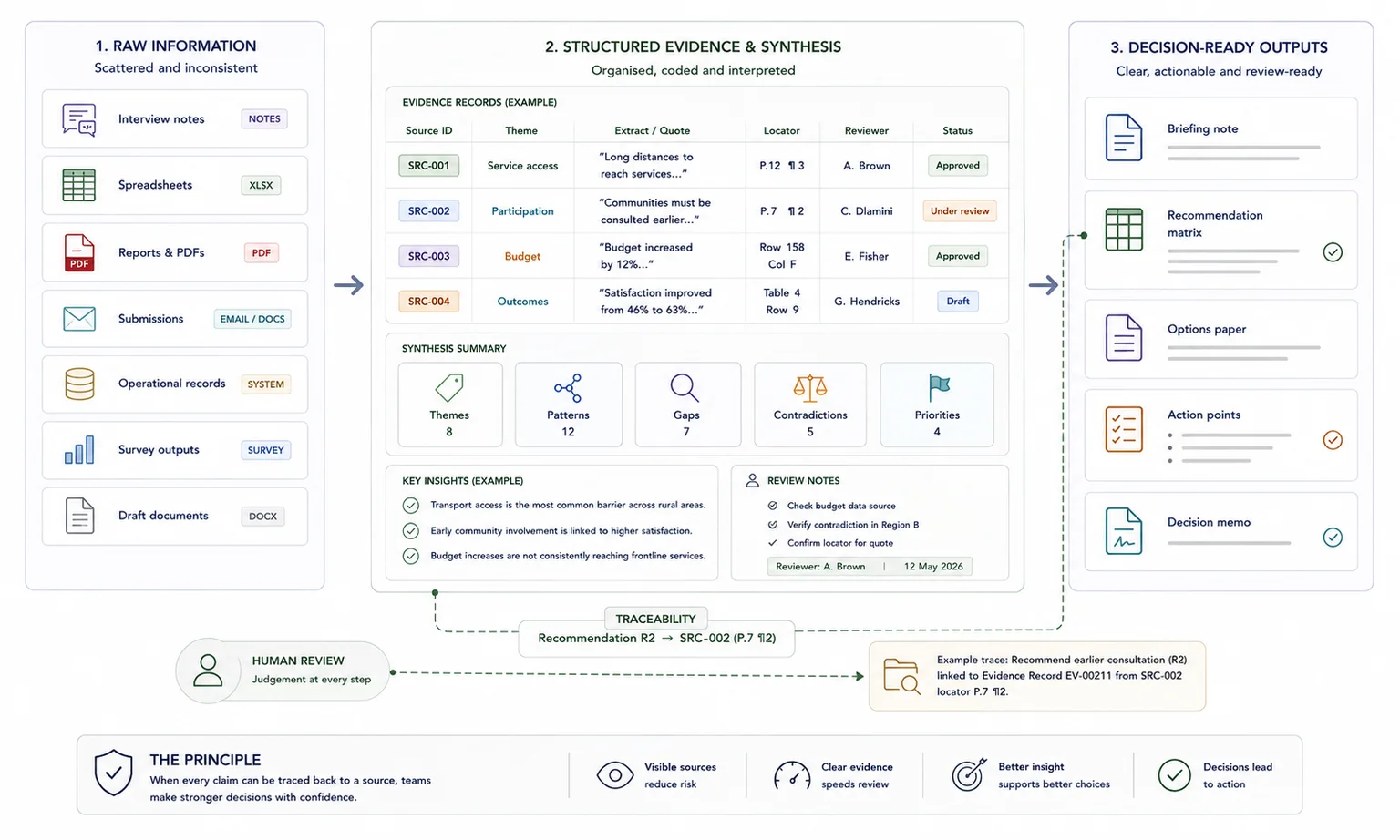

AI can help with retrieval, first-pass summaries, classification, comparison, and drafting, but it still needs a source base people can check.

Research and reporting teams

Create a query layer for coded evidence, case studies, interviews, or report source libraries.

Internal knowledge environments

Help teams retrieve answers from approved documents, reports, policies, and operating records.

Public submissions and review workflows

Use AI carefully to help classify, compare, summarise, and retrieve material while preserving human review.

Related case studies

These examples show AI as a controlled layer on top of structured evidence, not as a replacement for analysis or review.

Use these tools if you need to diagnose the workflow first

Which calculator fits best depends on where the pressure sits: search, capacity, traceability, reporting drag, or knowledge-base value.

Useful guides before you scope the work

These articles explain the thinking behind the service and the adjacent workflow problems.

Questions before you enquire

Do you build AI tools that make final decisions?

No. The safer use is retrieval, first-pass summaries, comparison, classification, and drafting support. Human review stays in the workflow for judgement, claims, and sign-off.

Can you build AI on top of messy documents?

Sometimes, but the first task is usually source preparation. If the documents are duplicated, outdated, badly scanned, or missing metadata, the AI layer will be weaker.

What tools can the build use?

The format depends on the client's environment. It may be a custom GPT, document retrieval system, prompt library, spreadsheet-connected workflow, or another practical setup the team can maintain.

How do you reduce hallucination risk?

By narrowing the source base, setting retrieval rules, using structured prompts, asking for source references where useful, and keeping human review in place for outputs that matter.

What should I send before we scope this?

Send the source types, example documents, questions users need to ask, current tools, output formats, and any privacy or review requirements.

Send the source base and the questions users need answered

If your team wants AI support but needs clear boundaries, source control, and review rules, send the documents involved, current tool setup, repeat questions, and intended outputs.