Data synthesis for submissions, interviews, case studies, and reports

Large evidence sets rarely fail because there is no information. They fail because the team cannot see what the material says as a whole. I turn scattered inputs into clear themes, findings, tables, and synthesis packs that writers and decision-makers can use.

The material has been collected, but the pattern is still hard to see

- There are too many notes, submissions, interviews, or case studies to review manually within the deadline.

- Repeated themes appear across sources, but the team has no clean comparison structure.

- Findings are not clearly linked back to source material.

- Coding varies across reviewers or workstreams.

- Report writers do not know which evidence is strong enough to use.

Data synthesis sits between raw material and the final output. It groups, compares, codes, and interprets evidence without losing source context.

A synthesis layer that turns raw inputs into usable findings

The work can include a coding framework, thematic matrix, source comparison table, evidence table, quote bank, claim table, issue tracker, gap analysis, and synthesis pack for writers or decision-makers.

The goal is not to flatten the evidence into a neat summary. The goal is to preserve enough source context that themes, tensions, gaps, and recommendations can be checked.

Bring multiple evidence streams into one coherent view.

Best when interviews, studies, notes, and submissions need to be compared, grouped, and turned into findings.

Synthesis starts by defining the questions the material must answer

The method should fit the source types, audience, deadline, and final report or decision need.

Set the synthesis frame

I clarify the research questions, themes, source types, coding needs, and final output the synthesis must support.

Build the coding structure

I create or refine codes, themes, source IDs, locators, and comparison fields so evidence can be reviewed consistently.

Group and compare the material

I chart the source material into matrices, evidence tables, quote banks, and summary views that make patterns easier to see.

Prepare findings for use

I turn the synthesis into findings, ranked issues, gap notes, draft sections, or recommendation support tables.
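As a rough illustration of the coding and charting steps above, here is a minimal Python sketch of coded excerpts being grouped into a thematic matrix. The field names, source IDs, themes, and excerpts are all invented for the example; a real project would use the fields and codes agreed in the synthesis frame.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedExcerpt:
    source_id: str  # hypothetical ID scheme, e.g. "INT-01" for an interview
    locator: str    # where the excerpt sits in the source (page, paragraph)
    theme: str      # code or theme applied by the reviewer
    text: str       # the excerpt itself


def build_thematic_matrix(excerpts):
    """Group excerpts into {theme: {source_id: [excerpt, ...]}}."""
    matrix = defaultdict(lambda: defaultdict(list))
    for ex in excerpts:
        matrix[ex.theme][ex.source_id].append(ex)
    # Return plain dicts so a lookup on a missing theme raises KeyError
    # instead of silently creating an empty entry.
    return {theme: dict(by_source) for theme, by_source in matrix.items()}


def theme_coverage(matrix):
    """Count how many distinct sources raise each theme."""
    return {theme: len(by_source) for theme, by_source in matrix.items()}


# Invented sample data, for illustration only.
excerpts = [
    CodedExcerpt("INT-01", "p. 2", "access", "Clinic hours clash with shift work."),
    CodedExcerpt("INT-02", "p. 5", "access", "The nearest office is two hours away."),
    CodedExcerpt("SUB-07", "para. 4", "cost", "Fees were raised without notice."),
    CodedExcerpt("INT-01", "p. 6", "cost", "Transport costs more than the service."),
    CodedExcerpt("INT-01", "p. 9", "access", "Online booking needs a smartphone."),
]

matrix = build_thematic_matrix(excerpts)
coverage = theme_coverage(matrix)

for theme, by_source in matrix.items():
    print(f"{theme}: raised by {coverage[theme]} source(s)")
    for source_id, items in by_source.items():
        for ex in items:
            print(f"  [{source_id}, {ex.locator}] {ex.text}")
```

Because every excerpt carries its source ID and locator, any count or theme summary built from the matrix can be traced back to the passage it came from.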

The output is a synthesis pack the team can write or decide from

The deliverables depend on whether the work supports a report, policy review, evaluation, donor output, or internal decision.

- Synthesis plan

- Coding framework

- Thematic matrix

- Source comparison table

- Evidence table and quote bank

- Claim table and issue tracker

- Theme summaries and gap analysis

- Ranked findings and pattern summaries

- Draft findings sections or recommendation support table

Synthesis is the bridge between evidence storage and written output

It can stand alone when the source material is ready, or sit inside a larger evidence, AI, and reporting workflow.

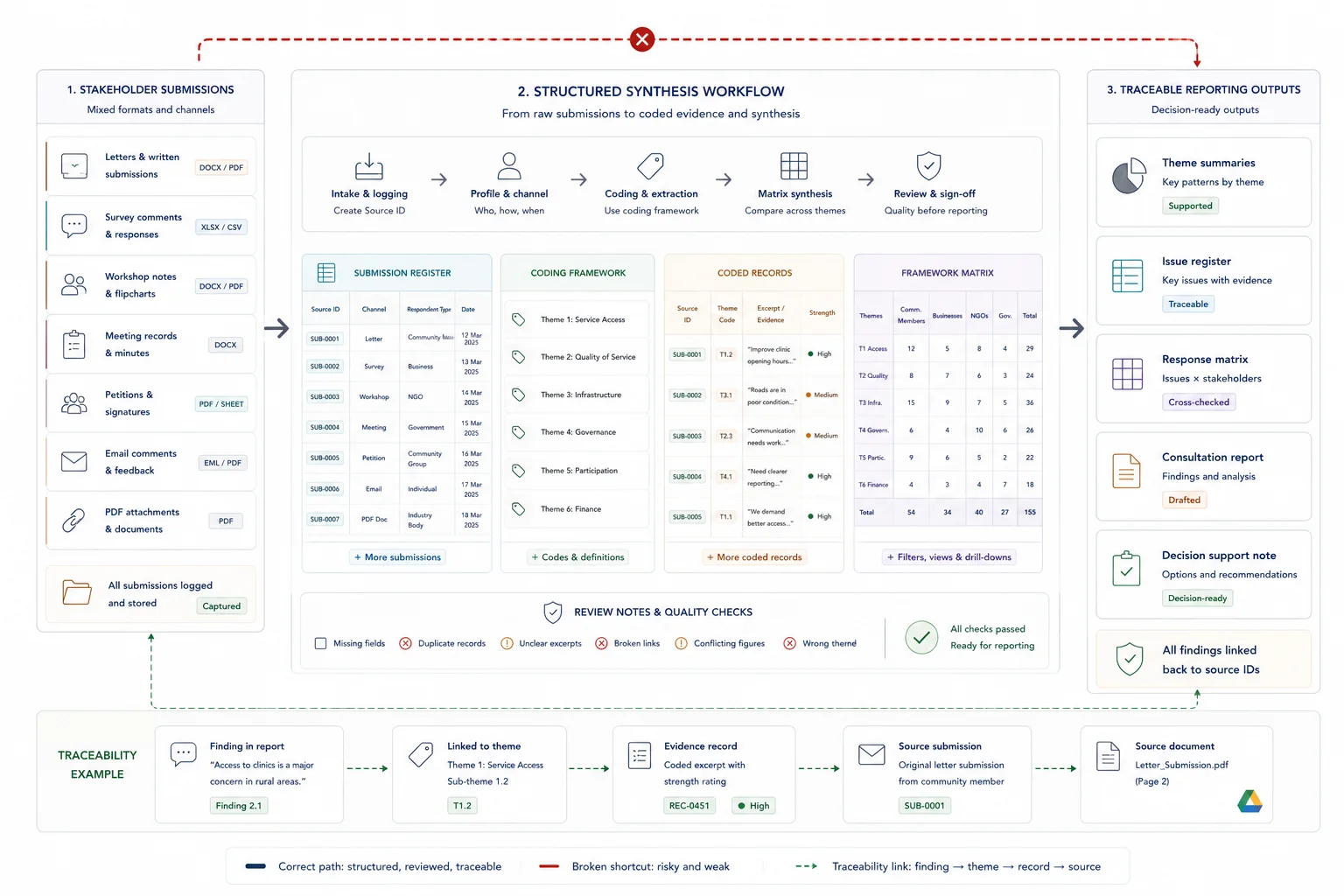

Public submissions and consultation responses

Code, compare, and summarise submissions while keeping source IDs, respondent groups, themes, and review notes visible.

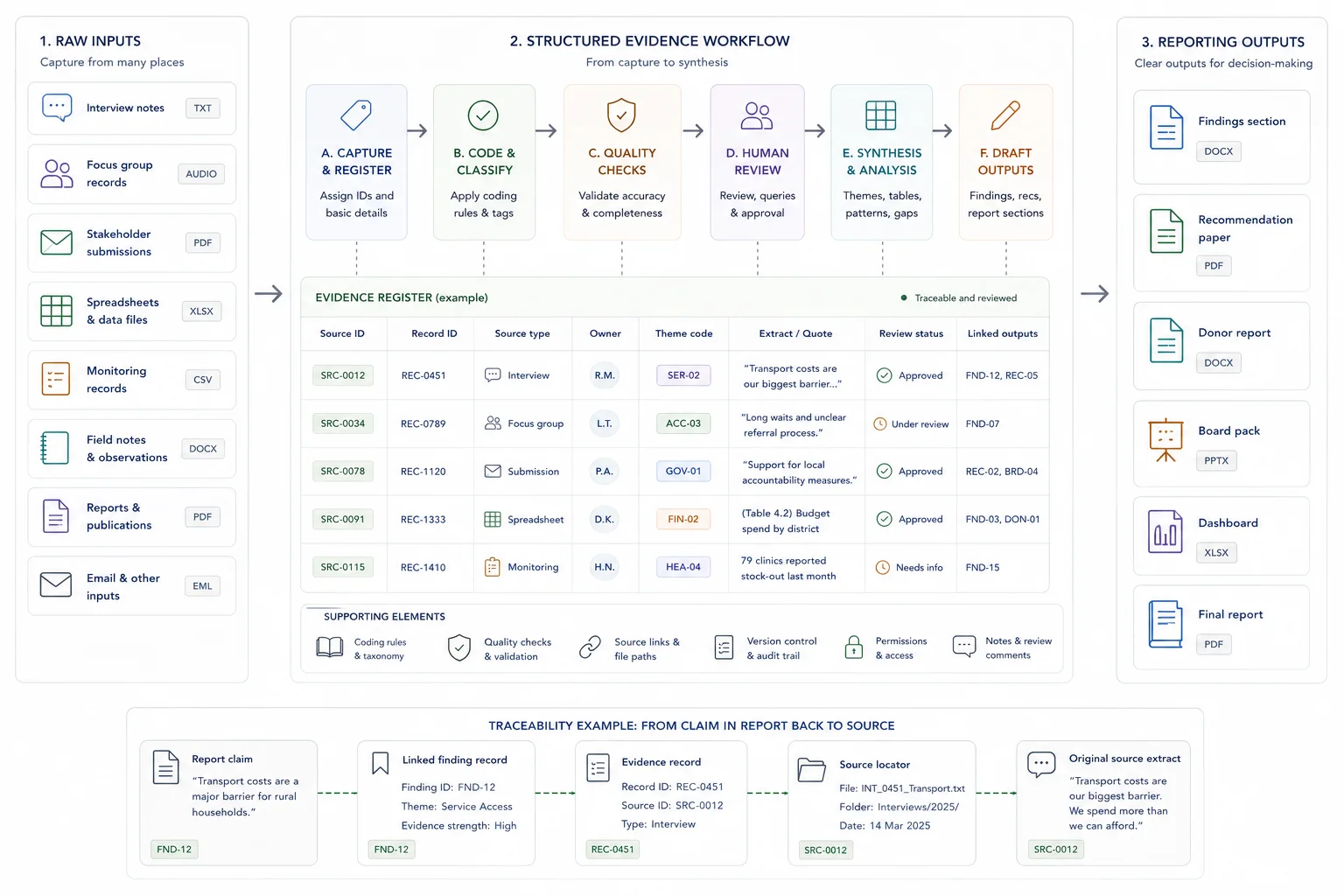

Research and evaluation material

Turn interviews, focus groups, case studies, workshop notes, and fieldwork material into source-linked findings.

Report-ready evidence packs

Prepare thematic summaries, finding sheets, quote banks, and tables that feed directly into report drafting.

Related case studies

These case studies show synthesis work moving from raw evidence to themes, findings, drafting support, and review-ready outputs.

Use these tools if you need to diagnose the workflow first

The best-fit calculator depends on where the pressure sits: search, capacity, traceability, reporting drag, or knowledge-base value.

Useful guides before you scope the work

These articles explain the thinking behind the service and the adjacent workflow problems.

Questions before you enquire

Is data synthesis the same as summarising?

No. Summarising compresses material. Synthesis sorts, compares, groups, interprets, and shows what the material says across sources.

Can you work with qualitative material?

Yes. This service is a strong fit for interviews, focus groups, public submissions, case studies, open-ended survey responses, field notes, workshop notes, and mixed document sets.

What if the material is public submissions or consultation feedback?

Use the Public Submission Analysis System when submissions, stakeholder comments, review inputs, issue matrices, or response notes need a dedicated workflow.

Can synthesis outputs feed straight into a report?

Yes. In report projects, the synthesis is usually designed so findings, tables, quotes, and issue summaries can move into drafting without losing source traceability.

What if we do not have a coding framework yet?

I can help build one from the project questions, source material, audience, and reporting need.

What should I send before a synthesis scoping call?

Send the source types, rough volume, research questions, deadlines, any existing codes or themes, and the final output the synthesis needs to support.

Send the source volume, questions, and output needed

If the team is sitting on interviews, submissions, case studies, notes, or mixed evidence and needs clearer findings, send the source types, deadline, review setup, and final report or decision need.