- Submissions arrive as PDFs, Word files, emails, spreadsheets, form exports, and online comments.

- The same point appears in submissions from different stakeholders, but the team has no clean way to compare the versions.

- Drafters cannot quickly find the evidence behind themes, claims, or response notes.

- Review comments risk getting lost in tracked changes, inboxes, or separate spreadsheets.

- The process is time-sensitive, public-facing, or politically sensitive enough to need a stronger evidence trail.

Public submission analysis system for consultation and policy teams

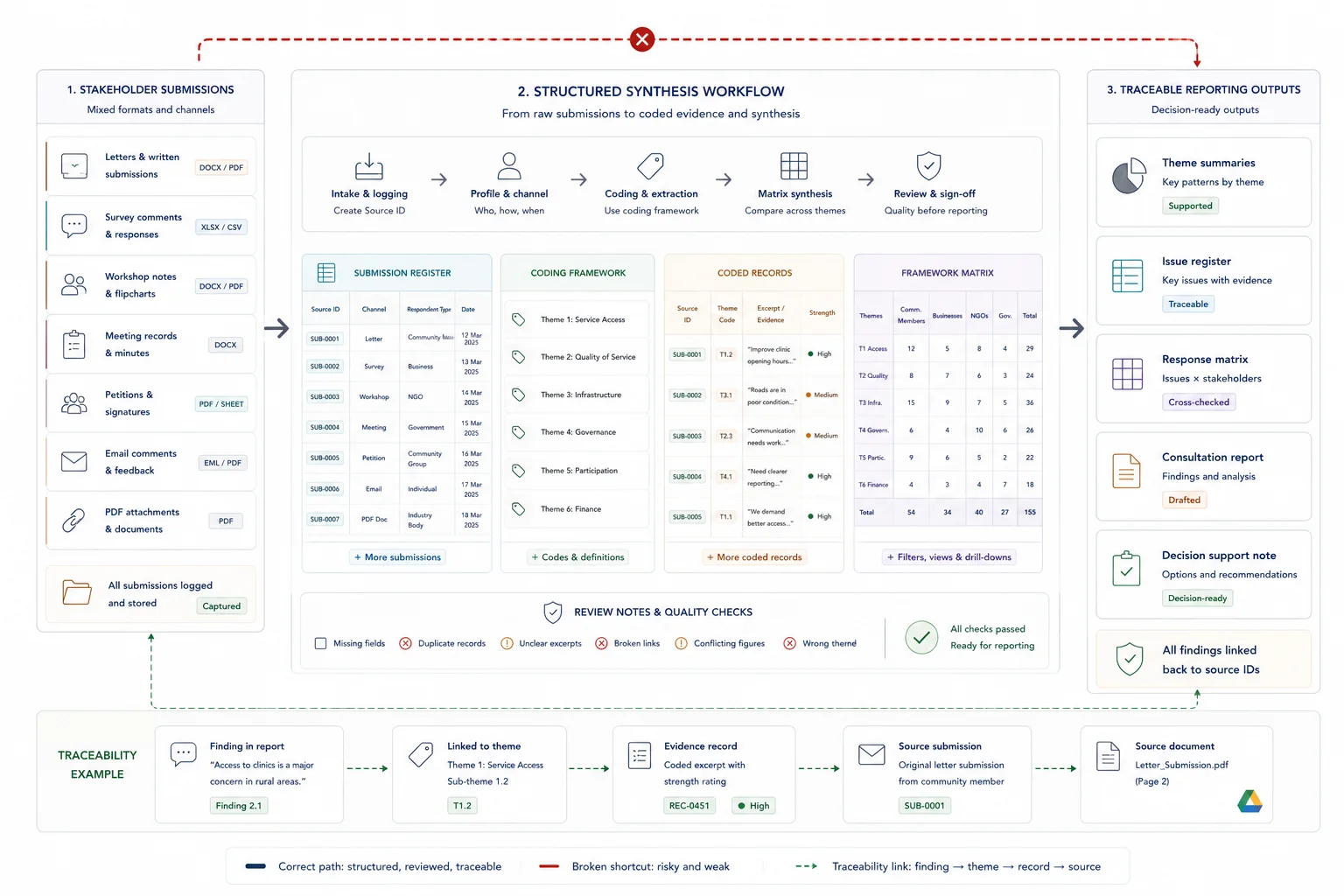

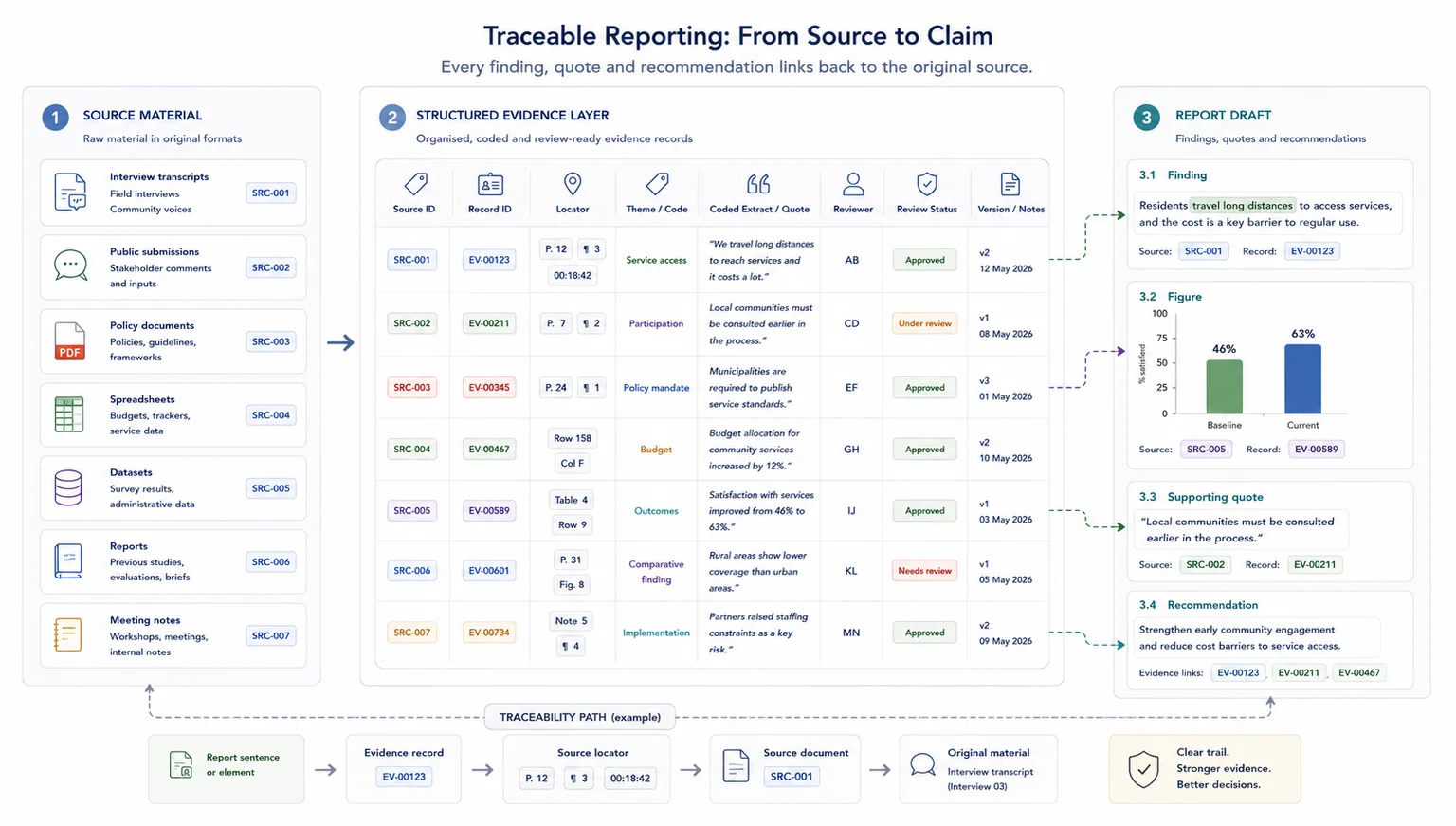

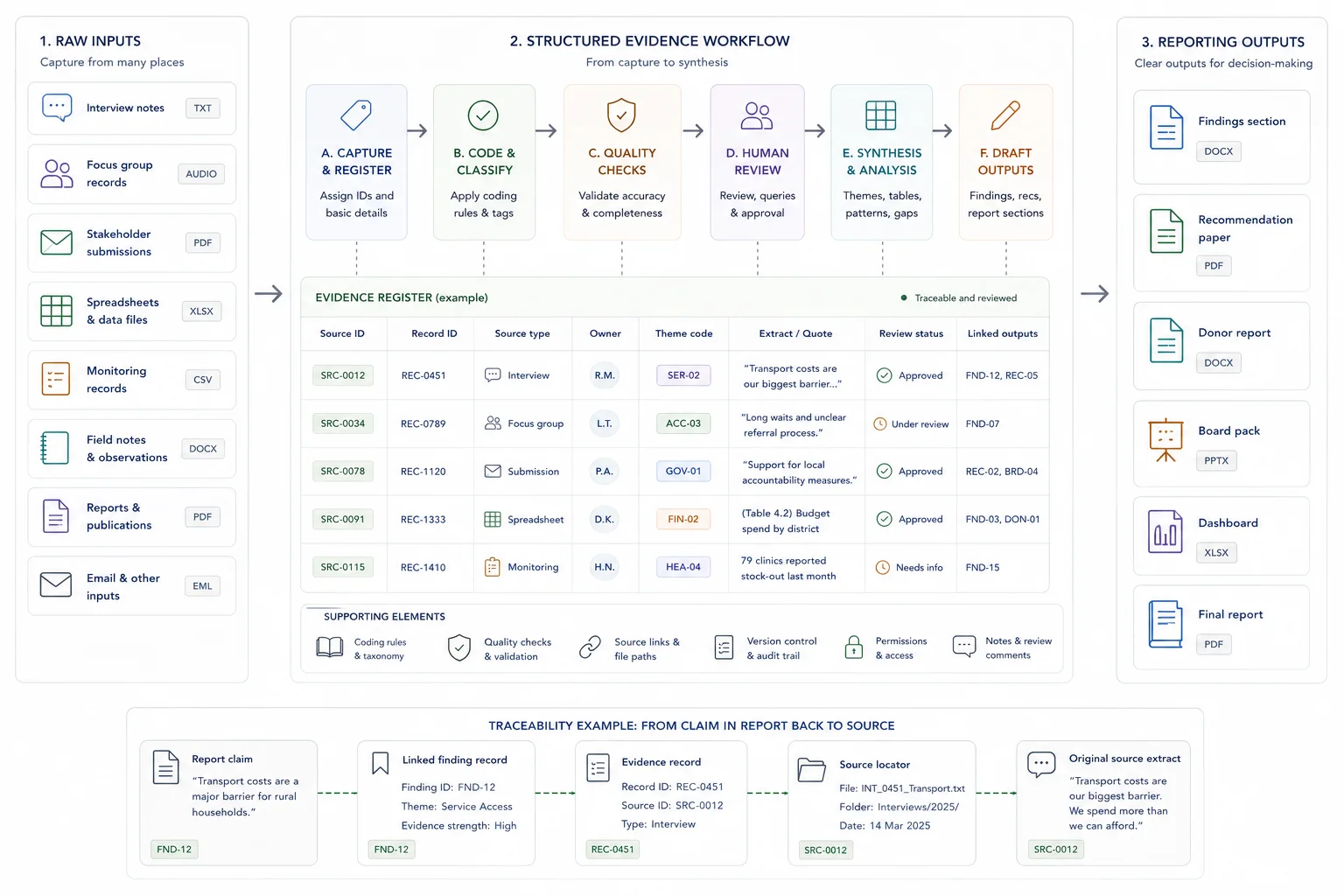

Public submissions often arrive in mixed formats, with repeated points and weak source tracking, and they have to be reviewed under time pressure. I build the system that turns those comments into coded evidence, issue summaries, synthesis outputs, and drafting material that can be checked.

The team needs to show how public input was reviewed, not only summarised

A good submission workflow keeps each useful point connected to its source, theme, review status, and drafting use.

A working route from submission intake to coded evidence, synthesis, and review

The system can start with a submission database, source ID structure, coding schema, stakeholder fields, issue matrix, synthesis tables, and review dashboard. Where useful, AI can help with first-pass summaries, classification, and retrieval, but human review remains in the workflow.

The purpose is to give policy, public-sector, legal, civic, and consultation teams a clearer way to handle input before it feeds a report, response matrix, white paper, briefing note, or final review process.

Bring multiple evidence streams into one coherent view.

Best when interviews, studies, notes, and submissions need to be compared, grouped, and turned into findings.

Submission analysis fixes the source route before coding begins

The system is scoped around the formats, consultation questions, review criteria, deadline, and output the team needs.

Review the material and requirements

I look at the submission formats, consultation questions, stakeholder groups, review criteria, and required outputs before designing the workflow.

Design the source and coding structure

I set up source IDs, metadata fields, stakeholder categories, theme codes, issue fields, quote tracking, review status, and QA checks.
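
As a rough illustration of what that structure can look like, here is a minimal Python sketch. Every name in it (`Source`, `CodedPoint`, `ReviewStatus`, `make_source_id`, `qa_problems`, the `SUB-0042` ID format, and the three status values) is a hypothetical choice for this example, not a fixed schema; the real fields are agreed during scoping.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class ReviewStatus(Enum):
    # Hypothetical status values; a real project defines its own.
    UNREVIEWED = "unreviewed"
    CODED = "coded"
    CHECKED = "checked"


@dataclass(frozen=True)
class Source:
    source_id: str          # assumed format, e.g. "SUB-0042"
    stakeholder_group: str  # e.g. "citizen", "organisation"
    file_format: str        # e.g. "pdf", "email"


@dataclass
class CodedPoint:
    point_id: str
    source_id: str          # links the point back to its submission
    locator: str            # where in the source, e.g. "p. 3, para 2"
    quote: str
    theme_codes: list[str] = field(default_factory=list)
    issue: str = ""
    status: ReviewStatus = ReviewStatus.UNREVIEWED


def make_source_id(sequence: int, prefix: str = "SUB") -> str:
    """Build a zero-padded source ID from an intake sequence number."""
    return f"{prefix}-{sequence:04d}"


def qa_problems(point: CodedPoint, sources: dict[str, Source]) -> list[str]:
    """Return the QA problems found for one coded point (empty if none)."""
    problems = []
    if point.source_id not in sources:
        problems.append("unknown source ID")
    if not point.locator.strip():
        problems.append("missing locator")
    if not point.quote.strip():
        problems.append("missing quote")
    if not point.theme_codes:
        problems.append("no theme code")
    return problems
```

The point of the sketch is that a coded point cannot pass QA unless it names a known source, a locator, a quote, and at least one theme. The same rules can be expressed as required fields or validation formulas in whatever database tool the team already uses.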

Build the analysis workflow

I prepare the database, import or structure submissions, support coding, create synthesis views, and set up issue or response matrix outputs.

Support drafting and handover

I prepare the synthesis, drafting notes, source-linked review packs, handover notes, and any AI-supported retrieval layer agreed in scope.

The output is a traceable analysis system the team can keep reviewing

The exact deliverables depend on volume, format, review depth, and whether the work must support drafting, response preparation, or final review.

- Submission intake tracker and source ID system

- Public submission database

- Coding schema and stakeholder group fields

- Claim, quote, theme, and issue tracking

- Issue matrix or response matrix support

- Thematic synthesis tables and summary notes

- Review dashboards and QA checklist

- AI-supported retrieval assistant where appropriate

- Drafting support pack and handover notes

Where this system is useful

This page is for consultation work where public or stakeholder input must become structured evidence, not a loose set of summaries.

White paper and policy consultation

Turn public submissions and specialist comments into source-linked themes, issue summaries, drafting notes, and review material.

Stakeholder comment periods

Create a coded database for feedback from citizens, organisations, technical reviewers, consultants, and internal teams.

Legislative or public participation review

Keep comments, source locators, categories, response notes, and status fields visible through the review process.

Related case studies

The strongest proof is the South African White Paper evidence workflow, where public submissions, synthesis, drafting support, and coded review comments stayed linked.

Use these tools if you need to diagnose the workflow first

Which calculator fits best depends on where the pressure sits: search, capacity, traceability, reporting drag, or knowledge-base value.

Useful guides before you scope the work

These articles explain the thinking behind the service and the adjacent workflow problems.

Questions before you enquire

Can this handle hundreds or thousands of submissions?

Yes, but the scope depends on volume, formats, coding depth, stakeholder categories, deadline, and review outputs. A larger volume needs a clearer intake and QA process before analysis begins.

Can submissions stay linked to the original source?

Yes. Source IDs, file links, comment references, quote fields, and locator notes can be built into the database so themes and drafting points remain checkable.
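
To show what "checkable" can mean in practice, here is a small Python sketch. It assumes coded points are stored as dictionaries with hypothetical `source_id`, `locator`, and `themes` keys; the function names `evidence_by_theme` and `unsupported_themes` are also invented for this example.

```python
from collections import defaultdict


def evidence_by_theme(points):
    """Map each theme to the sorted (source_id, locator) pairs behind it."""
    index = defaultdict(list)
    for point in points:
        for theme in point["themes"]:
            index[theme].append((point["source_id"], point["locator"]))
    return {theme: sorted(refs) for theme, refs in index.items()}


def unsupported_themes(draft_themes, index):
    """List themes used in a draft that have no coded evidence behind them."""
    return [theme for theme in draft_themes if not index.get(theme)]


# Illustrative data only; these IDs and locators are made up.
points = [
    {"source_id": "SUB-0002", "locator": "p. 1", "themes": ["access"]},
    {"source_id": "SUB-0001", "locator": "para 4", "themes": ["access", "cost"]},
]
index = evidence_by_theme(points)
gaps = unsupported_themes(["access", "cost", "safety"], index)
```

Here `index["access"]` lists both submissions with their locators, and `gaps` flags `"safety"` as a theme the draft mentions without any coded source, which is the kind of gap a reviewer would want to see before sign-off.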

Can AI help code or summarise the submissions?

AI can help with first-pass summaries, classification, comparison, and retrieval. Human review is still needed for public-sector, legal, policy, and consultation work.

Can the system support a response matrix?

Yes. The workflow can include issue fields, response notes, status fields, reviewer comments, and source links so a response matrix can be built from coded material.
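
A minimal Python sketch of that step follows, assuming each coded point is a dictionary with hypothetical `issue` and `source_id` keys. The column names, the `open` default status, and the functions `build_response_matrix` and `to_csv` are illustrative choices, not a prescribed format.

```python
import csv
import io

# Assumed column layout for the matrix; adjust to the team's template.
FIELDNAMES = ["issue", "submission_count", "source_ids", "response_note", "status"]


def build_response_matrix(points):
    """Group coded points by issue into one matrix row per issue."""
    sources_by_issue = {}
    for point in points:
        sources_by_issue.setdefault(point["issue"], set()).add(point["source_id"])
    return [
        {
            "issue": issue,
            "submission_count": len(source_ids),
            "source_ids": "; ".join(sorted(source_ids)),
            "response_note": "",   # left blank for the drafting team
            "status": "open",      # hypothetical default status
        }
        for issue, source_ids in sorted(sources_by_issue.items())
    ]


def to_csv(matrix):
    """Serialise the matrix rows to CSV text for a spreadsheet or review pack."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES, lineterminator="\n")
    writer.writeheader()
    writer.writerows(matrix)
    return buffer.getvalue()
```

Because every row carries the source IDs that raised the issue, a reviewer can move from a response note back to the submissions behind it. The response notes and statuses are still written and approved by people; the code only assembles the table.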

What should I send before a scoping call?

Send the submission formats, expected volume, consultation questions, required categories, deadline, output format, tool environment, and whether the material is sensitive.

Send the submission volume, formats, deadline, and output needed

If your team is handling public submissions, stakeholder comments, or consultation review material, send a short brief with the formats, volume, review criteria, deadline, and required output.