- Documents are spread across folders, reports, drives, spreadsheets, and shared links.

- The team does not have a clean inventory of approved source material.

- Users ask broad questions and get answers that are hard to verify.

- Prompts, output formats, and review steps differ from person to person.

- The team needs AI to support retrieval and drafting, not replace expert judgement.

AI knowledge base build for approved documents and reviewable outputs

AI becomes useful when the source layer is clear. I help teams prepare approved material, define retrieval boundaries, build prompt and output rules, and keep human review in the workflow.

AI is only useful when the source layer is ready.

This page is for teams that want AI support but do not yet trust the outputs because the source material, retrieval rules, and review process are unclear.

Teams that want AI, but need stronger source control first

The strongest fit is a team with useful internal material and a real need to search, compare, summarise, or draft from it more consistently.

Research, policy, and reporting teams

For teams working with evidence libraries, policy material, donor reports, project documents, case studies, transcripts, or review packs.

Internal knowledge teams

For organisations with document libraries, operating records, manuals, or internal knowledge that people struggle to find and reuse.

Teams that do not trust AI outputs yet

For teams that need approved sources, retrieval boundaries, output templates, and human review before AI can be used responsibly.

This offer sits under Custom AI Building

The build depends on source structure, retrieval discipline, and review rules. Related services support the source and reporting layers around the AI assistant.

Custom AI Building

The parent service for controlled AI assistants, prompt libraries, retrieval workflows, and reviewable output formats.

View Custom AI Building

Database Architecture

Useful when the source inventory, metadata table, file index, or evidence structure needs to be cleaned before AI is added.

View Database Architecture

Data Synthesis

Useful when the knowledge base needs to support coding, comparison, theme grouping, or evidence synthesis.

View Data Synthesis

Report Writing

Useful when the assistant needs to support summaries, draft sections, source-linked notes, or review-ready report outputs.

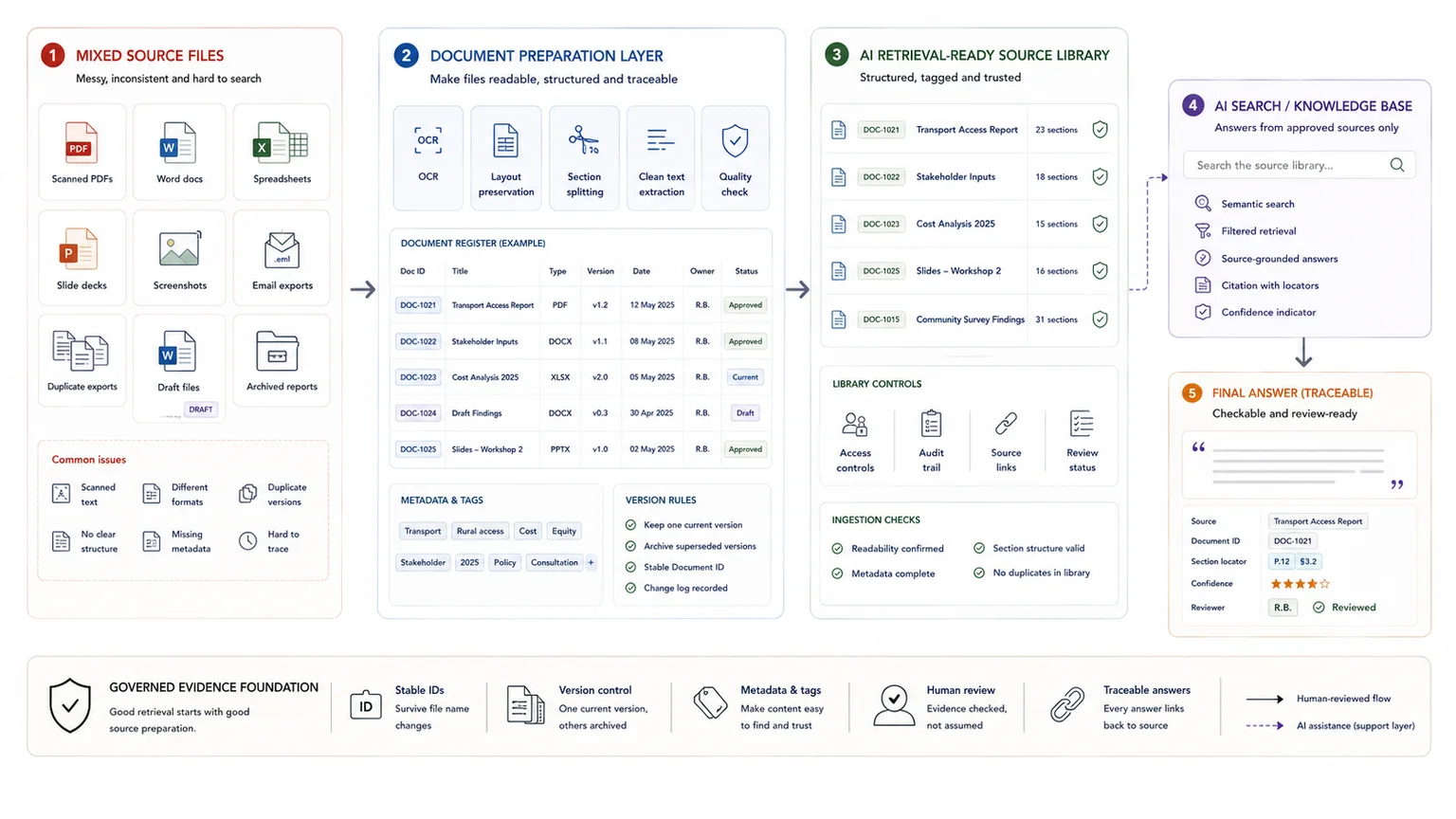

View Report Writing

The build starts with approved source material

The quality of the assistant depends on the quality, structure, and boundaries of the material it is allowed to use.

Source inventory

- Approved documents

- Reports and briefs

- Policy or programme material

- Evidence tables

Metadata and file preparation

- File index

- Source IDs

- Document status

- Access and sensitivity notes
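As a rough illustration of what one row of the file index might hold, here is a minimal Python sketch. The field names, status values, `SRC-001` ID format, and file paths are all assumptions made for the example, not a fixed schema; the real structure depends on the team's material and tools.

```python
from dataclasses import dataclass
from enum import Enum


class DocumentStatus(Enum):
    # Hypothetical status values; a real build would agree these with the team.
    APPROVED = "approved"
    DRAFT = "draft"
    OUTDATED = "outdated"
    EXCLUDED = "excluded"


@dataclass
class SourceRecord:
    source_id: str                 # stable ID that answers can quote (format assumed)
    title: str
    file_path: str
    status: DocumentStatus
    sensitivity: str = "internal"  # assumed label for the sensitivity note
    access_notes: str = ""         # free-text access note


# Illustrative entries only; titles and paths are made up for the example.
file_index = [
    SourceRecord("SRC-001", "Annual programme report", "reports/annual.pdf",
                 DocumentStatus.APPROVED),
    SourceRecord("SRC-002", "Policy brief (working draft)", "briefs/policy-draft.docx",
                 DocumentStatus.DRAFT, access_notes="Not cleared for retrieval"),
]

# Only approved records are offered to the assistant.
approved_ids = [r.source_id for r in file_index if r.status is DocumentStatus.APPROVED]
print(approved_ids)  # ['SRC-001']
```

In practice the same columns often live in a spreadsheet or metadata table rather than code. The point is that every file carries an ID, a status, and an access note before it goes near the assistant.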

Output rules

- Prompt library

- Output templates

- Source checking rules

- Limitations notes

The assistant should have clear limits

A controlled AI knowledge base should make source material easier to work with. It should not become an unchecked authority.

Not a general chatbot

The assistant is built around approved material, defined tasks, prompt rules, and output formats rather than open-ended guessing.

Not a replacement for review

Outputs still need human checking, especially when they support claims, findings, recommendations, or sensitive decisions.

Not a place for unclear source material

Drafts, duplicates, outdated files, confidential material, and unsupported notes need to be marked clearly or excluded.
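One way to make this rule explicit is a small check that decides, for each file-index entry, whether it may enter the retrieval library and why. The sketch below is illustrative: the `status` and `confidential` keys, the status labels, and the file names are assumptions for the example, and the default is to exclude anything that is not clearly approved.

```python
from typing import Tuple

# Assumed labels for material that should stay out of retrieval.
EXCLUDED_STATUSES = {"draft", "duplicate", "outdated", "unsupported"}


def retrieval_decision(doc: dict) -> Tuple[bool, str]:
    """Return (allowed, reason) for one file-index entry."""
    if doc.get("confidential", False):
        return False, "confidential: needs agreed safeguards"
    status = doc.get("status", "unknown")
    if status in EXCLUDED_STATUSES:
        return False, f"status is '{status}'"
    if status != "approved":
        # Unmarked or unrecognised files are excluded by default.
        return False, f"status '{status}' is not approved"
    return True, "approved"


# Hypothetical entries for illustration.
docs = [
    {"name": "final-report.pdf", "status": "approved"},
    {"name": "notes-v3.docx", "status": "draft"},
    {"name": "hr-cases.xlsx", "status": "approved", "confidential": True},
    {"name": "old-scan.pdf"},
]

library = [d["name"] for d in docs if retrieval_decision(d)[0]]
print(library)  # ['final-report.pdf']
```

Recording the reason next to each exclusion keeps the decision reviewable, so a file can be re-admitted later once its status is resolved.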

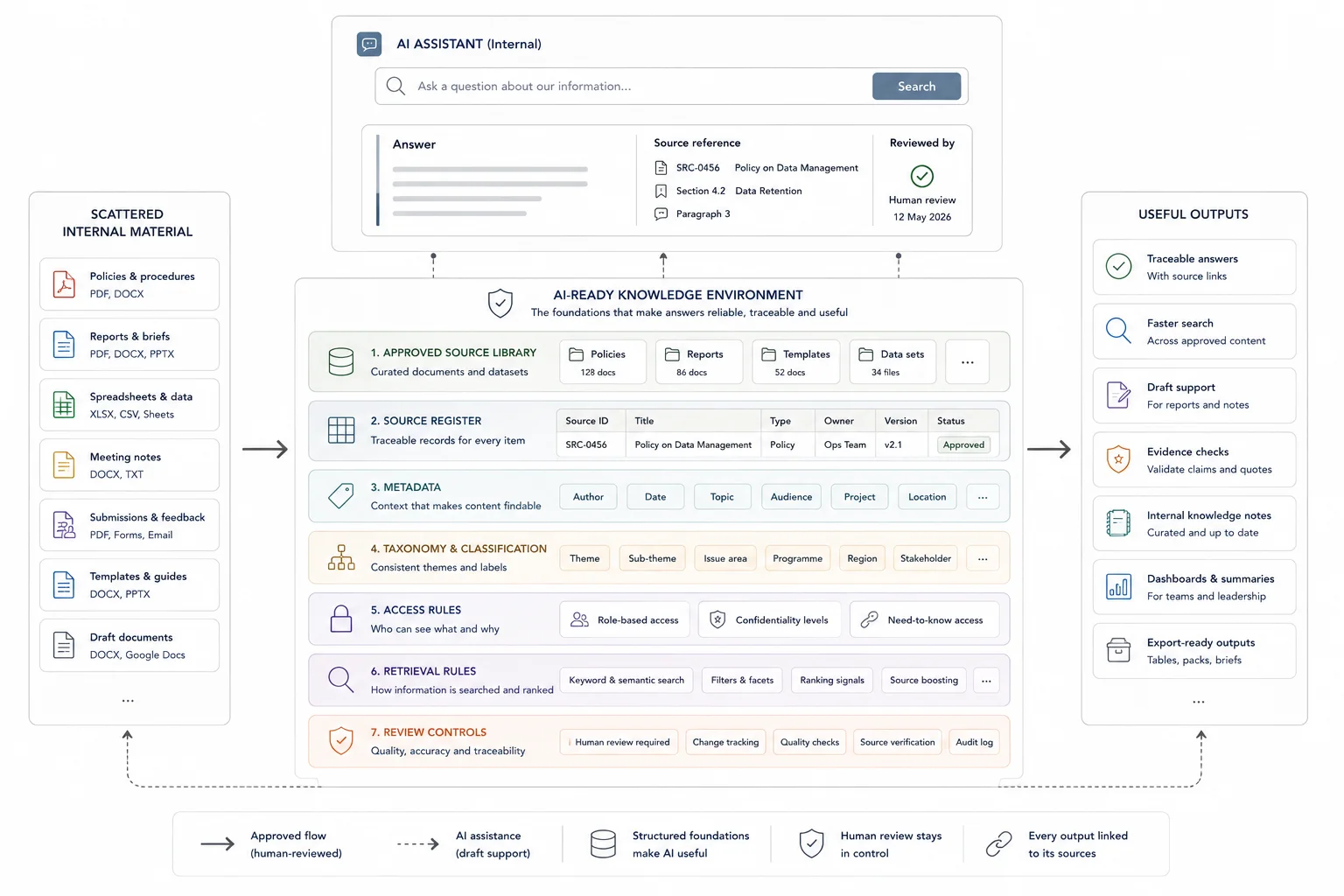

A controlled assistant around your own source material

The build can include an approved source library, metadata table, file index, custom GPT or AI assistant, system instructions, prompt library, output templates, QA test questions, source checking process, user guide, and handover session.

The aim is to help the team find source material faster, compare documents, prepare first-draft notes, and produce outputs in agreed formats while keeping limitations and review steps visible.

Connect your document environment to practical retrieval.

Best when teams need fast answers, internal search, and query workflows grounded in their own records and knowledge.

The work starts by deciding what the AI is allowed to know

The source inventory and use cases come before the assistant. That is where most trust problems are solved.

Define use cases and boundaries

I clarify what users need to ask, which sources are approved, which files are excluded, and what the assistant should not answer.

Prepare the source library

I organise files, metadata, source IDs, access notes, file indexes, and any evidence tables needed for retrieval.

Build prompts and output rules

I set up system instructions, prompt libraries, source checking prompts, and output templates linked to the team's real tasks.

Test, refine, and hand over

I test with real questions, mark limitations, refine weak outputs, document the review process, and hand over the user guide.

The deliverables make the assistant easier to use and easier to check

The exact build depends on tool choice, file volume, sensitivity, and whether the assistant supports retrieval, summaries, drafting, or synthesis.

- Approved source library

- Source inventory and metadata table

- File index and preparation notes

- Custom GPT or AI assistant

- System instructions and prompt library

- Output templates

- Limitations notes and answer-boundary rules

- QA test questions

- Source checking process

- User guide and handover session

Use cases for a controlled AI knowledge base

The assistant should support real work that the team already does, not create another tool that needs extra management.

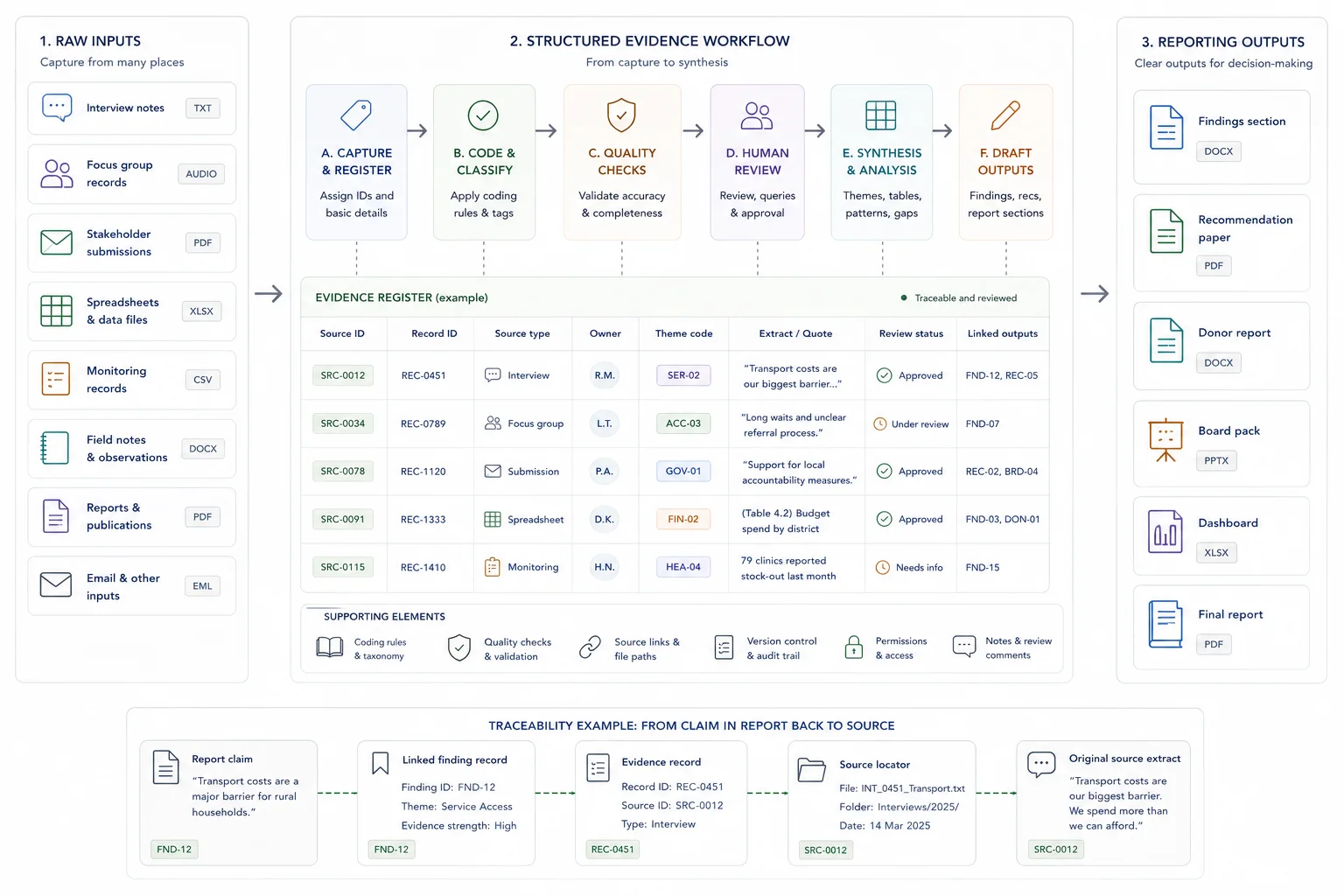

Evidence and report retrieval

Ask source-linked questions across reports, evidence tables, case material, and approved documents before drafting or review.

Document comparison and summaries

Compare documents, prepare first-pass summaries, identify gaps, and gather source notes for human review.

Internal knowledge reuse

Help staff find approved policies, procedures, examples, client material, or project knowledge without searching through folders manually.

Human review is part of the build

The assistant should make review easier, not hide the need for it.

Source checking

Answers should be checked against source files, source IDs, metadata, or evidence tables before they are used in reports or decisions.
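Part of this check can be supported mechanically. The sketch below assumes the output template asks the assistant to cite sources as bracketed IDs such as `[SRC-001]`; that citation format, the function name, and the sample answer are hypothetical. It flags answers that cite nothing or cite an ID that is not in the approved inventory.

```python
import re

# Assumed citation format: bracketed source IDs like [SRC-001].
CITATION_PATTERN = re.compile(r"\[(SRC-\d+)\]")


def check_citations(answer: str, approved_ids: set) -> dict:
    """List cited source IDs and flag any that are not in the approved inventory."""
    cited = set(CITATION_PATTERN.findall(answer))
    unknown = cited - approved_ids
    return {
        "cited": sorted(cited),
        "unknown": sorted(unknown),
        # No citations at all, or any unknown ID, sends the answer back for review.
        "needs_review": not cited or bool(unknown),
    }


approved = {"SRC-001", "SRC-002"}
answer = "First claim [SRC-001]. Second claim [SRC-009]."  # placeholder text

print(check_citations(answer, approved))
# {'cited': ['SRC-001', 'SRC-009'], 'unknown': ['SRC-009'], 'needs_review': True}
```

Passing this check only shows that the cited IDs exist in the inventory. A reviewer still needs to open the source and confirm that it supports the claim.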

Prompt discipline

Prompt libraries and output templates reduce variation in how users prompt the assistant and make results easier to compare.

Known limitations

The handover should explain what the assistant can support, where it is weak, and when staff should return to the source material.

What the client needs to provide

At the start, the source and review rules matter more than the tool choice.

Approved documents and folders

Source documents, folder access, current indexes, evidence tables, past reports, and any files that should be excluded.

Use cases and review rules

Common questions, preferred outputs, examples of good and bad answers, review steps, and sign-off expectations.

Access and privacy requirements

Preferred tools, data handling rules, sensitivity limits, team access rules, and any AI-use restrictions.

Related case studies

These examples show AI retrieval and custom GPT work as a controlled layer on top of structured evidence, source tracking, and human review.

Use these tools if you need to diagnose the workflow first

The strongest calculator fit depends on where the pressure sits: search, capacity, traceability, reporting drag, or knowledge-base value.

Useful guides before you scope the work

These articles explain the thinking behind the service and the adjacent workflow problems.

Questions before you enquire

Is this just a custom GPT?

No. A custom GPT may be part of the build, but the work also includes source preparation, metadata, prompt rules, output templates, testing, review guidance, and handover.

Can the assistant answer only from approved sources?

The build can be designed around approved source material and clear answer boundaries. Tool limits still matter, so source checking and human review remain part of the workflow.

Can you work inside our current tools?

Where possible, yes. The tool choice depends on the client's approved environment, data sensitivity, access rules, and what the assistant needs to do.

What should the AI not do?

It should not make final decisions, invent unsupported claims, treat drafts as approved sources, handle sensitive material without agreed safeguards, or replace expert review.

What should I send before a scoping call?

Send the source types, folder structure, rough file volume, intended users, common questions, preferred output formats, review requirements, and any AI-use restrictions.

Send the source library and the questions your team needs to ask

If your team wants AI support but needs stronger source control first, send the source types, current folder setup, expected use cases, approved tools, and review requirements.