- Source material is spread across folders, spreadsheets, PDFs, transcripts, notes, submissions, and draft sections.

- Report writers cannot quickly find the evidence behind claims, findings, or recommendations.

- Themes, quotes, gaps, and review notes live in different files.

- AI tools are being used, but the source layer and review route are not clear enough.

- The team needs defensible outputs without losing human judgement or source traceability.

Evidence, Insight & Reporting Engine for report-ready evidence workflows

Your team may already have the documents, submissions, interviews, case studies, notes, and research inputs. The harder part is turning that material into a source-linked system that writers, reviewers, and decision-makers can use under pressure.

You have the evidence. The hard part is turning it into a report-ready system.

This offer is for teams where evidence exists, but the route from source material to findings, recommendations, review, and final report sections is slow, unclear, or risky.

Teams that need the evidence layer behind the report

The strongest fit is evidence-heavy work where several people need to review, write from, and check the same source base.

UNICEF and UN primary contractors

For contractors carrying evidence-heavy reporting workstreams where qualitative material, donor standards, and review deadlines need one working structure.

Research and evaluation teams

For teams turning interviews, case studies, field notes, survey comments, and source documents into findings, tables, and report-ready sections.

Policy and public consultation teams

For white paper, public consultation, situation analysis, and review teams that need claims, source locators, themes, and drafting points kept together.

This buying route sits underneath the core service pages

This page combines several service areas. The core service pages remain the main capability layer.

Data Synthesis

Use this when raw evidence needs to be grouped, compared, coded, and turned into findings.

View Data Synthesis

Report Writing

Use this when structured evidence needs to move into findings sections, summaries, recommendations, or draft report content.

View Report Writing

Insight Generation

Use this when the team needs priorities, implications, decision notes, or recommendation support from the evidence base.

View Insight Generation

Custom AI Building

Use this when the evidence base also needs controlled retrieval, custom GPT support, prompt libraries, or output templates.

View Custom AI Building

Database Architecture

Use this when the first problem is the structure of the source tracker, evidence database, data dictionary, or source library.

View Database Architecture

Common source material this engine can organise

The source base can be mixed. The job is to create enough structure for review, synthesis, drafting, and handover.

Research and evaluation inputs

- Interviews and focus groups

- Case studies

- Field notes

- Survey comments

Policy and consultation material

- Public submissions

- Specialist inputs

- Review comments

- Draft policy sections

Reporting and evidence files

- Donor reports

- Source documents

- Evidence tables

- Previous analysis packs

A working evidence layer behind the report

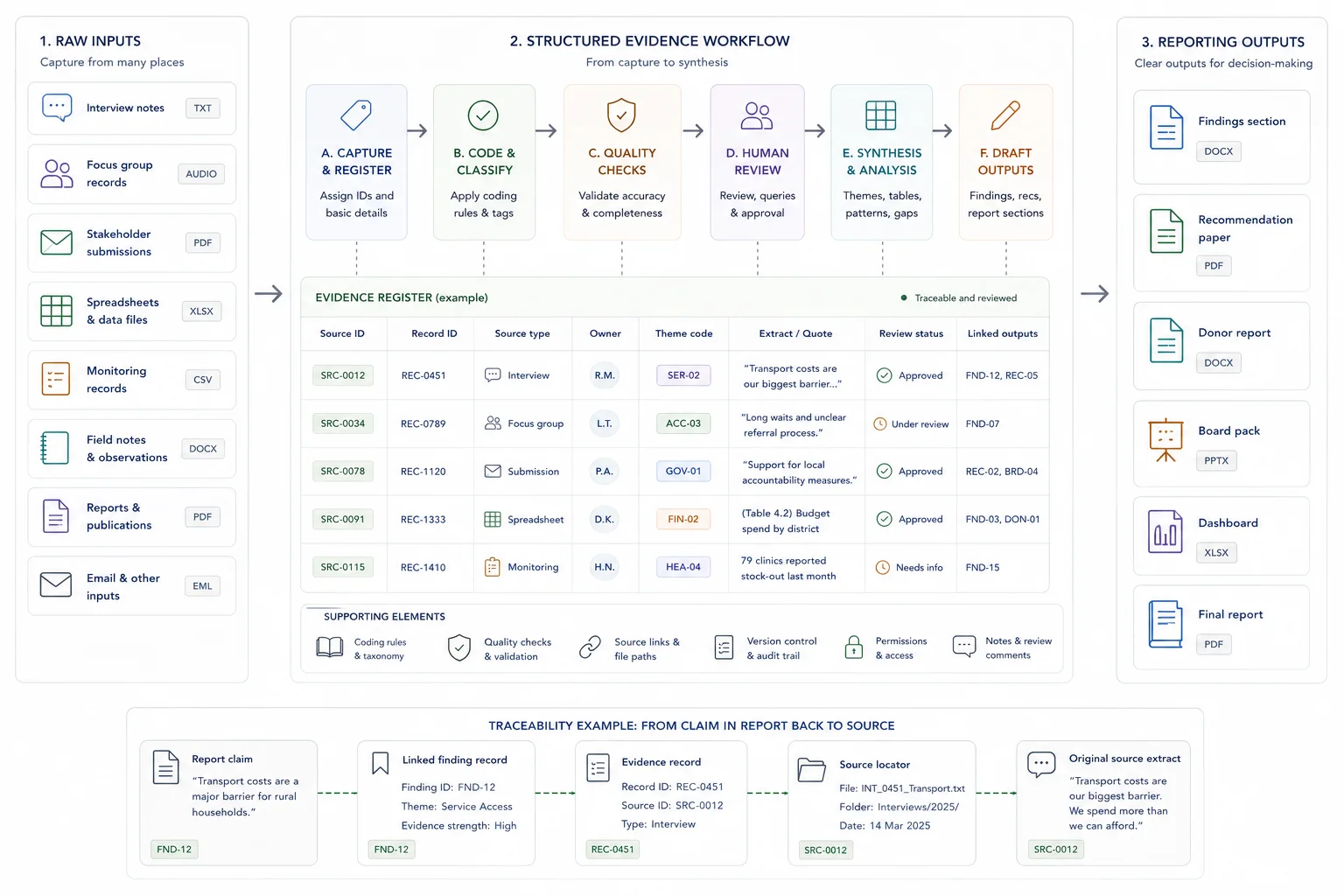

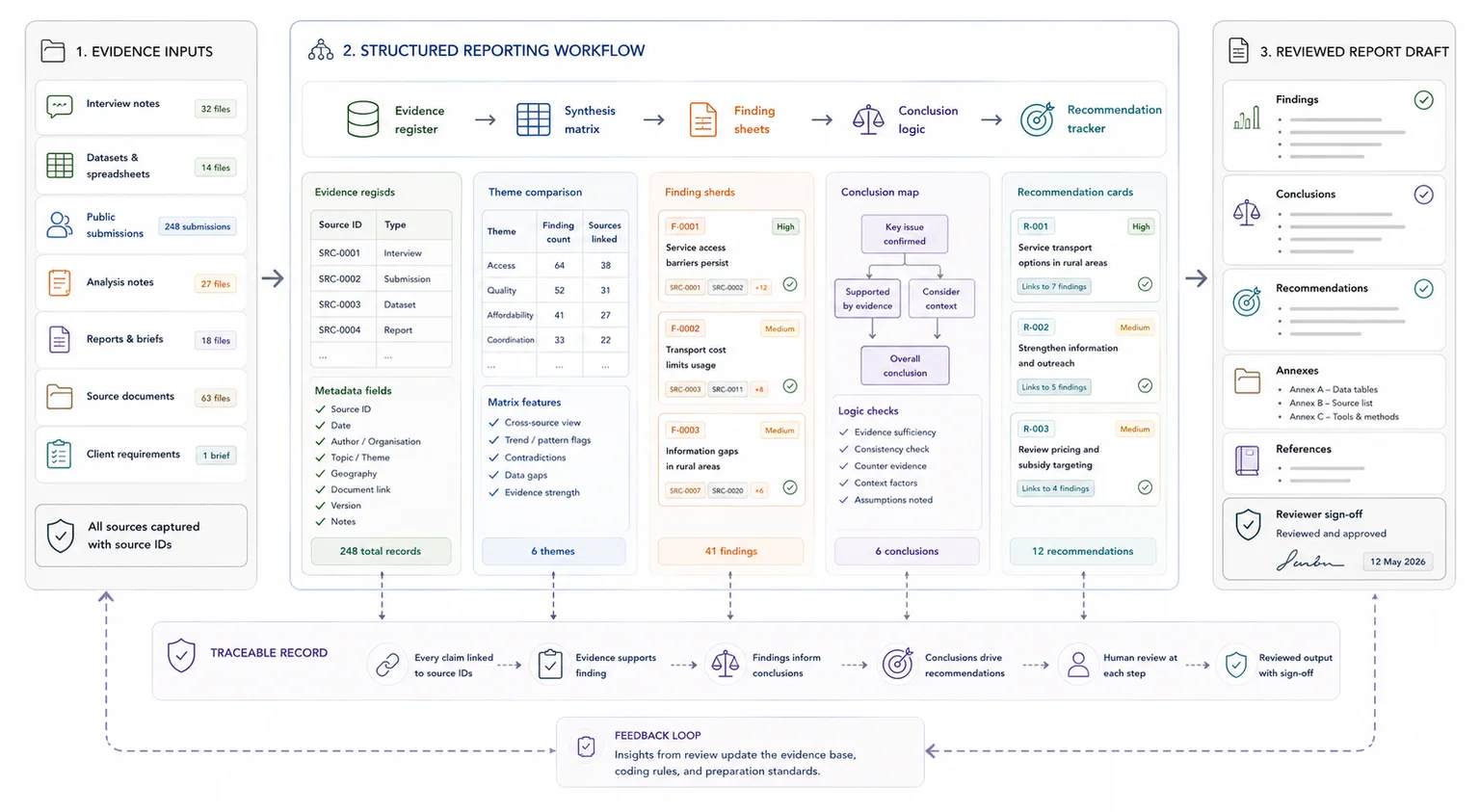

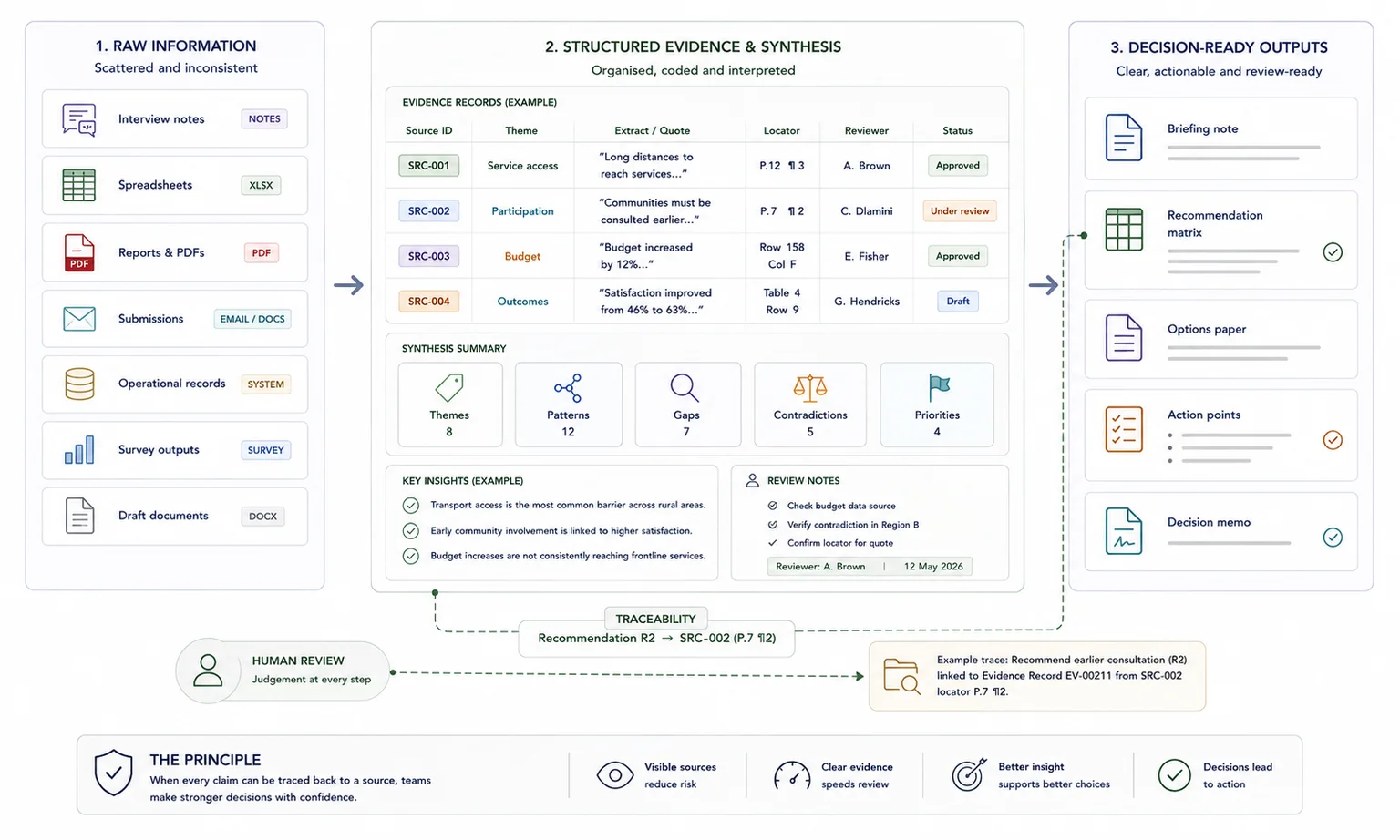

The engine brings source tracking, database architecture, coding, synthesis, reporting support, review notes, and careful AI use into one delivery chain. The exact build depends on the source material, the reporting deadline, and the review process.

The aim is not to automate judgement. It is to make the route from source material to findings, recommendations, report sections, and review decisions easier to follow and easier to check.

Bring multiple evidence streams into one coherent view.

Best when interviews, studies, notes, and submissions need to be compared, grouped, and turned into findings.

The workflow starts with the output and works back to the evidence

The structure should support the report, the review process, and the handover, not only tidy the source files.

Map the source base and report need

I review the material, report brief, questions, required outputs, deadline, tools, and review steps before designing the workflow.

Build the evidence structure

I set up the source tracker, evidence database, coding framework, data dictionary, quote or claim tracker, and source locator rules.
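As a rough illustration only, a source tracker and a claim tracker with source locators could be sketched in Python as below. The field names, the ID scheme (`INT-001`, `SUB-014`), the sample records, and the helper functions are all assumptions made for this example, not a fixed schema. The real structure depends on the source material and the tools the team is allowed to use.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    source_id: str    # hypothetical ID scheme, e.g. "INT-001"
    source_type: str  # e.g. "interview", "submission"
    title: str


@dataclass(frozen=True)
class Claim:
    claim_id: str
    source_id: str    # must match a Source.source_id
    locator: str      # where the claim sits in the source, e.g. "p. 4, para 2"
    quote: str
    codes: tuple[str, ...]  # codes taken from the coding framework


def unlinked_claims(sources, claims):
    """Return claims that cite an unknown source or have no locator."""
    known = {s.source_id for s in sources}
    return [c for c in claims
            if c.source_id not in known or not c.locator.strip()]


def claims_by_code(claims):
    """Group claim IDs under each code so writers can pull evidence by theme."""
    grouped = {}
    for c in claims:
        for code in c.codes:
            grouped.setdefault(code, []).append(c.claim_id)
    return grouped


# Invented sample records, for illustration only.
sources = [
    Source("INT-001", "interview", "Interview, district officer"),
    Source("SUB-014", "submission", "Public submission 14"),
]
claims = [
    Claim("C-001", "INT-001", "p. 4, para 2", "Sample quote A", ("access",)),
    Claim("C-002", "SUB-014", "section 3", "Sample quote B", ("access", "staffing")),
    Claim("C-003", "SUB-014", "", "Sample quote C", ("staffing",)),
]

print([c.claim_id for c in unlinked_claims(sources, claims)])
print(claims_by_code(claims))
```

In this sketch, a claim with an empty locator is flagged for review rather than dropped, so the reviewer decides whether it can still be used.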

Create synthesis and reporting outputs

I turn the structured evidence into synthesis tables, findings summaries, gap notes, recommendations support, and report-ready sections.
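One way to picture a thematic matrix and its gap notes is the minimal Python sketch below. It counts coded items per theme and source type, then lists the empty cells as candidate gaps. The theme names, source types, sample items, and function names are hypothetical, and a zero count only prompts a reviewer to look. It is not a finding in itself.

```python
from collections import Counter


def thematic_matrix(coded_items, themes, source_types):
    """Count coded items for every (theme, source type) pair."""
    counts = Counter((i["theme"], i["source_type"]) for i in coded_items)
    return {t: {s: counts[(t, s)] for s in source_types} for t in themes}


def gap_notes(matrix):
    """List the cells with no coded evidence, as prompts for human review."""
    return [f"No {s} evidence coded to '{t}'"
            for t, row in matrix.items()
            for s, n in row.items() if n == 0]


# Invented sample data, for illustration only.
coded_items = [
    {"theme": "access", "source_type": "interview"},
    {"theme": "access", "source_type": "submission"},
    {"theme": "access", "source_type": "interview"},
    {"theme": "staffing", "source_type": "interview"},
]
themes = ["access", "staffing"]
source_types = ["interview", "submission"]

matrix = thematic_matrix(coded_items, themes, source_types)
print(matrix)
print(gap_notes(matrix))
```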

Add QA, AI support, and handover

Where appropriate, I add an AI knowledge base or custom GPT, prompt library, QA checks, source checking process, SOP, and handover notes.

The deliverables give writers and reviewers a clearer path through the evidence

The final scope depends on the source volume, reporting requirements, and sensitivity of the material.

- Source tracker and structured evidence database

- Coding framework and data dictionary

- Quote or claim tracker with source locators

- Synthesis tables and thematic matrices

- Findings summaries and gap notes

- Recommendations matrix or recommendations support table

- Report-ready sections, where scoped

- AI knowledge base or custom GPT where appropriate

- QA and source checking process

- SOP, user guide, or handover notes

Where the engine is most useful

This is the broadest productised offer because it connects structure, synthesis, writing, insight, and retrieval.

Situation analyses and donor reports

Move from qualitative source material to structured evidence, findings, recommendations, and review-ready report sections.

White paper and policy review work

Keep public submissions, specialist inputs, coded claims, synthesis outputs, drafting support, and review comments connected.

Research and evaluation reporting

Create a working route from interviews, case studies, notes, and source documents into findings, tables, and report-ready outputs.

How AI can support the workflow without taking over

AI can be useful when it sits on top of a controlled source layer and remains subject to review.

Useful AI tasks

Retrieval, first-pass summaries, classification, comparison, drafting prompts, and checking questions can reduce manual drag when the source base is ready.

Review rules stay visible

AI outputs should be checked against source locators, quotes, evidence tables, and reviewer judgement before they become findings or recommendations.
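A simple version of that check can be sketched in Python, assuming each AI-drafted item carries a quote and a source ID. The data shapes, the sample text, and the function names are assumptions for the example. The sketch only tests whether a quote appears in the cited source after whitespace and case are normalised. It does not judge whether the quote supports the finding, which stays with the reviewer.

```python
import re


def normalise(text):
    """Collapse whitespace and lower-case so line breaks do not cause false misses."""
    return re.sub(r"\s+", " ", text).strip().lower()


def check_quotes(draft_items, source_texts):
    """Return (quote, status) pairs: 'found', 'not found', or 'unknown source'."""
    results = []
    for item in draft_items:
        source = source_texts.get(item["source_id"])
        if source is None:
            status = "unknown source"
        elif normalise(item["quote"]) in normalise(source):
            status = "found"
        else:
            status = "not found"
        results.append((item["quote"], status))
    return results


# Invented sample data, for illustration only.
source_texts = {
    "SUB-014": "We support the proposal,  but rural clinics\nneed more staff before it starts.",
}
draft_items = [
    {"quote": "rural clinics need more staff", "source_id": "SUB-014"},
    {"quote": "clinics are fully staffed", "source_id": "SUB-014"},
    {"quote": "funding is adequate", "source_id": "INT-999"},
]

for quote, status in check_quotes(draft_items, source_texts):
    print(f"{status}: {quote}")
```

Anything marked "not found" or "unknown source" goes back to a human reviewer rather than into the report.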

Limits are documented

The handover can include prompt notes, answer boundaries, QA checks, and guidance on where AI should not be used.

What the client needs to provide

The first scoping step is easier when the evidence, output, and review process are visible.

Source material

Documents, interviews, notes, submissions, spreadsheets, evidence files, reports, draft sections, and any existing source trackers.

Report and review context

Report brief, research or policy questions, required headings, donor or client standards, review process, deadline, and sign-off route.

Tool and sensitivity constraints

The tools the team is allowed to use, access requirements, confidentiality limits, and whether AI use is approved.

Related case studies

These case studies show the full path from scattered source material to structured evidence, synthesis, reporting support, retrieval, and review.

Use these tools if you need to diagnose the workflow first

The best-fit calculator depends on where the pressure sits: search, capacity, traceability, reporting drag, or knowledge-base value.

Useful guides before you scope the work

These articles explain the thinking behind the service and the adjacent workflow problems.

Questions before you enquire

Is this a report writing service or a system build?

It can include both, but the main value is the working evidence layer behind the report. Some projects need only the structure and synthesis outputs. Others need draft sections, recommendations support, and review-ready writing.

Can this work with public submissions and consultation material?

Yes. If the project is mainly public submissions or consultation review, the Public Submission Analysis System may be the cleaner route. This page fits broader evidence-to-report workflows that may include submissions, interviews, documents, case studies, and review comments.

Can AI be included?

Yes, where appropriate and approved. AI can support retrieval, summaries, comparison, drafting prompts, and QA checks, but source checking and human review remain part of the process.

What makes this different from a normal synthesis pack?

A synthesis pack may summarise themes. This engine also connects source tracking, evidence structure, findings, recommendations, report sections, review logic, and handover into one workflow.

What should I send before a scoping call?

Send the source types, rough volume, report brief, deadline, review process, current tools, and whether source traceability or AI use matters for the project.

Send the evidence set, reporting need, and deadline

If the team has evidence but the route to findings, recommendations, and report sections is slow or risky, send a short brief with the source types, output needed, review process, deadline, and tool constraints.