Interviews and case studies can produce some of the most useful evidence in a report.

They show what people experienced, what changed, what blocked progress, what worked in practice, and where recommendations should focus.

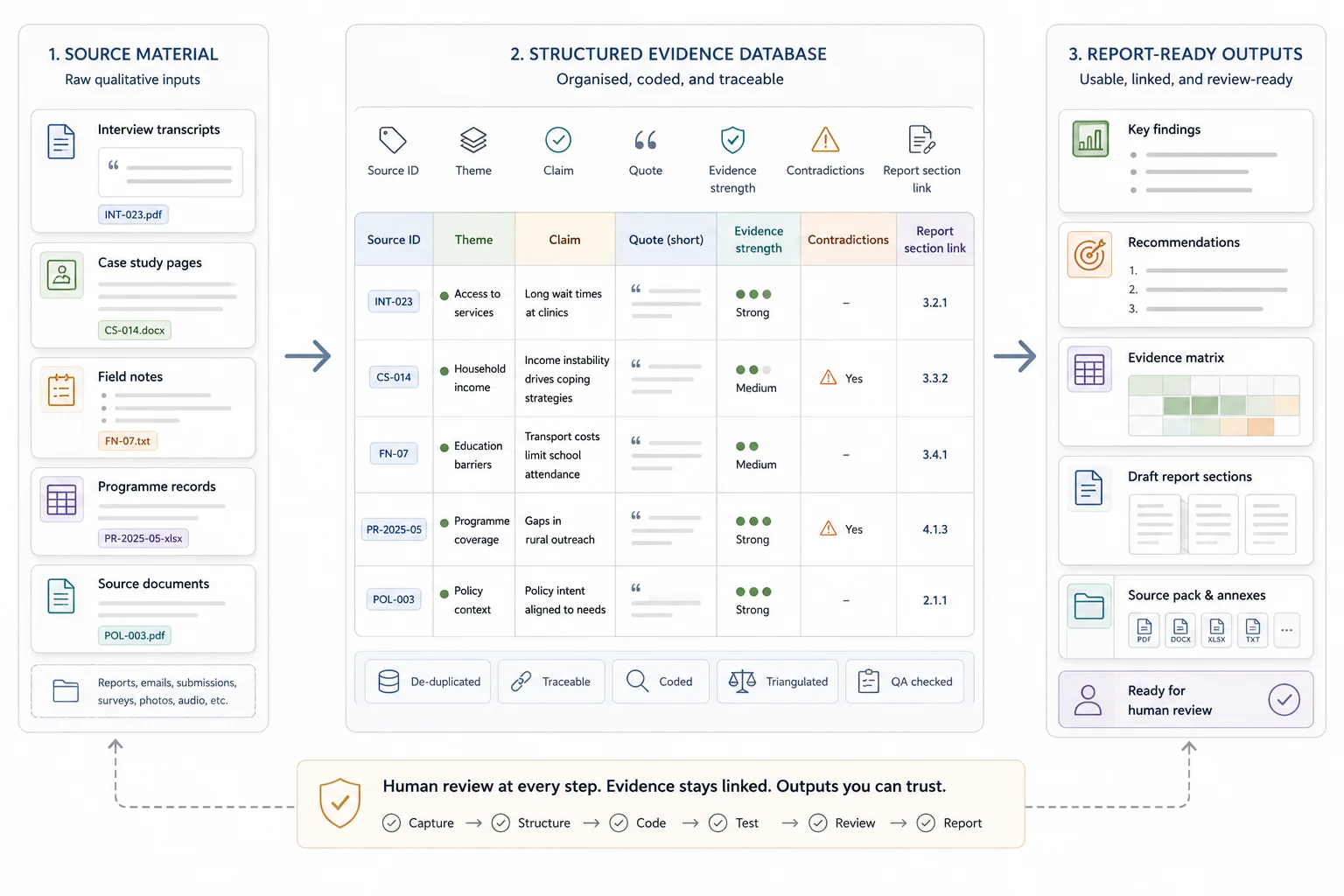

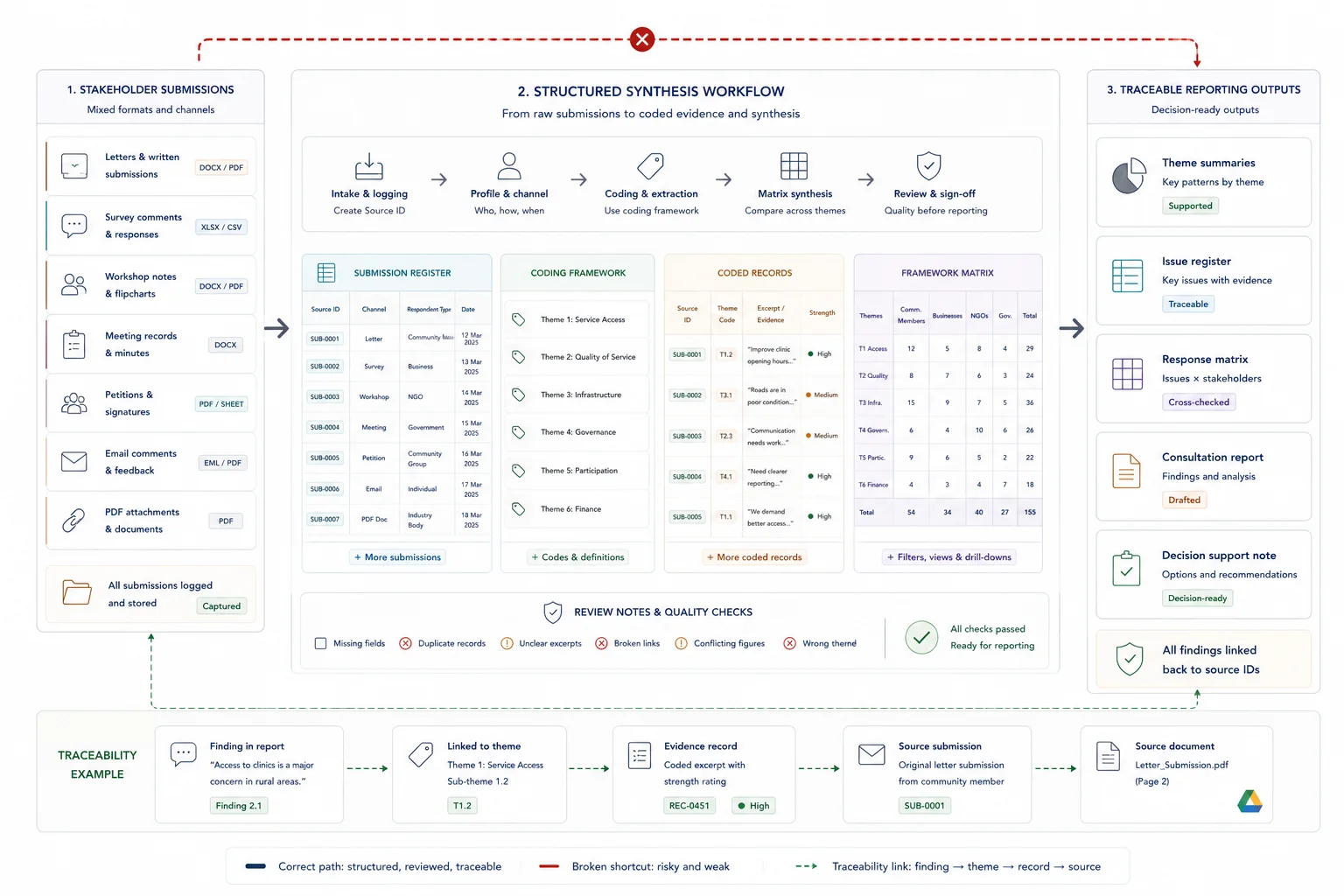

But raw qualitative material is not ready for a report on its own. A folder full of transcripts, field notes, case study drafts, quotes, documents, and project records still needs structure. Without that structure, useful evidence gets lost, findings become vague, and recommendations are harder to defend.

This guide explains how to turn interviews and case studies into report-ready findings using a clear evidence workflow. It covers source tracking, evidence extraction, coding, AI-assisted synthesis, quote-per-claim checks, evidence matrices, finding strength, recommendations, and final report outputs.

Quick answer

Turning interviews and case studies into report-ready findings means moving from raw notes, transcripts, and case material into traceable findings that can support a report. Each finding should show the pattern, the evidence behind it, the implication, and any recommendation that follows. The work is not just summarising interviews. It is building a clean evidence trail from source material to final wording.

Who this guide is for

This guide is for evaluation teams, research contractors, consultants, policy teams, and donor-funded project teams who need to turn qualitative source material into findings, recommendations, and report sections.

It is especially relevant if:

- You are working with interviews, case studies, field notes, programme records, or qualitative documents

- You need findings that can be reviewed against source material

- You are preparing donor reports, evaluation outputs, evidence bases, or recommendation tables

It is less relevant if:

- You only need a short informal summary and source traceability does not matter

Key takeaways

- Quick answer: define the report question, organise the source material, extract evidence into consistent fields, code the material, group codes into themes, test each theme against the evidence, and write findings as clear claims linked to quotes, examples, documents, or records.

- What changes when this is done well: interviews and case studies stop being a pile of notes and become a source-linked evidence base for findings, recommendations, and report-ready sections.

- What to protect: human review, confidentiality, source traceability, finding strength, and the line from evidence to recommendation.

What does report-ready mean?

A report-ready finding is clear enough to place in a draft report and strong enough to survive review. It separates the evidence from the interpretation, shows where the claim came from, and explains why the pattern matters. A finding is not report-ready if it is only a quote, a loose theme, or a note that cannot be traced back to the material.

What report-ready findings need to show

Report-ready does not mean polished wording on top of loose notes. It means the finding can survive review because the source trail, evidence strength, caveats, and implication are visible.

A report-ready finding should answer the report question, show where the evidence came from, make the strength of the finding clear, preserve any limitation that affects interpretation, and point toward a useful implication or recommendation.

This is the working layer behind donor reports, evaluation outputs, synthesis tables, evidence bases, recommendation drafting, and review packs. The final sentence may be short, but the structure underneath it has to be strong enough for someone else to check.

What makes a finding not report-ready yet

A finding is not report-ready if it is only a loose theme, a memorable quote, or a polished sentence without a source trail. The earlier working note may still be useful, but it needs evidence, context, a strength label, caveats, and an implication before it belongs in the report.

In evidence-heavy projects, the failure point is often not analysis. It is the missing link between the source material and the final claim. Interviews, field notes, case studies, and programme records may all contain useful evidence, but the report only becomes dependable when those inputs are organised into a source-linked evidence base before drafting starts.

What good looks like

| Weak setup | Stronger setup |
|---|---|
| Interview notes are copied into a summary | Interview material is extracted, coded, and linked to source references |
| Findings depend on one strong quote | Findings are supported by a pattern across sources or a clearly justified single case |
| Recommendations appear late in the draft | Recommendations follow from findings, implications, and review notes |
| Reviewers have to ask where claims came from | Each claim can be checked against an evidence matrix or source register |

Where this fits in the wider workflow

| Workflow stage | What happens |
|---|---|
| Input | Interviews, transcripts, case studies, field notes, programme records, and source documents |
| Structure | Source IDs, extraction fields, coding rules, evidence matrices, quote logs, and finding categories |
| Review | Quote-per-claim checks, finding strength review, human judgement, and recommendation checks |
| Output | Report-ready findings, synthesis tables, donor report sections, recommendations, and evidence bases |

Start with the report question

Do not start by coding the transcript. Start with the question the report needs to answer.

What report-ready findings are

Report-ready findings are clear statements drawn from analysed evidence. They explain what the evidence shows, where it came from, how strong it is, why it matters, and what the reader should do with it.

A rough note might say: "Several interviewees mentioned delays."

A report-ready finding is stronger: "Project delays were most often linked to unclear approval points between delivery teams and senior reviewers. Interviewees described repeated rework at handover stages, while project records showed approval gaps in three of the four reviewed cases."

The stronger version gives the reader a clear claim, an evidence base, and a likely cause. The CDC's guidance on qualitative data collection and analysis is useful here because it explains why qualitative methods help teams understand perceptions, values, opinions, context, and why something happened.

Define the analysis focus before reading

Before you code a transcript or summarise a case study, write down the question the report needs to answer.

This might be:

- Why are customers dropping out after onboarding?

- What helped participants complete the programme?

- What barriers affected project delivery?

- How did different sites experience the same intervention?

- What lessons should shape the next phase?

This protects you from building findings around the loudest quote, longest interview, or most recent case study. It also gives your analysis a simple test: does this evidence help answer the report question?

Simple analysis frame

| Report question | Evidence needed | Likely sources |
|---|---|---|
| What slowed delivery? | Repeated blockers, timeline gaps, decision delays | Interviews, project records, meeting notes |
| What worked well? | Positive outcomes, success factors, examples | Case studies, stakeholder interviews, outcome records |
| What should change next? | Pain points, risks, improvement ideas | Interviews, workshops, review documents |

Build the source and extraction layer

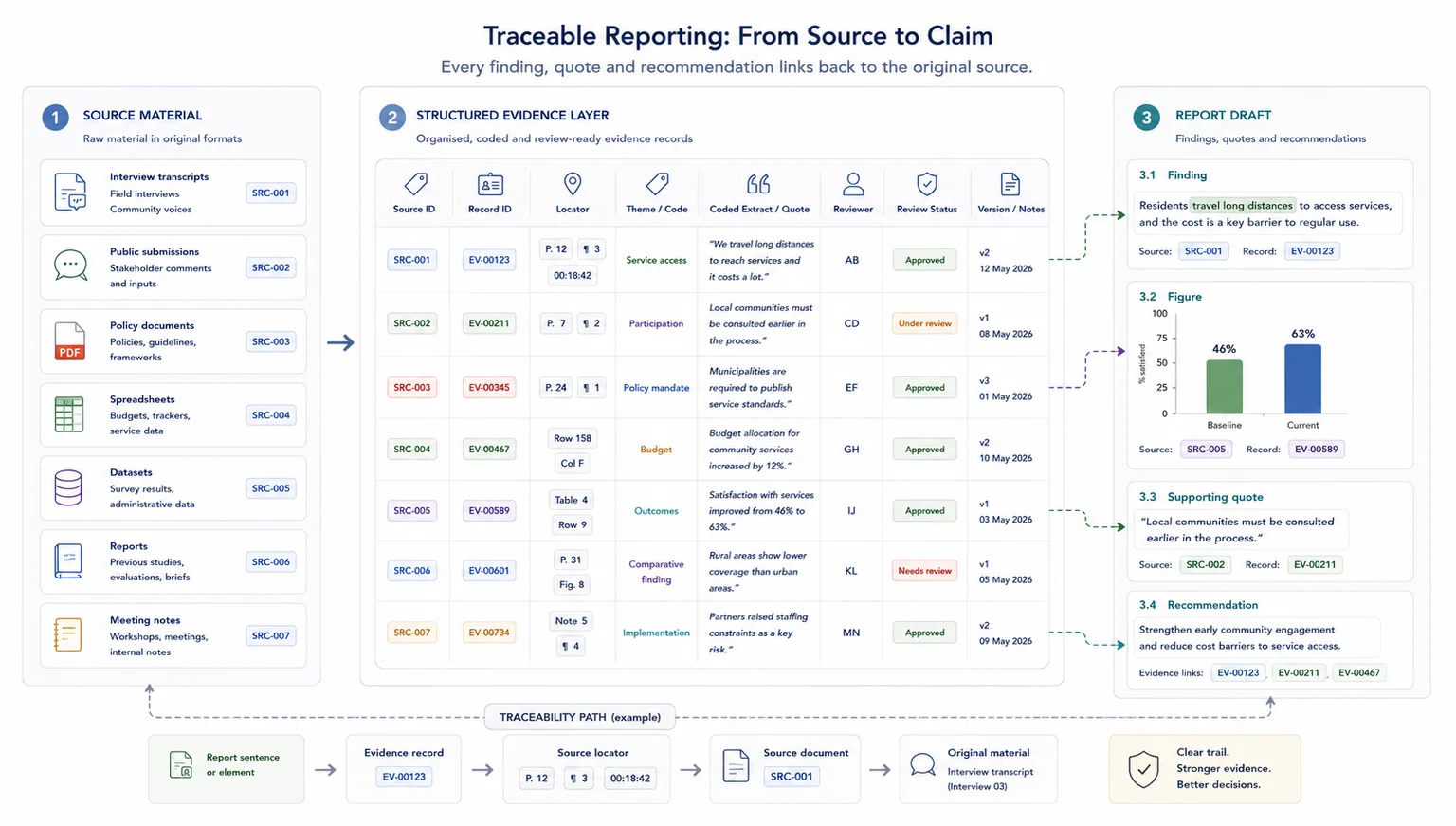

A report-ready finding needs a route back to the interview, case study, document, quote, or record that supports it.

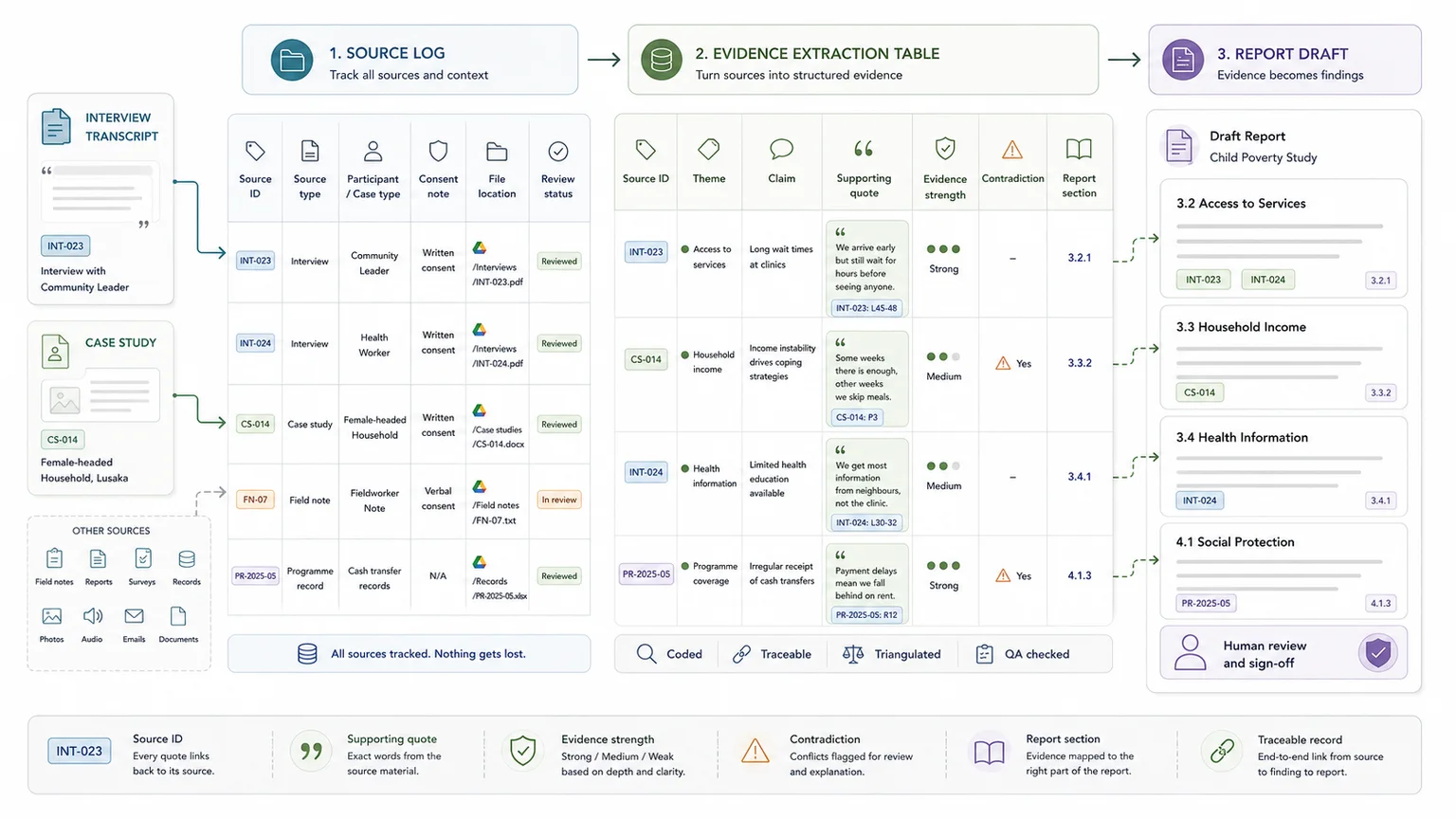

Build a source log before you analyse

Start by creating a source log. Without it, evidence gets scattered across transcripts, folders, notes, spreadsheets, and draft documents. Once that happens, report writers struggle to answer a basic question: where did this finding come from?

The source log does not need to be complicated. It needs to be stable enough for someone else to find the source again.

Source log fields

| Field | Why it matters |
|---|---|
| Source ID | Gives each interview, case study, or document a unique reference |
| Source type | Shows whether the material came from an interview, note, case study, document, or record |
| Participant or case type | Helps compare evidence by role, group, organisation, site, or segment |
| Consent or confidentiality notes | Protects participants and sensitive information |
| File location | Makes the source easy to find later |
| Review status | Shows whether the source has been checked |
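The fields above can be kept as a small typed record so that every source gets a stable ID the moment it is logged. This is a minimal sketch, assuming Python; the ID prefixes (`INT`, `CS`, and so on), the `add_source` helper, and the example file paths are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass

# Hypothetical ID prefixes per source type; swap in your own scheme.
ID_PREFIXES = {
    "interview": "INT",
    "case_study": "CS",
    "field_note": "FN",
    "document": "DOC",
    "record": "REC",
}


@dataclass
class SourceLogEntry:
    source_id: str
    source_type: str
    participant_or_case_type: str
    confidentiality_notes: str
    file_location: str
    review_status: str = "unchecked"


def add_source(log, source_type, participant_or_case_type,
               confidentiality_notes, file_location):
    """Append a source to the log with the next ID for its type."""
    prefix = ID_PREFIXES[source_type]
    same_type = sum(1 for entry in log if entry.source_type == source_type)
    entry = SourceLogEntry(
        source_id=f"{prefix}-{same_type + 1:03d}",
        source_type=source_type,
        participant_or_case_type=participant_or_case_type,
        confidentiality_notes=confidentiality_notes,
        file_location=file_location,
    )
    log.append(entry)
    return entry


source_log = []
add_source(source_log, "interview", "delivery lead",
           "anonymise name and site", "interviews/delivery_lead.docx")
add_source(source_log, "interview", "senior reviewer",
           "no direct quotes without consent", "interviews/senior_reviewer.docx")
add_source(source_log, "case_study", "site-level case",
           "public-safe", "cases/case_c.docx")

for entry in source_log:
    print(entry.source_id, entry.source_type, entry.review_status)
```

Counting existing entries keeps the sketch short, but it would reuse an ID if a source were ever deleted from the log. In a live project it is safer to mark sources as withdrawn than to remove them.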

Build an evidence extraction table before writing

The evidence extraction table is the working layer between raw material and the final report. For each interview, case study, field note, or source document, capture the fields that will help the report later.

This table can become a source tracker, quote bank, synthesis table, coding sheet, data dictionary, and reporting workflow in one place. Without this layer, quotes stay trapped in transcripts and findings become harder to check.

In the UNICEF Zambia evidence workflow, quote-per-claim guardrails and spreadsheet-friendly traceability made it easier to turn case studies, interviews, notes, and source documents into report-ready outputs.

Evidence extraction fields

| Field | Why it matters |
|---|---|
| Source ID | Keeps the finding linked to the original material |
| Participant or case type | Helps compare views by group or role |
| Theme | Groups related evidence |
| Claim or issue | Captures the specific point being made |
| Supporting quote | Keeps the finding close to the source |
| Evidence strength | Shows whether the point is well-supported or thin |
| Contradiction or exception | Stops the report from flattening the evidence |
| Report section | Shows where the evidence may be used |
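One way to keep the extraction table spreadsheet-friendly is to hold each row as a dictionary with the fields above and export it as CSV. The sketch below assumes Python's standard `csv` module; the column names, the choice to treat the contradiction field as optional, and the two example rows are illustrative assumptions rather than a fixed schema.

```python
import csv
import io

# Column names mirror the extraction fields in the table above.
FIELDS = [
    "source_id", "participant_or_case_type", "theme", "claim",
    "supporting_quote", "evidence_strength", "contradiction", "report_section",
]
# Assumption: a row may legitimately have no contradiction recorded.
OPTIONAL = {"contradiction"}


def missing_fields(row):
    """List required fields that are absent or blank in one row."""
    return [f for f in FIELDS
            if f not in OPTIONAL and not str(row.get(f, "")).strip()]


def to_csv(rows):
    """Serialise rows to CSV text that opens cleanly in a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = [
    {
        "source_id": "INT-001",
        "participant_or_case_type": "delivery lead",
        "theme": "Approval delays",
        "claim": "Final sign-off took weeks",
        "supporting_quote": "We waited three weeks for final approval.",
        "evidence_strength": "Moderate",
        "contradiction": "",
        "report_section": "Delivery",
    },
    {
        "source_id": "INT-002",
        "participant_or_case_type": "trainer",
        "theme": "Evidence gap",
        "claim": "Training improved outcomes for everyone",
        "supporting_quote": "",  # left blank on purpose to show the check
        "evidence_strength": "Weak",
        "report_section": "Outcomes",
    },
]

for row in rows:
    print(row["source_id"], "missing:", missing_fields(row))
csv_text = to_csv(rows)
```

Running `missing_fields` before export surfaces rows where a claim has been logged without a quote, which is the point where evidence most often goes missing.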

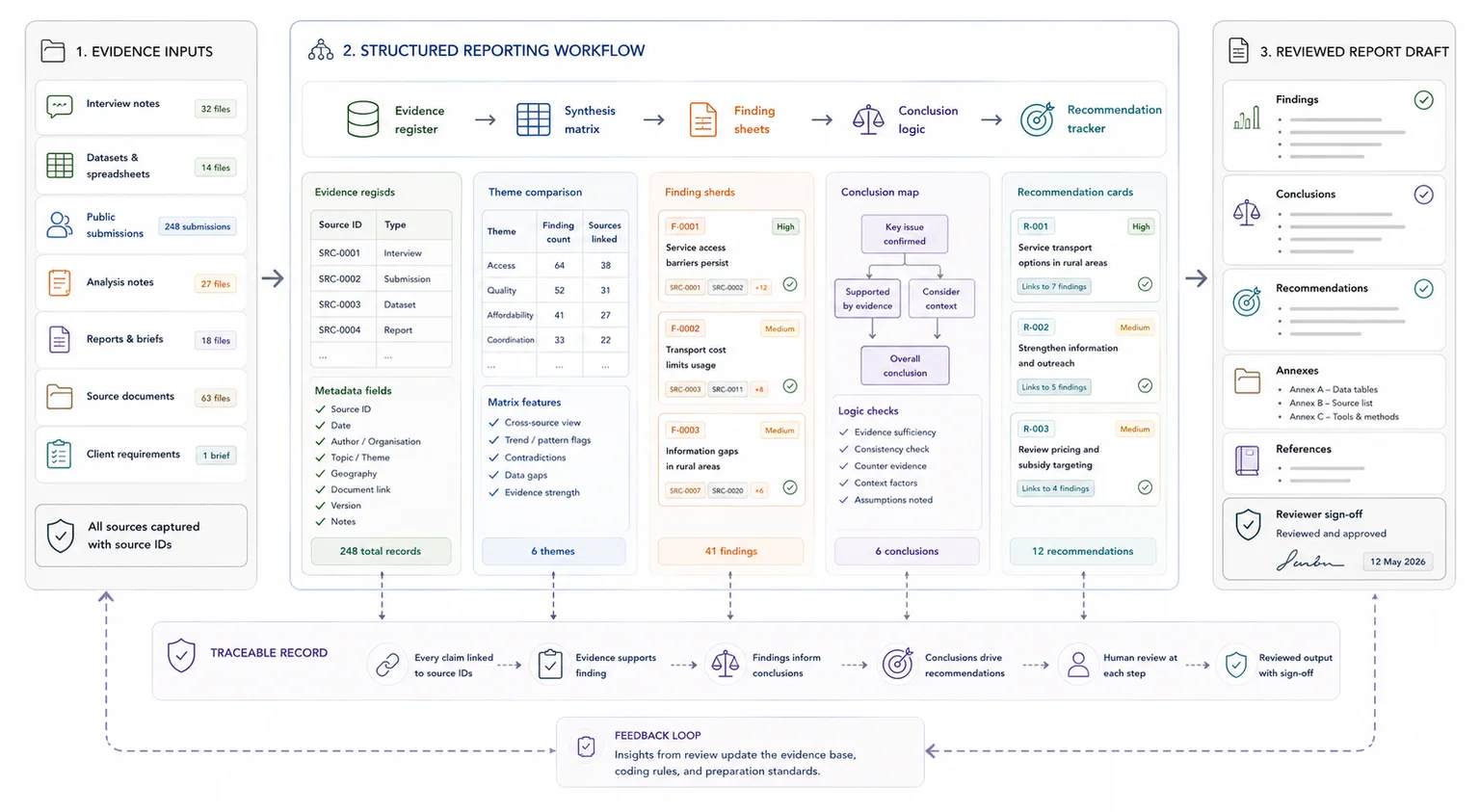

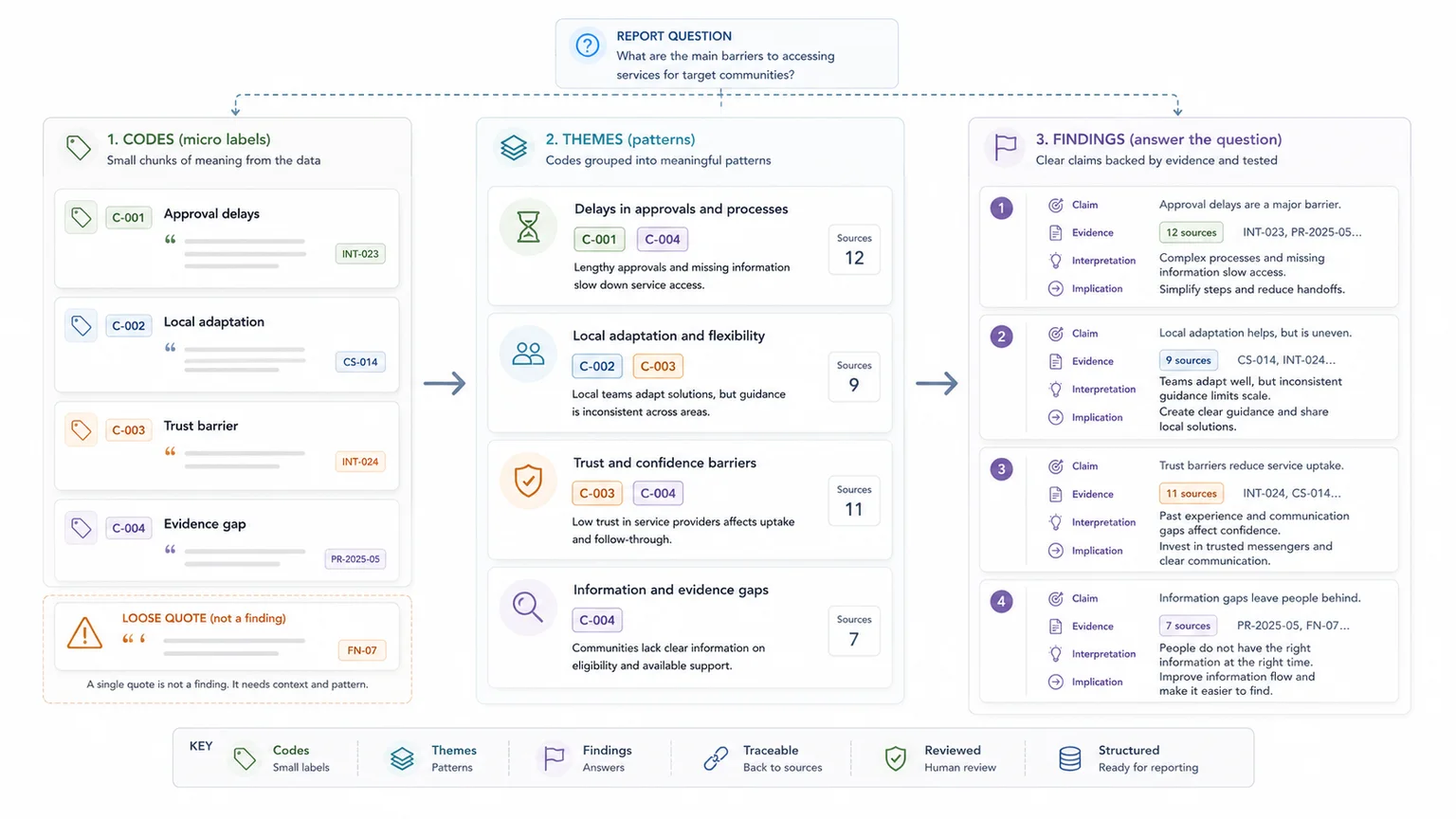

Code, theme, and test the evidence

Coding is useful only if it helps the final report. The aim is to build findings, recommendations, and report sections, not to tag material for its own sake.

Read for meaning before coding

It is tempting to start tagging lines as soon as you open a transcript. A better first pass is to read for overall meaning.

As you read, write short notes about early patterns, questions, contradictions, and possible links to the report brief. Do not try to finalise findings at this stage. The goal is to understand the material before reducing it into codes and themes.

Code around decisions, themes, and evidence

Use both planned and emerging codes. Planned codes come from the brief, research questions, framework, or report structure. Emerging codes come from the material itself.

This balance matters. If you only use the brief, you may miss important findings. If you only follow the data, the final report may drift away from the decision the reader needs to make.

Practical codebook examples

| Code | Meaning | Example evidence |
|---|---|---|
| Approval delays | Slow sign-off or unclear decision ownership | "We waited three weeks for final approval." |
| Local adaptation | Changes made to fit local needs | "We changed the workshop format for rural groups." |
| Trust barrier | Low confidence in the provider, process, or message | "People were unsure who the programme was really for." |
| Evidence gap | Claim that needs checking | "Everyone improved after the training." |
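A codebook is easier to apply consistently when it lives in one place and new codes cannot slip in unnoticed. As a rough sketch, assuming Python and hypothetical snake_case code names, the codebook above could be a dictionary with a helper that refuses any code not yet defined:

```python
# Code names are hypothetical; meanings are taken from the codebook table.
CODEBOOK = {
    "approval_delays": "Slow sign-off or unclear decision ownership",
    "local_adaptation": "Changes made to fit local needs",
    "trust_barrier": "Low confidence in the provider, process, or message",
    "evidence_gap": "Claim that needs checking",
}


def code_excerpt(source_id, excerpt, codes, codebook=CODEBOOK):
    """Attach codes to an excerpt, rejecting codes missing from the codebook."""
    unknown = [code for code in codes if code not in codebook]
    if unknown:
        raise ValueError(f"Codes not in codebook: {unknown}")
    return {"source_id": source_id, "excerpt": excerpt, "codes": list(codes)}


coded = code_excerpt(
    "INT-001",
    "We waited three weeks for final approval.",
    ["approval_delays"],
)
print(coded)
```

Emerging codes are still welcome under this setup. The only change is that an analyst adds the code and its meaning to `CODEBOOK` first, so other analysts see the definition before it is used.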

Use AI-assisted synthesis carefully

AI can help with first-pass summaries, theme suggestions, quote extraction, comparison across cases, draft finding language, and preparation of synthesis tables.

But AI should not be treated as the final analyst. Human review still matters. Someone needs to check whether the quote supports the claim, whether the context has been preserved, whether confidentiality has been protected, and whether the finding overreaches.

The better sequence is: structure the evidence first, use AI to support synthesis, then apply human review before anything becomes report-ready.
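One human check that is easy to partly automate is confirming that an AI-suggested quote actually appears in the transcript it is attributed to. The sketch below assumes Python and a hypothetical `transcripts` dictionary keyed by source ID. It only tests whether the wording exists in the source; whether the quote supports the claim, and whether context and confidentiality are preserved, still needs a reviewer.

```python
import re


def normalise(text):
    """Lowercase, straighten curly quotes, and collapse whitespace."""
    for curly, straight in (("\u2018", "'"), ("\u2019", "'"),
                            ("\u201c", '"'), ("\u201d", '"')):
        text = text.replace(curly, straight)
    return re.sub(r"\s+", " ", text).strip().lower()


def quote_in_source(quote, transcript):
    """True if the quote appears word for word in the transcript."""
    return normalise(quote) in normalise(transcript)


def flag_for_review(suggested_quotes, transcripts):
    """Return suggested quotes that cannot be found in their source."""
    flagged = []
    for item in suggested_quotes:
        transcript = transcripts.get(item["source_id"], "")
        if not quote_in_source(item["quote"], transcript):
            flagged.append(item)
    return flagged


# Hypothetical transcript text and AI suggestions for illustration.
transcripts = {
    "INT-001": "Honestly, we waited three weeks\nfor final approval. "
               "It held everything up.",
}
suggested = [
    {"source_id": "INT-001", "quote": "We waited three weeks for final approval."},
    {"source_id": "INT-001", "quote": "Approval took over a month."},
]

for item in flag_for_review(suggested, transcripts):
    print("Check manually:", item["quote"])
```

A flagged quote is not necessarily invented. It may be a paraphrase or a lightly edited excerpt, which is still worth catching before it is presented as a direct quote.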

Group codes into themes that answer the report brief

A code is a label. A theme is a meaningful pattern. A good theme should pass three checks: it should answer the report question, have enough evidence behind it, and have a clear implication for the reader.

This is where reports often become weak. They turn every topic into a finding without checking whether the evidence supports it. A theme is only useful if it helps the reader understand what happened, why it happened, or what should happen next.

From codes to themes

| Codes | Theme |
|---|---|
| Approval delays, unclear ownership, late-stage rework | Decision-making bottlenecks slowed delivery more than technical capacity |
| Peer support, follow-up calls, informal check-ins | Ongoing contact helped participants stay engaged after the first session |
| Local examples, translated materials, site-level adaptation | Locally relevant material made the programme easier to apply |
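To test whether a theme has enough evidence behind it, it helps to count how many distinct sources feed each theme rather than how many excerpts do. A minimal sketch, assuming Python, hypothetical snake_case code names, and coded excerpts stored as dictionaries with `source_id` and `codes` keys:

```python
from collections import defaultdict

# Theme wording comes from the table above; code names are hypothetical.
THEME_CODES = {
    "Decision-making bottlenecks slowed delivery more than technical capacity":
        {"approval_delays", "unclear_ownership", "late_stage_rework"},
    "Ongoing contact helped participants stay engaged after the first session":
        {"peer_support", "follow_up_calls", "informal_check_ins"},
    "Locally relevant material made the programme easier to apply":
        {"local_examples", "translated_materials", "site_level_adaptation"},
}


def sources_per_theme(coded_excerpts, theme_codes=THEME_CODES):
    """Map each theme to the sorted list of distinct sources supporting it."""
    sources = defaultdict(set)
    for excerpt in coded_excerpts:
        for theme, codes in theme_codes.items():
            if codes & set(excerpt["codes"]):
                sources[theme].add(excerpt["source_id"])
    return {theme: sorted(ids) for theme, ids in sources.items()}


# Hypothetical coded excerpts for illustration.
coded_excerpts = [
    {"source_id": "INT-001", "codes": ["approval_delays"]},
    {"source_id": "INT-003", "codes": ["late_stage_rework", "peer_support"]},
    {"source_id": "INT-001", "codes": ["unclear_ownership"]},
]

theme_sources = sources_per_theme(coded_excerpts)
for theme, ids in theme_sources.items():
    print(len(ids), "source(s):", theme)
```

Counting sources instead of excerpts stops one long interview from making a theme look better supported than it is. A theme that never appears in the result has no coded evidence yet.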

Turn themes into findings and recommendations

The difference between a summary and a finding is usually specificity. A strong finding explains the issue, shows where it appeared, and points toward a decision.

Use a quote-per-claim rule

A simple way to improve finding quality is to ask: what is the source evidence behind this claim?

For each draft finding, keep at least one supporting quote, excerpt, case example, document reference, or record link nearby. This does not mean every sentence in the final report needs a quote. It means the analysis file should make the evidence trail visible before the finding becomes final.

A useful rule is simple: no finding without a source, no recommendation without a finding, and no claim without something to check.
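That rule can be run as a quick check over the analysis file before drafting. The following is a sketch only, assuming Python and hypothetical record shapes: findings carry an `id` and a list of `evidence` references, and recommendations carry an `id` and a `finding_id`.

```python
def trace_gaps(findings, recommendations):
    """List findings without evidence and recommendations without a finding."""
    problems = []
    finding_ids = {finding["id"] for finding in findings}
    for finding in findings:
        if not finding.get("evidence"):
            problems.append(f"{finding['id']}: finding has no source evidence")
    for rec in recommendations:
        if rec.get("finding_id") not in finding_ids:
            problems.append(f"{rec['id']}: recommendation has no linked finding")
    return problems


# Hypothetical records for illustration.
findings = [
    {"id": "F1", "evidence": ["INT-001", "REC-002"]},
    {"id": "F2", "evidence": []},
]
recommendations = [
    {"id": "R1", "finding_id": "F1"},
    {"id": "R2", "finding_id": None},
]

for problem in trace_gaps(findings, recommendations):
    print(problem)
```

An empty result means every finding points at something checkable. It does not mean the evidence is strong, only that a reviewer has somewhere to look.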

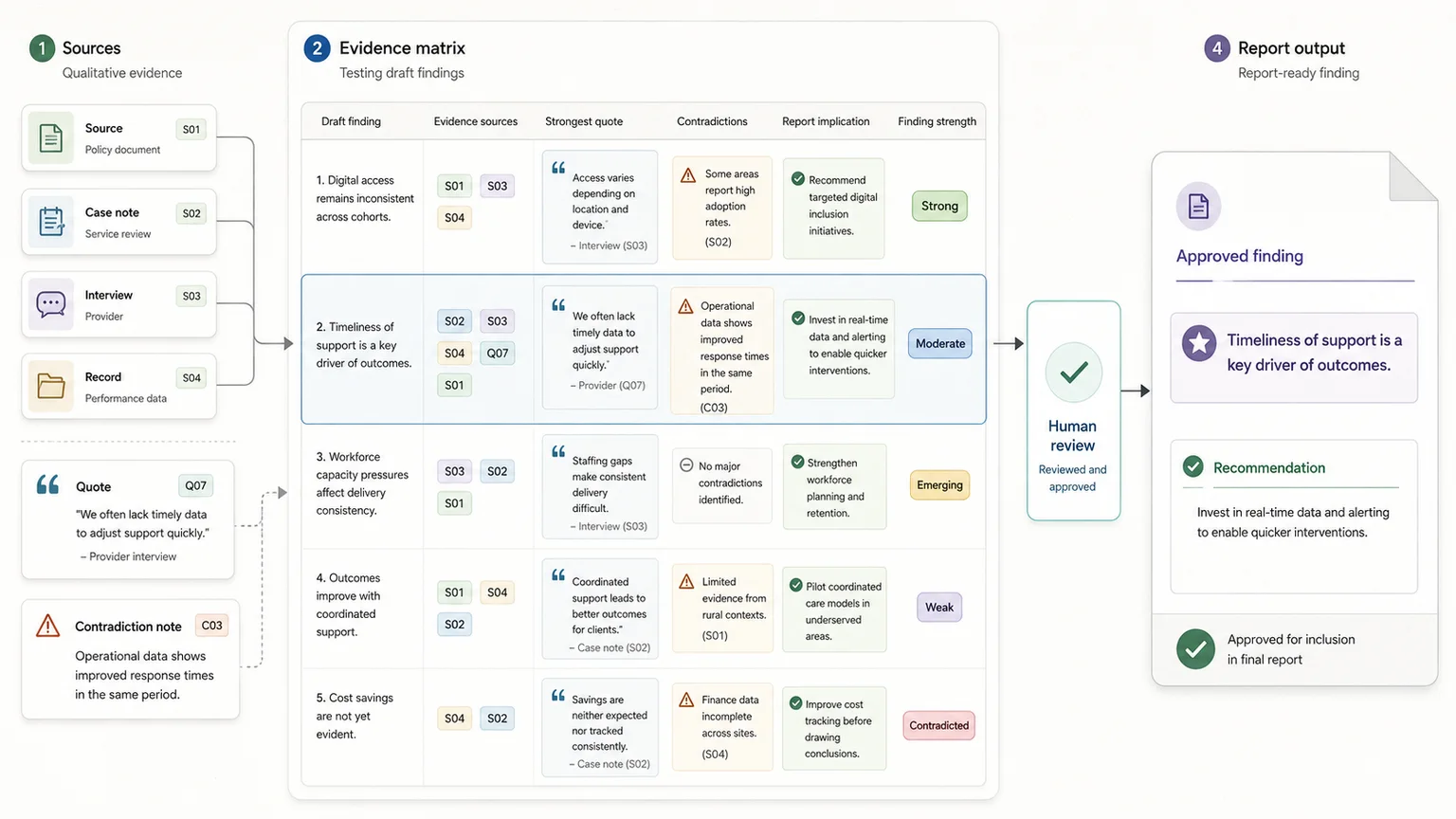

Build an evidence matrix before writing the report

An evidence matrix helps you test each finding before it reaches the report. It also makes review easier because a colleague, client, evaluator, or programme lead can challenge the interpretation without digging through every transcript.

Evidence matrix example

| Draft finding | Evidence sources | Strongest quote or example | Contradictions | Report implication |
|---|---|---|---|---|
| Onboarding worked best when staff had local examples | 6 interviews, 2 case studies, workshop notes | Participant quote from Case C | Case A succeeded without local examples | Add a local scenario bank to the next phase |
| Approval delays slowed delivery | 5 staff interviews, project timeline, meeting notes | Delivery lead quote | Senior team saw delays as quality control | Clarify approval stages and deadlines |
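If the matrix is kept as structured rows, it can be rendered for a review pack and checked for gaps in the same step. This sketch assumes Python and rows stored as dictionaries keyed by the column headings above; the `unchecked_contradictions` helper is a hypothetical convenience, not part of any standard tool.

```python
COLUMNS = [
    "Draft finding", "Evidence sources", "Strongest quote or example",
    "Contradictions", "Report implication",
]


def matrix_to_markdown(rows):
    """Render matrix rows as a pipe table for a review pack."""
    lines = ["| " + " | ".join(COLUMNS) + " |", "|" + "---|" * len(COLUMNS)]
    for row in rows:
        # Escape pipes so a quote containing "|" cannot break the table.
        cells = [str(row.get(col, "")).replace("|", "\\|") for col in COLUMNS]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


def unchecked_contradictions(rows):
    """Return draft findings with nothing recorded under Contradictions."""
    return [row["Draft finding"] for row in rows
            if not str(row.get("Contradictions", "")).strip()]


matrix = [
    {
        "Draft finding": "Onboarding worked best when staff had local examples",
        "Evidence sources": "6 interviews, 2 case studies, workshop notes",
        "Strongest quote or example": "Participant quote from Case C",
        "Contradictions": "Case A succeeded without local examples",
        "Report implication": "Add a local scenario bank to the next phase",
    },
    {
        "Draft finding": "Approval delays slowed delivery",
        "Evidence sources": "5 staff interviews, project timeline, meeting notes",
        "Strongest quote or example": "Delivery lead quote",
        "Contradictions": "Senior team saw delays as quality control",
        "Report implication": "Clarify approval stages and deadlines",
    },
]

markdown = matrix_to_markdown(matrix)
print(markdown)
print("No contradiction check recorded:", unchecked_contradictions(matrix))
```

A blank Contradictions cell is ambiguous: it can mean none were found or that nobody looked. Writing "none found" explicitly keeps the two cases apart.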

Check how strong each finding is

Not every finding has the same weight. Before writing, label each draft finding by strength. This helps the report avoid overclaiming and helps reviewers see which findings are ready and which need more checking.

Finding strength labels

| Strength | Meaning |
|---|---|
| Strong | Supported by several sources, with clear examples and little contradiction |
| Moderate | Supported by more than one source, but with some gaps or variation |
| Emerging | Appears in the evidence, but needs more checking |
| Weak | Based on one comment, unclear evidence, or unsupported interpretation |
| Contradicted | Some evidence supports it, but other evidence challenges it |
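A first-pass label can be suggested from simple counts, as long as a reviewer makes the final call. The sketch below assumes Python, and every threshold in it is an assumption made for illustration: the guide does not define how many sources count as "several", so the cut-off of four and the rule for "Contradicted" should be replaced with whatever the team agrees.

```python
def suggest_strength(supporting_sources, contradicting_sources=0,
                     has_clear_example=True):
    """Suggest a starting strength label from simple counts.

    All thresholds are illustrative assumptions, not fixed rules.
    """
    if supporting_sources == 0:
        return "Weak"  # interpretation with nothing behind it
    if contradicting_sources >= supporting_sources:
        return "Contradicted"  # challenged at least as often as supported
    if supporting_sources == 1:
        return "Weak"  # rests on a single comment or source
    if (supporting_sources >= 4 and contradicting_sources == 0
            and has_clear_example):
        return "Strong"
    if has_clear_example:
        return "Moderate"  # more than one source, with gaps or variation
    return "Emerging"  # present in the evidence, needs more checking


print(suggest_strength(5, 0, True))
print(suggest_strength(3, 1, True))
print(suggest_strength(2, 0, False))
print(suggest_strength(1, 0, True))
print(suggest_strength(2, 2, True))
```

The suggested label is best treated as a prompt for discussion. A single well-documented case can justify a stronger label than the counts imply, and five sources repeating the same rumour can justify a weaker one.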

Write findings as claims, evidence, interpretation, and implication

A report-ready finding should usually follow this pattern: finding statement, evidence, interpretation, implication.

This format keeps the writing clear. It also stops the report from becoming a quote dump. Quotes should support the finding. They should not carry the full explanation.
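The four-part pattern can be held as a small record so that a finding cannot be drafted with a part missing. This is a sketch under assumptions: it uses Python, the `Finding` class and its field names are hypothetical, and the source IDs are invented. The example wording is taken from the approval-delay example used elsewhere in this guide.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    statement: str
    evidence: str
    interpretation: str
    implication: str
    source_ids: tuple = ()

    def to_paragraph(self):
        """Join the four parts into one paragraph, refusing blanks."""
        parts = {
            "statement": self.statement,
            "evidence": self.evidence,
            "interpretation": self.interpretation,
            "implication": self.implication,
        }
        missing = [name for name, text in parts.items() if not text.strip()]
        if missing:
            raise ValueError(f"Finding is missing: {missing}")
        paragraph = " ".join(text.strip() for text in parts.values())
        if self.source_ids:
            paragraph += " [Sources: " + ", ".join(self.source_ids) + "]"
        return paragraph


finding = Finding(
    statement=("Project delays were most often linked to unclear approval "
               "points between delivery teams and senior reviewers."),
    evidence=("Interviewees described repeated rework at handover stages, "
              "while project records showed approval gaps in three of the "
              "four reviewed cases."),
    interpretation=("Decision-making bottlenecks slowed delivery more than "
                    "technical capacity."),
    implication="Approval stages and deadlines should be clarified.",
    source_ids=("INT-001", "REC-002"),  # hypothetical source IDs
)

print(finding.to_paragraph())
```

The bracketed source list is meant for the analysis file and review drafts. It can be stripped or converted to footnotes once the finding moves into the final report.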

For formal qualitative reporting, the Standards for Reporting Qualitative Research and the COREQ checklist for interviews and focus groups are useful references because they keep transparency, links to empirical data, synthesis, interpretation, limitations, and trustworthiness visible.

Prepare report-ready outputs

The final output should be more than a pile of coded notes. A strong synthesis workflow produces usable artefacts for writing, review, and decision-making.

Useful outputs from the synthesis workflow

Evidence-heavy reporting needs a working layer between raw material and writing. In UNICEF-related evidence workflows, this can mean turning case studies, interviews, notes, and documents into structured evidence tables, coded excerpts, quote banks, findings, recommendations, and report-ready sections.

That working layer makes the report easier to write, easier to review, and easier to defend.

Report-ready outputs

| Output | Purpose |
|---|---|
| Source tracker | Shows what material was reviewed |
| Evidence extraction table | Captures claims, quotes, themes, and source links |
| Codebook | Keeps coding consistent |
| Quote bank | Stores usable excerpts by theme or section |
| Evidence matrix | Tests whether each finding is supported |
| Recommendation matrix | Links findings to actions, owners, priorities, and review notes |
| Report section notes | Turns synthesis into draft-ready content |

Final checklist before findings go into the report

Before sending findings for review, check that each one passes these tests:

- Does it answer the report question?

- Can it be traced back to source evidence?

- Is there at least one quote, excerpt, case example, or document reference behind it?

- Has it been checked for contradictions or exceptions?

- Is the finding strength clear?

- Does the recommendation follow from the finding?

- Is the wording clear enough for a non-research reader?

- Has participant confidentiality been protected?

- Does the report avoid claiming more than the evidence can support?

If a finding cannot pass these checks, keep it in the analysis file until it has been reviewed.

When a simple setup is enough

- The project has a small number of interviews

- The output is an internal learning note rather than a formal report

- Only one reviewer needs to check the evidence trail

When you need a more structured system

- Findings need to withstand client, donor, policy, or public review

- Multiple analysts are extracting or coding the same material

- Recommendations must be linked back to clear evidence

Common mistakes to avoid

Treating strong quotes as findings

A quote can support a finding, but it is not the finding on its own. The better approach is to explain the pattern, then use the quote as evidence.

Losing the link between notes and claims

When notes are copied into draft language too early, the source trail becomes hard to rebuild. Keep stable source references from extraction through to drafting.

Writing recommendations before the evidence is checked

Recommendations should come after evidence review. Otherwise the report can sound confident while resting on weak or incomplete material.

Copyable report-ready finding checklist

Use this before moving a finding into a report draft.

| Check | Done |
|---|---|
| The finding rests on more than a single weak note or unsupported quote | |
| The source material is traceable | |
| The finding states what changed, matters, or repeats across cases | |
| The finding separates evidence from interpretation | |
| The implication is clear | |
| The recommendation follows from the evidence | |

Related resources

Use these next if you need to move from the article into a related workflow, calculator, case study, or service.

- Research Data Synthesis Support - use this if you need help turning qualitative material into structured findings

- Report Writing - use this if findings need to become a clear report

- Data Synthesis - use this if the source material includes mixed documents, datasets, and notes

- UNICEF child poverty study in Zambia - use this to see evidence synthesis in a public-safe case study

- UNICEF Palestine disability situation analysis - use this to see how evidence is organised for reporting

- How to stop losing source traceability - use this if review comments keep asking where claims came from

FAQ

How do you turn interview data into findings?

Start by organising the interview material into a source log and evidence extraction table. Then code the material, group related codes into themes, test each theme against the report question, and write findings as clear claims supported by evidence.

How do you analyse case study evidence for a report?

Define each case clearly, extract evidence into consistent fields, compare patterns across cases, and look for similarities, differences, contradictions, and useful examples. Case study evidence is strongest when it shows how a theme played out in a real setting.

What is a qualitative evidence matrix?

A qualitative evidence matrix is a table that links draft findings to sources, quotes, examples, contradictions, implications, and report sections. It helps keep findings traceable and makes review easier.

What is the difference between a theme and a finding?

A theme is a pattern in the evidence. A finding is a clear statement about what that pattern means for the report question. For example, "approval delays" is a theme. "Unclear approval points between delivery teams and senior reviewers caused avoidable rework" is a finding.

Can AI analyse interviews and case studies?

AI can help with summaries, coding suggestions, quote extraction, comparison tables, and draft wording. Human review is still needed to check meaning, context, confidentiality, evidence strength, and whether the finding overreaches.

Need help turning qualitative evidence into report-ready findings?

Turning interviews and case studies into report-ready findings is not just a writing task. It is an evidence workflow.

You need to organise the source material, extract useful evidence, code it consistently, group it into themes, test each finding, check for contradictions, and connect the results to recommendations.

If you need help turning interviews, case studies, field notes, source documents, or messy qualitative material into a structured evidence base, I can help build the workflow, prepare the synthesis tables, and turn the findings into clear outputs for review.
