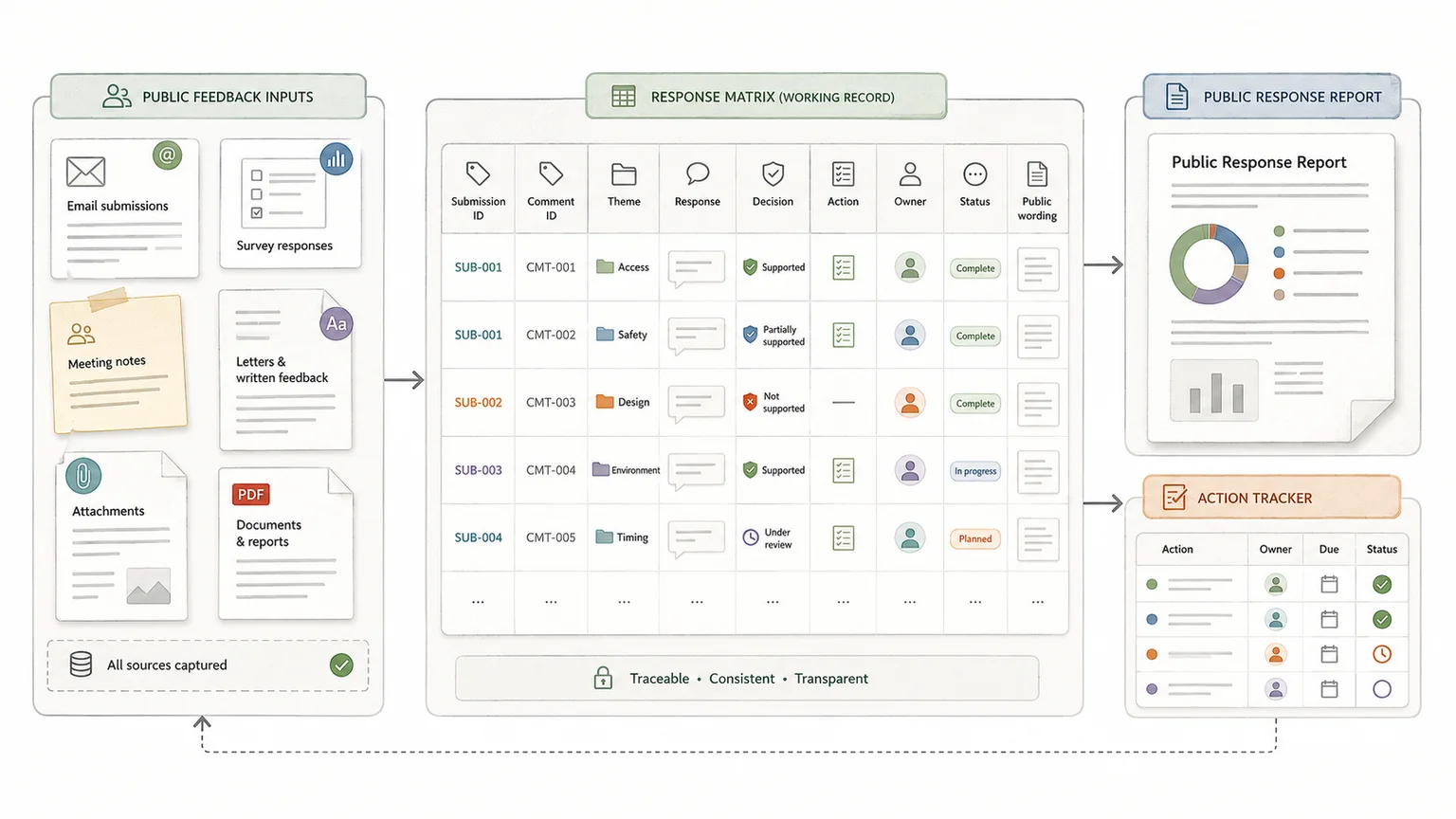

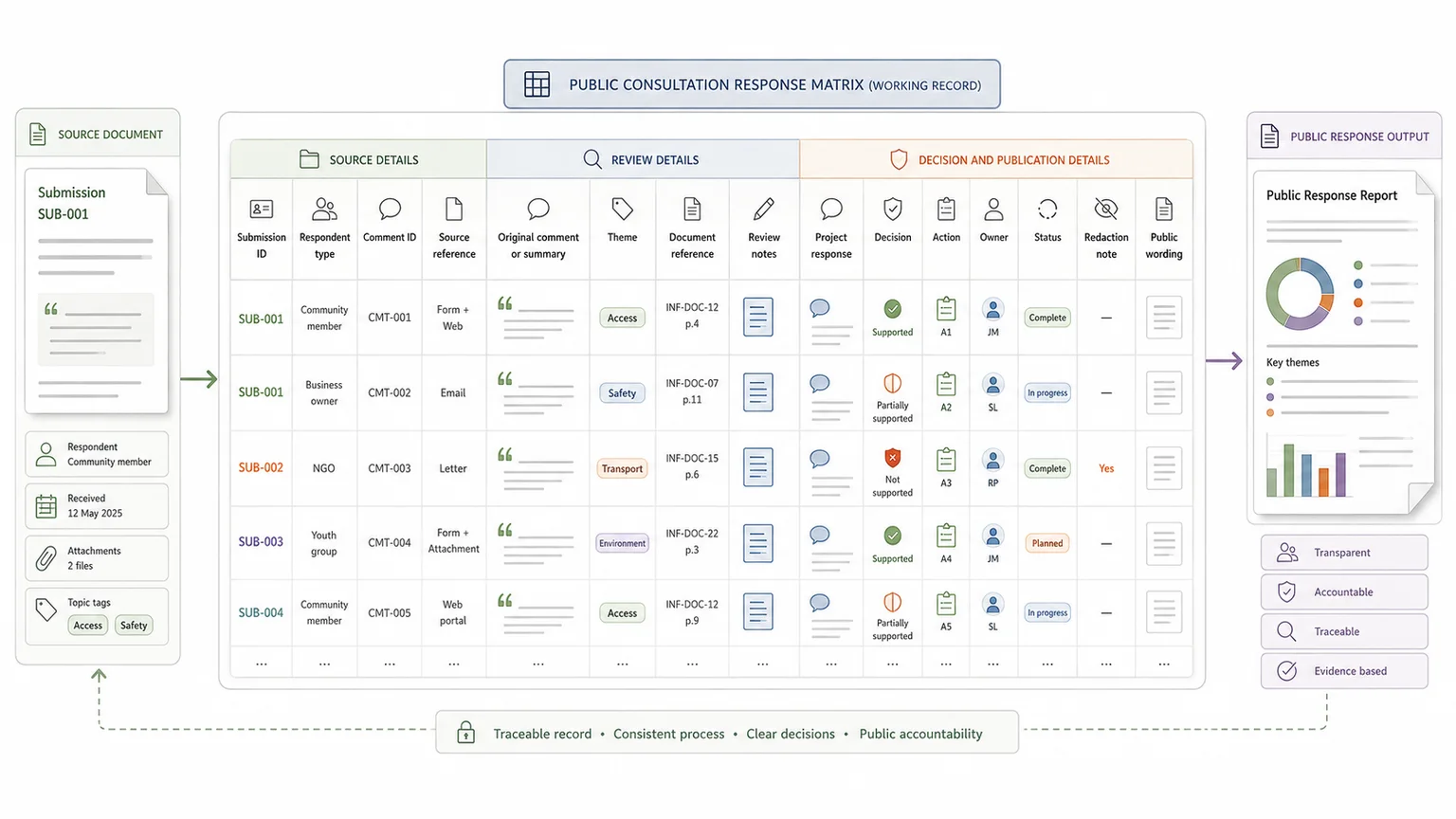

A public consultation response matrix should show what people said, how their feedback was reviewed, what decision was made, and what changed as a result.

It is not just a spreadsheet of comments. It is the working record that links consultation feedback to evidence, decisions, actions, owners, and public reporting.

That matters because public bodies are often expected to show how consultation responses informed their final decision. The UK Government's consultation principles say a government response should explain the responses received, show how they informed the policy, and state how many responses were received. They also say responses should normally be published within 12 weeks of the consultation, or an explanation should be given if that is not possible.

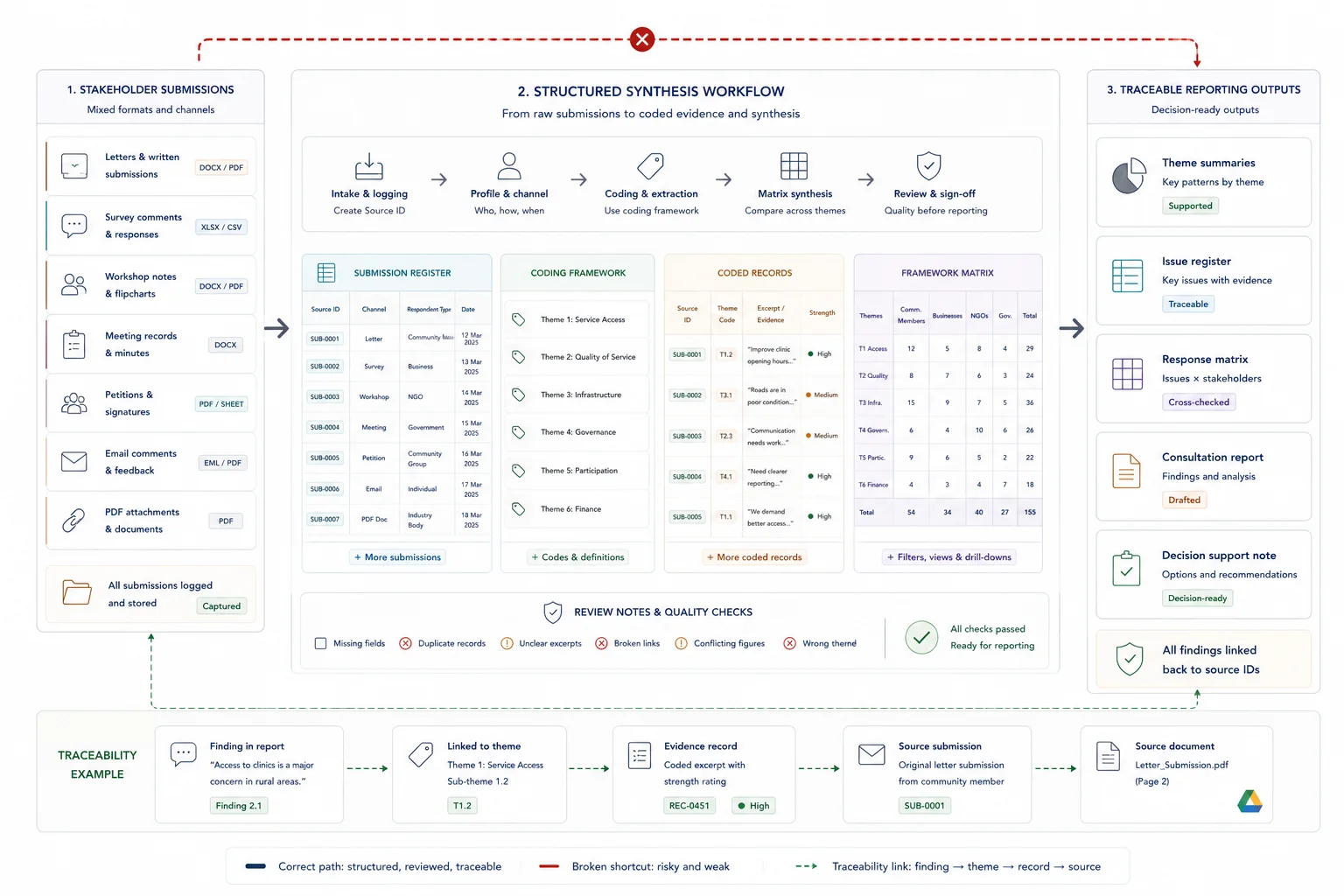

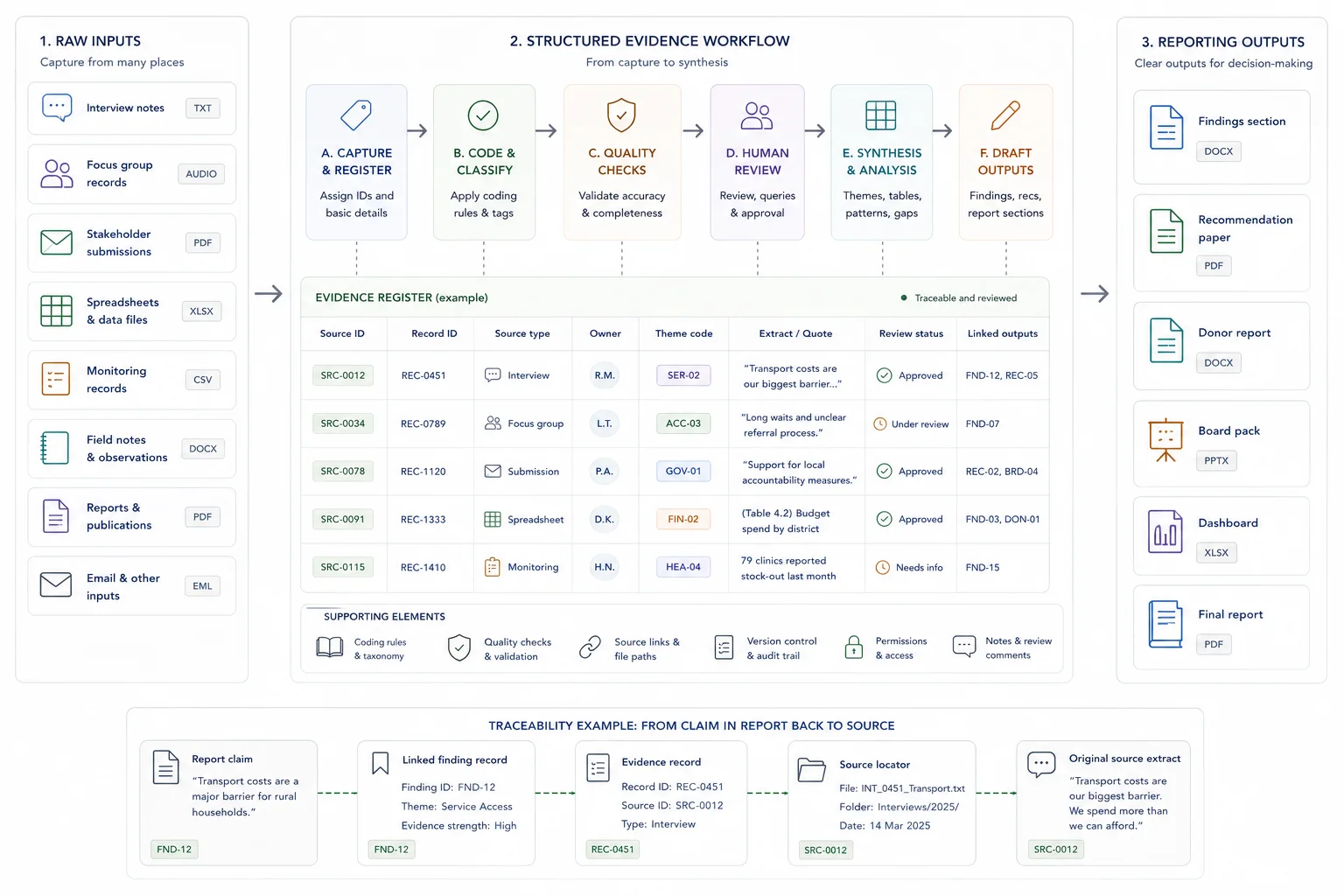

For a small consultation, a well-structured spreadsheet may be enough. For a larger consultation, the response matrix should be part of a wider evidence workflow, with source tracking, coding, review logs, redaction notes, decision records, and report-ready wording.

Quick answer

A public consultation response matrix is a structured table that links public feedback to responses, decisions, actions, owners, and publication notes. It should show what people said, how the issue was reviewed, what changed, and why. For larger consultations, it should also connect to the original source material, coding framework, review status, redaction notes, and final public-facing report.

Who this guide is for

This guide is for policy teams, public-sector project teams, consultation leads, researchers, and consultants who need to turn public feedback into a defensible response record.

It is especially relevant if:

- You have high submission volume or multiple respondent groups

- You need to publish a response that explains how feedback was considered

- You are using AI-assisted analysis but still need source checks and human review

It is less relevant if:

- You only need a short internal summary of a small, informal feedback process

Key takeaways

- Quick answer: a public consultation response matrix should include submission IDs, respondent type, comment references, original comments or summaries, themes, document references, project responses, decisions, actions, owners, statuses, and publication notes.

- The strongest matrices show the route from raw input to review, response, decision, action, and reporting.

- For larger consultations, the matrix should sit inside a controlled evidence workflow rather than operating as a standalone spreadsheet.

What a response matrix should do

A response matrix should connect raw public input to review, response, decision, action, and reporting. If you are dealing with high volume, use the Submission Analysis Capacity Calculator to estimate whether your current setup can handle the workload before changing the process.

What good looks like

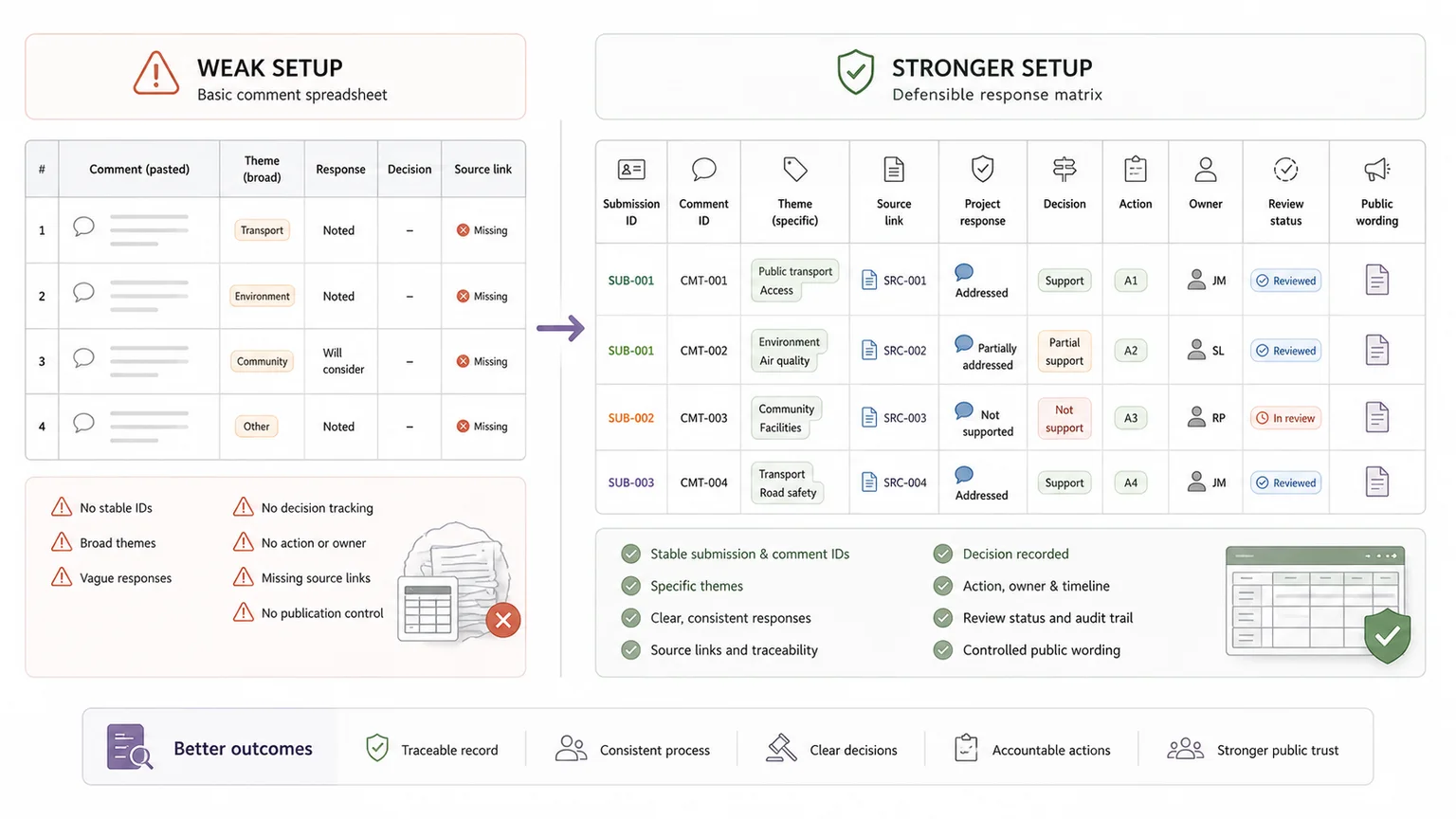

| Weak setup | Stronger setup |
|---|---|
| Comments are pasted into a spreadsheet | Each comment has a submission ID, comment ID, respondent type, theme, source reference, and review status |
| Responses say "noted" without a reason | Responses explain whether the issue changed a decision, led to an action, or was not adopted |
| Internal notes and public wording are mixed | Internal review notes, redaction notes, and public-facing wording are separated |
| AI summaries are accepted as final | AI-assisted summaries are checked against source material before decisions are recorded |

Where this fits in the wider workflow

| Workflow stage | What happens |
|---|---|
| Input | Public submissions, survey responses, meeting notes, emails, attachments, and consultation records |
| Structure | Submission IDs, respondent groups, themes, coding rules, source links, decision fields, and redaction notes |
| Review | Theme review, response drafting, source checking, legal or policy review, and approval |
| Output | Response matrix, public response report, decision log, action tracker, and evidence base |

The core questions your matrix should answer

After the quick definition, the next question is practical: what should the matrix help reviewers and decision-makers answer? A good matrix should make the route from public input to response, decision, action, and publication visible.

The four questions it should answer

A response matrix is often used in policy development, planning, infrastructure, regulation, service design, public engagement, and donor-funded research. The format can vary, but the purpose is usually the same: create a clear audit trail from consultation input to final decision.

Core matrix questions

| Question | What the matrix records |
|---|---|
| What did people say? | Original comments, submission extracts, or accurate summaries |
| Who raised the issue? | Respondent type, organisation, stakeholder group, or location |
| How was the issue reviewed? | Theme, evidence reference, technical input, policy review, or coding notes |
| What happened next? | Response, decision, action, owner, status, and public wording |

Why a response matrix matters

A weak consultation process often breaks down after responses are received.

Submissions arrive by email, survey, meeting notes, attachments, forms, and letters. Comments are copied into spreadsheets. Technical teams review separate documents. Decisions sit in meeting notes. Redaction happens late. The final report then has to be rebuilt from scattered evidence.

A response matrix reduces that risk by giving the team one structured place to track source material, themes, responses, decisions, actions, owners, and publication wording.

The Texas Department of Transportation's public comment response matrix guidance is a useful example of how formal this process can become. A response matrix should not make every comment look the same. It should show that the project team understood the issue and gave it proper consideration.

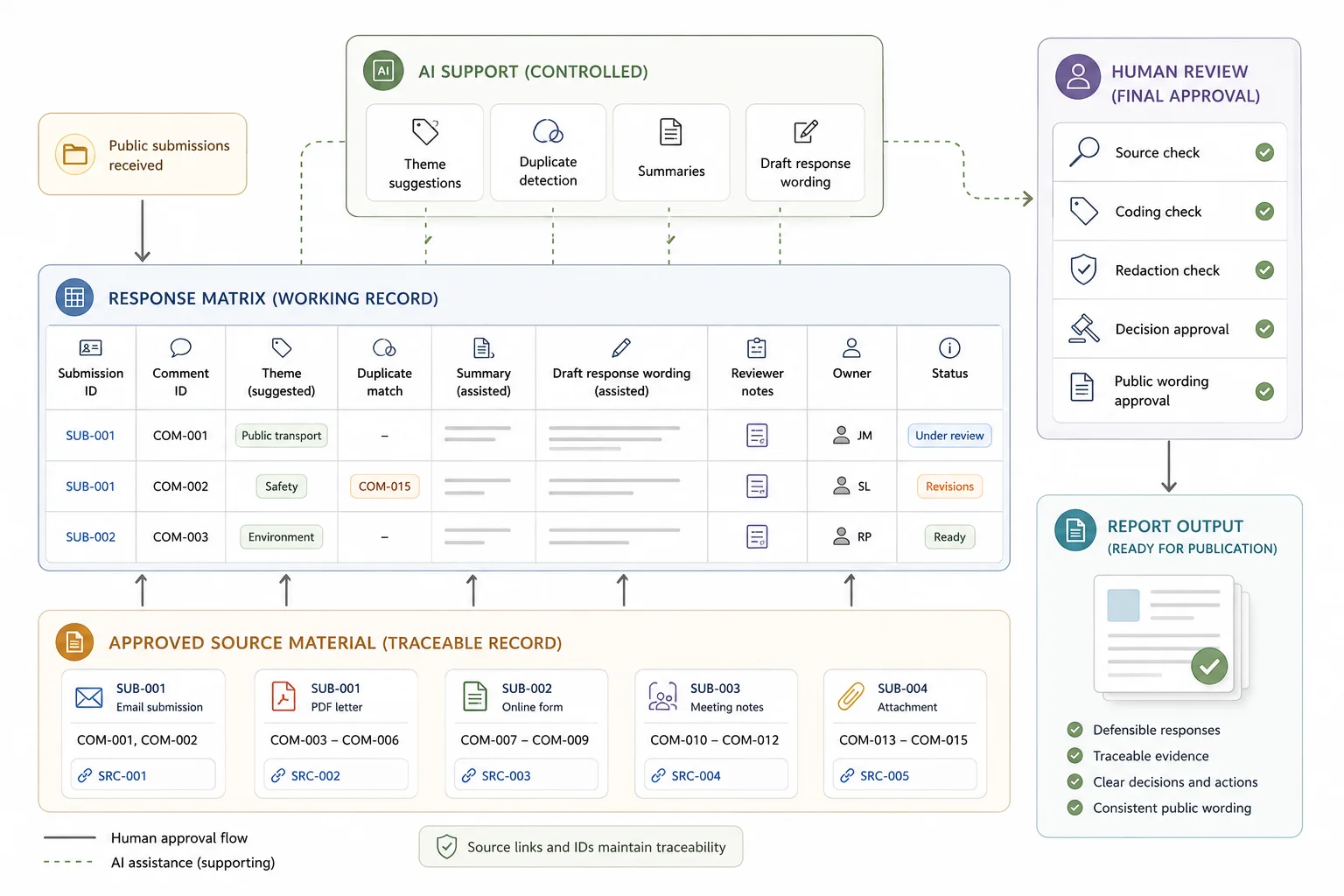

A response matrix is part of an evidence workflow

A response matrix is only useful if the workflow behind it works. If comments are saved in different folders, themes are applied inconsistently, reviewers use different wording, and actions are tracked in separate documents, the final matrix becomes hard to trust.

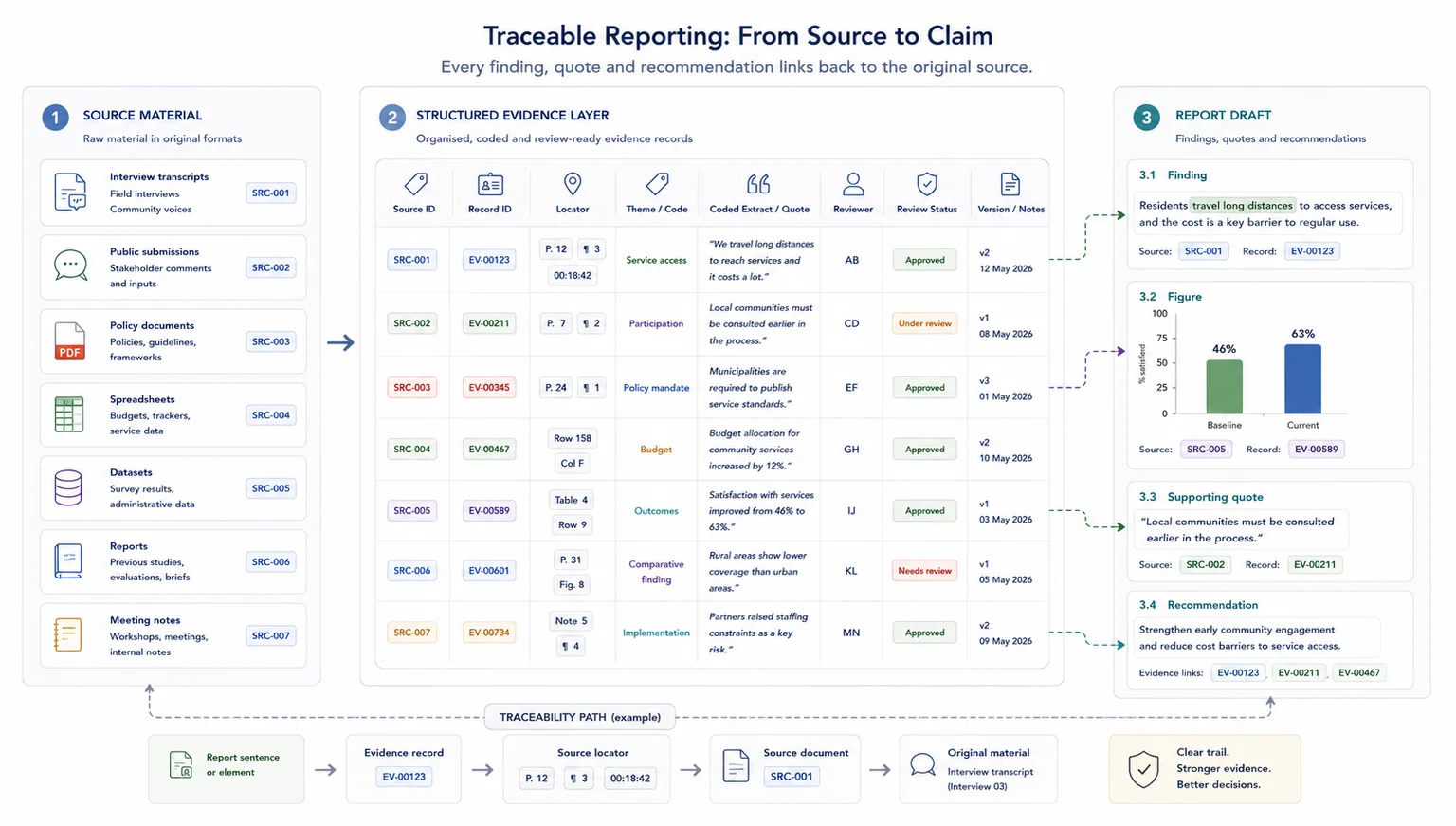

A stronger setup links each original submission to its comment reference, theme, response, decision, action, owner, status, and public wording. This helps policy teams, consultants, reviewers, and decision-makers see how public feedback moved from raw input to analysis, response, and action.

For larger consultations, the matrix should sit alongside a source tracker, coding framework, data dictionary, review log, decision log, redaction tracker, and report framework. That is where the response matrix connects with wider evidence workflows for reporting, Data Synthesis, and the Public Submission Analysis System.

Core fields to include

The matrix should be structured enough to support analysis, review, action, and publication.

Quick field list

The strongest matrices do not only summarise consultation feedback. They show the route from raw input to review, response, decision, and action.

Public consultation response matrix fields: project name, consultation period, submission ID, respondent type, comment ID, original comment, summary, theme, document reference, response, decision, action, owner, status, redaction note, public wording, and source link.

If the immediate problem is volume, you can estimate whether your current setup can handle the submission volume before redesigning the matrix.

What a public consultation response matrix should include

| Field | Purpose |
|---|---|
| Submission ID | Links each comment back to the original submission |
| Respondent type | Shows whether feedback came from residents, organisations, statutory bodies, businesses, or other groups |
| Comment reference | Gives each issue a stable ID for tracking |
| Original comment or summary | Records what was said |
| Theme or issue category | Groups related feedback |
| Document or policy reference | Links the comment to the relevant section, clause, plan, or question |
| Project response | Explains how the issue was reviewed |
| Decision | Records whether the point was accepted, rejected, deferred, referred, or already addressed |
| Action taken | Shows what changed, or why no change was made |
| Owner and status | Tracks who is responsible and whether the issue is open, reviewed, approved, or closed |
| Publication notes | Separates internal review detail from public-facing wording |
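As a rough illustration, the fields in the table could be modelled as one record per comment. This is a minimal sketch, not a prescribed schema: the class name `MatrixRow`, the attribute names, the default status, and the sample values (including "Section 4.2") are all assumptions made for the example.

```python
from dataclasses import dataclass, asdict


@dataclass
class MatrixRow:
    """One comment-level row in a response matrix (hypothetical field names)."""
    submission_id: str          # links back to the original submission
    comment_ref: str            # stable ID for this issue
    respondent_type: str        # e.g. resident, organisation, statutory body
    summary: str                # original comment or faithful summary
    theme: str                  # theme or issue category
    document_ref: str           # section, clause, plan, or question
    project_response: str = ""  # how the issue was reviewed
    decision: str = ""          # e.g. "No change", "Change made"
    action_taken: str = ""      # what changed, or why nothing changed
    owner: str = ""             # who is responsible for the row
    status: str = "open"        # open, reviewed, approved, or closed
    publication_note: str = ""  # keeps internal detail apart from public wording


# Illustrative values only; "Section 4.2" is a made-up document reference.
row = MatrixRow(
    submission_id="SUB-001",
    comment_ref="C-001",
    respondent_type="resident",
    summary="Proposed access changes could make the service entrance harder to reach.",
    theme="Access and inclusion",
    document_ref="Section 4.2",
)
print(asdict(row))
```

Keeping the response, decision, and action as separate attributes mirrors the table: the reasoning, the outcome, and the follow-up can then be filtered and reviewed independently.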

Project and consultation details

Start with the basic project information. This gives the matrix context before anyone reads the comment-level detail.

Include the project name, consultation period, consultation stage, responsible body, review team, and decision point. This matters when the matrix becomes part of a public report, committee pack, planning submission, audit file, or internal decision record.

Submission and respondent details

Record enough information to understand the source of the feedback without exposing personal data unnecessarily.

Useful fields include submission ID, anonymised respondent ID, respondent type, organisation where publication rules allow, location, channel, date received, and confidentiality flag. This metadata helps you analyse who responded, where issues came from, and whether certain stakeholder groups raised different concerns.

Comment reference number

Every comment or issue should have a stable reference number. This is especially important when one submission raises several points. A single organisation might comment on definitions, governance, funding, implementation, reporting, and evidence quality. Those points should not be trapped in one row if each needs a separate response.

Stable IDs make the matrix easier to review, filter, update, and cite. They also protect source traceability when comments are summarised or grouped.

Reference examples

| Reference type | Example |
|---|---|
| Full submission | SUB-001 |
| Individual comment | C-001 |
| Organisation comment | ORG-014-C03 |
| Survey question response | Q4-R083 |
| Workshop comment | WS2-C07 |
| Theme-level issue | T05 |
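One way to keep references stable is to check them against agreed patterns when rows are entered. The sketch below infers patterns from the examples in the table; the exact digit counts, the pattern names, and the `classify_reference` helper are assumptions, so adjust them to whatever convention your team adopts.

```python
import re
from typing import Optional

# Patterns inferred from the example references; digit counts are assumptions.
REFERENCE_PATTERNS = {
    "full_submission": re.compile(r"SUB-\d{3}"),
    "individual_comment": re.compile(r"C-\d{3}"),
    "organisation_comment": re.compile(r"ORG-\d{3}-C\d{2}"),
    "survey_response": re.compile(r"Q\d+-R\d{3}"),
    "workshop_comment": re.compile(r"WS\d+-C\d{2}"),
    "theme_issue": re.compile(r"T\d{2}"),
}


def classify_reference(ref: str) -> Optional[str]:
    """Return the reference type for a well-formed ID, or None if nothing matches."""
    for ref_type, pattern in REFERENCE_PATTERNS.items():
        if pattern.fullmatch(ref):
            return ref_type
    return None


for example in ["SUB-001", "ORG-014-C03", "Q4-R083", "sub-1"]:
    print(example, "->", classify_reference(example))
```

A malformed reference such as `sub-1` returns `None`, which gives reviewers a simple signal that a row needs its ID corrected before it is cited elsewhere.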

Original comment or accurate summary

The matrix should include the original comment, a faithful summary, or both. For small consultations, the full comment can often sit in the matrix. For larger consultations, it may be better to keep the original submission in a source file and place a clear summary in the matrix.

Weak summary: concerns about access.

Stronger summary: respondents raised concern that the proposed access changes could make it harder for older residents and people with disabilities to reach the service entrance.

The stronger version keeps the meaning of the feedback. It is specific enough for coding, technical review, response drafting, and public reporting.

Response, decision, action, and ownership

The response field is the core of the matrix. It should explain how the comment was considered and what happened next.

Theme or issue category

Themes help the team identify patterns across the consultation. Avoid themes that are too broad. "Transport" may be useful as a primary category, but "school drop-off traffic" or "disabled parking access" gives reviewers more to work with.

For high-volume consultations, use a coding framework with primary theme, secondary theme, sentiment, issue type, and evidence type.

Example themes

| Theme | Possible issues |
|---|---|
| Access and inclusion | Disability access, language access, digital exclusion, service reach |
| Transport and movement | Traffic, parking, walking routes, public transport |
| Policy wording | Definitions, exemptions, unclear clauses, missing terms |
| Evidence quality | Missing data, weak assumptions, unclear sources |
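To show how a primary and secondary theme can work together, here is a small sketch that groups coded comments while keeping every comment reference attached to its theme. The sample comments and the `group_by_theme` helper are hypothetical; a real coding framework would likely carry more fields, such as sentiment or evidence type.

```python
from collections import defaultdict

# Hypothetical coded comments: (comment reference, primary theme, secondary theme).
coded_comments = [
    ("C-001", "Transport and movement", "School drop-off traffic"),
    ("C-002", "Transport and movement", "Disabled parking access"),
    ("ORG-014-C03", "Policy wording", "Unclear clauses"),
    ("C-003", "Transport and movement", "School drop-off traffic"),
]


def group_by_theme(comments):
    """Map (primary, secondary) theme pairs to the comment references coded to them."""
    grouped = defaultdict(list)
    for comment_ref, primary, secondary in comments:
        grouped[(primary, secondary)].append(comment_ref)
    return dict(grouped)


themes = group_by_theme(coded_comments)
for (primary, secondary), refs in themes.items():
    print(f"{primary} / {secondary}: {len(refs)} comment(s) -> {', '.join(refs)}")
```

Because each group stores references rather than only a count, a theme-level response can still be traced back to the individual comments behind it.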

Document, section, clause, or policy reference

Each comment should be linked to the part of the proposal it relates to. That may be a policy number, report section, clause, drawing, consultation question, site area, or draft recommendation.

This helps technical reviewers, policy writers, and report writers update the right part of the document.

Project response

The response should explain how the comment was considered. It does not need to be long, but it should be clear, specific, and proportionate to the issue raised.

Avoid empty wording such as "noted" unless no action is needed and the reason is clear.

Weak response: comment noted.

Stronger response: no change. The requested amendment falls outside the scope of this consultation, but the issue has been referred to the service delivery team for review under the separate access improvement plan.

Decision or recommendation

The response explains the reasoning. The decision field records the outcome. Consistent decision labels help senior reviewers see the overall effect of the consultation without reading every full response.

Decision labels

| Decision label | Meaning |
|---|---|
| Change made | The proposal, wording, plan, or report has been updated |
| Change recommended | A change is proposed for approval |
| No change | The proposal remains as drafted |
| Further review | More analysis or technical input is needed |
| Referred | The issue has been sent to another process or owner |
| Outside scope | The comment is valid but falls outside this consultation |
| Already addressed | The issue is covered in existing text, evidence, or policy |
| Support recorded | The comment supports the proposal and needs no change |
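Consistent labels are easier to enforce if they are defined once and reused. The sketch below encodes the labels from the table as an enumeration and counts rows per label; the `Decision` class, the `tally_decisions` helper, and the sample labels are illustrative assumptions rather than a required design.

```python
from collections import Counter
from enum import Enum


class Decision(Enum):
    """Decision labels taken from the table above."""
    CHANGE_MADE = "Change made"
    CHANGE_RECOMMENDED = "Change recommended"
    NO_CHANGE = "No change"
    FURTHER_REVIEW = "Further review"
    REFERRED = "Referred"
    OUTSIDE_SCOPE = "Outside scope"
    ALREADY_ADDRESSED = "Already addressed"
    SUPPORT_RECORDED = "Support recorded"


def tally_decisions(labels):
    """Count rows per decision label; an unknown label raises ValueError."""
    return Counter(Decision(label) for label in labels)


# Hypothetical decision column from four matrix rows.
counts = tally_decisions(["No change", "Change made", "No change", "Referred"])
for decision, total in counts.items():
    print(f"{decision.value}: {total}")
```

Rejecting unknown labels at this point stops free-text variants such as "Noted" from slipping into the decision column and distorting the overall picture for senior reviewers.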

Action taken, owner, status, and approval

The action field records what happened as a result of the comment. A useful response matrix should also help manage the work, not only record it.

Include response owner, action owner, reviewer, status, deadline, and approval status. These fields become important when several teams are involved. They stop the matrix from becoming a static spreadsheet that no one owns.

Publication wording and redaction notes

The public version of a response matrix often needs different wording from the internal version. Internal notes may include legal comments, operational detail, named staff, draft positions, confidential information, or sensitive respondent details.

Public wording should be clear, accurate, and safe to publish. Build publication and redaction fields into the matrix early. Leaving them until the final reporting stage creates avoidable risk and rework.
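If internal and public fields are kept apart from the start, producing the public version can be a filtering step rather than a rewrite. The following is a minimal sketch under stated assumptions: the field names in `INTERNAL_FIELDS`, the `approval_status` value `"approved"`, and the `public_view` helper are all hypothetical.

```python
# Hypothetical names for fields that should stay internal.
INTERNAL_FIELDS = {"internal_note", "redaction_note", "respondent_name"}


def public_view(row):
    """Return a publishable copy of a row, or raise if it is not approved."""
    if row.get("approval_status") != "approved":
        raise ValueError(f"{row.get('comment_ref')} is not approved for publication")
    public = {key: value for key, value in row.items() if key not in INTERNAL_FIELDS}
    # Publish the approved public wording in place of the internal response text.
    public["response"] = public.pop("public_wording")
    return public


# Illustrative row; the wording is invented for the example.
row = {
    "comment_ref": "C-001",
    "theme": "Access and inclusion",
    "response": "Draft position pending legal advice.",
    "public_wording": "No change. The issue has been referred to the service delivery team.",
    "internal_note": "Legal comments attached.",
    "redaction_note": "Respondent name removed.",
    "approval_status": "approved",
}
print(public_view(row))
```

The approval check comes first so that an unapproved row cannot be exported by accident, even if its internal fields are empty.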

Basic, advanced, and AI-supported matrices

Not every consultation needs the same level of structure. A small consultation may only need a spreadsheet. A larger one may need a structured evidence base.

Basic vs advanced public consultation response matrix

A basic matrix can work for 20 responses. It can struggle when there are hundreds of comments, multiple reviewers, technical attachments, legal review, repeated issues, confidentiality concerns, and public reporting requirements.

For larger projects, the matrix should be designed as part of a Public Submission Analysis System.

Basic vs advanced matrix

| Basic matrix | Advanced matrix |
|---|---|
| Suitable for small consultations | Suitable for large or high-scrutiny consultations |
| One row per comment or theme | Source-linked comment records with coding and review fields |
| Basic respondent type and response | Respondent type, stakeholder category, geography, issue type, and publication status |
| Manual status tracking | Owner, deadline, approval status, review notes, and action log |
| Often built in a spreadsheet | Better built as a structured evidence base or database |
| Limited redaction controls | Separate internal and public-facing response fields |

Can AI help with a public consultation response matrix?

AI can help with first-pass grouping, theme suggestions, duplicate detection, summary drafting, plain-language rewriting, and draft response wording. It should not be the final reviewer.

Public consultation work depends on source material, review logic, privacy handling, and decision records. AI is more useful when submissions already have stable IDs, metadata, source links, themes, review fields, and approval statuses. Without that structure, AI can produce summaries that sound useful but are hard to check.

The UK Government's AI Playbook advises public-sector teams to understand AI's limitations and manage its risks. For consultation analysis, that means using AI as a support layer, not as an unchecked decision-maker.

AI-supported tasks and human review

| AI-supported task | Human review needed |
|---|---|
| Suggesting themes | Confirming the coding framework |
| Detecting duplicate comments | Checking whether comments are truly the same issue |
| Drafting summaries | Comparing summaries against source submissions |
| Rewriting technical responses | Approving public-facing wording |
| Producing report drafts | Checking claims, evidence, and decisions |

Treating the matrix as a summary table

A summary table tells you what people said. A response matrix should also explain how the team responded and what happened next. If it stops at summaries, it cannot support review, decision-making, or public reporting properly.

Losing the link to the original submission

Once source links are lost, later review becomes slower and less reliable. Keep stable submission IDs, comment IDs, and source locations from the start so every response can be checked against the original material.

Using themes that are too broad

Broad themes hide the real issue. "Transport" is less useful than "school drop-off traffic" or "disabled parking access". The theme should help reviewers understand the specific concern, not only the general topic.

Recording "noted" without a reason

"Noted" rarely explains anything. Use it only where no action is needed and the reason is clear. Most rows need a response that explains whether the point was accepted, rejected, deferred, referred, or already addressed.

Mixing internal review notes with public wording

Internal review notes may include legal comments, operational detail, draft positions, or sensitive respondent information. Keep those notes separate from public response wording so redaction, approval, and publication are easier to manage.

Leaving redaction until the end

Privacy, confidentiality, and publication status should be part of the matrix design from the start. If redaction is left until the final reporting stage, the team usually creates avoidable review pressure and publication risk.

Using AI summaries without source checks

AI-supported summaries still need source IDs, review fields, and human sign-off. If the AI groups or summarises comments without a traceable source link, the matrix may sound tidy while becoming harder to defend.

Checklist before sharing or publishing

Before sharing, submitting, or publishing the matrix, check that:

- Project dates are recorded

- Each submission has a unique ID

- Each comment has a reference

- Respondent type is captured consistently

- Original comments are stored or linked

- Summaries are accurate

- Themes follow a coding framework

- Responses explain the reasoning

- Decisions are recorded

- Actions are trackable

- Owners and statuses are assigned

- Public wording is separated from internal notes

- Redaction fields are complete

- AI-assisted summaries have been checked

- The matrix can support the final consultation report
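Some of these checks can be partly automated before a human reviewer signs off. The sketch below flags missing fields, duplicate comment references, responses that only say "noted", and AI-assisted summaries without a source check. The field names, the `ai_assisted` and `source_checked` flags, and the `check_rows` helper are assumptions for illustration; checks such as summary accuracy still need a person.

```python
REQUIRED_FIELDS = [
    "submission_id", "comment_ref", "respondent_type", "summary",
    "theme", "response", "decision", "owner", "status",
]
EMPTY_RESPONSES = {"noted", "comment noted"}


def check_rows(rows):
    """Return (comment_ref, problem) pairs for rows that fail basic checks."""
    problems = []
    seen_refs = set()
    for row in rows:
        ref = row.get("comment_ref") or "<missing>"
        for field in REQUIRED_FIELDS:
            if not str(row.get(field) or "").strip():
                problems.append((ref, f"missing {field}"))
        if ref in seen_refs:
            problems.append((ref, "duplicate comment reference"))
        seen_refs.add(ref)
        response = str(row.get("response") or "").strip().lower().rstrip(".")
        if response in EMPTY_RESPONSES:
            problems.append((ref, "response gives no reasoning"))
        if row.get("ai_assisted") and not row.get("source_checked"):
            problems.append((ref, "AI-assisted summary not source-checked"))
    return problems


# Two hypothetical rows: the first is complete, the second has gaps.
rows = [
    {"submission_id": "SUB-001", "comment_ref": "C-001", "respondent_type": "resident",
     "summary": "Access changes could make the entrance harder to reach.",
     "theme": "Access and inclusion",
     "response": "No change. The request falls outside the scope of this consultation.",
     "decision": "Outside scope", "owner": "Policy team", "status": "approved",
     "ai_assisted": False},
    {"submission_id": "SUB-002", "comment_ref": "C-002", "respondent_type": "organisation",
     "summary": "Concerns about access.", "theme": "Access and inclusion",
     "response": "Comment noted.", "decision": "No change", "owner": "",
     "status": "open", "ai_assisted": True, "source_checked": False},
]
for ref, problem in check_rows(rows):
    print(ref, "-", problem)
```

A script like this only narrows the review. It can show which rows are incomplete, but it cannot confirm that a response is fair or that a summary reflects what was submitted.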

When a simple setup is enough

- The consultation has low volume and a narrow set of issues

- One reviewer can check every response against the source material

- No public redaction, multi-team review, or formal decision record is required

When you need a more structured system

- Submissions are high volume, duplicated, or spread across formats

- Several teams need to code, review, redact, and approve responses

- The final response needs to show how feedback informed decisions

Common mistakes to avoid

Treating the matrix as a summary table

A response matrix should do more than summarise comments. It should show the response, decision, action, owner, and source trail behind each issue.

Losing the link to the original submission

If the source link is missing, reviewers cannot check whether a response reflects what was actually submitted. Keep stable submission and comment IDs.

Using themes that are too broad

Broad themes make reporting easier at first but hide important differences. Use themes that are narrow enough to support decisions and public responses.

Recording "noted" without a reason

"Noted" is not a response. Explain whether the issue was accepted, rejected, already addressed, out of scope, or carried into an action.

Mixing internal review notes with public wording

Internal notes can include uncertainty and review logic. Public wording needs a controlled version that is clear, approved, and safe to publish.

Leaving redaction until the end

Redaction decisions affect what can be published and quoted. Track them during review, not as a late cleanup task.

Using AI summaries without source checks

AI can help group and summarise feedback, but response wording still needs human review and a source check before publication.

Copyable public consultation response matrix fields

Use these fields as a starting point and adjust them for the consultation rules, publication duties, and review process.

Response matrix fields

| Field | Include? |
|---|---|
| Project name | |
| Consultation period | |
| Submission ID | |
| Respondent type | |
| Comment ID | |
| Original comment or summary | |
| Theme | |
| Source reference | |
| Project response | |
| Decision | |
| Action taken | |
| Owner | |
| Status | |
| Redaction note | |
| Public wording | |

Related resources

Use these next if you need to move from the article into a related workflow, calculator, case study, or service.

- Public Submission Analysis System - use this if you need a controlled review system for submissions

- Evidence Insight Reporting Engine - use this if consultation evidence needs to feed reporting and decisions

- Data Synthesis - use this if submissions need to be coded and analysed across themes

- Submission Analysis Capacity Calculator - use this to estimate review workload

- Local government white paper evidence drafting review - use this to see a related workflow in practice

- How to synthesise stakeholder submissions - use this if you need the synthesis step before the response matrix

FAQ

What is the purpose of a public consultation response matrix?

The purpose of a public consultation response matrix is to link public feedback to responses, decisions, and actions. It helps project teams show how consultation feedback was reviewed and how it affected the final proposal, policy, plan, or report.

What should a public consultation response matrix include?

A public consultation response matrix should include submission IDs, respondent type, comment references, original comments or summaries, themes, document references, project responses, decisions, actions, owners, statuses, and publication notes.

Should every consultation comment be included?

For small consultations, every comment can be included as its own row. For larger consultations, similar comments can be grouped into themes, as long as the full submissions remain stored and traceable.

What is the difference between a consultation report and a response matrix?

A consultation report explains the process, participation, findings, themes, and next steps. A response matrix is the structured evidence table that links comments to responses, decisions, and actions.

Can AI create a public consultation response matrix?

AI can help draft summaries, suggest themes, detect duplicates, and rewrite technical responses in plain English. It should not replace human review. The matrix still needs source links, review fields, decision ownership, and approval controls.

When is a spreadsheet not enough?

A spreadsheet can work for a small consultation. A more structured system is better when there are hundreds of comments, multiple reviewers, attachments, technical evidence, confidentiality concerns, public reporting duties, or legal scrutiny.

Need help structuring a consultation response matrix?

A public consultation response matrix should give your team a clear line from public feedback to review, decision, action, and reporting.

For a small consultation, that can be a well-structured spreadsheet. For a larger consultation, the matrix should be part of a wider evidence workflow with source tracking, coding, review logs, redaction controls, and report-ready outputs.

I help policy, research, public-sector, and donor-funded teams turn submissions, comments, and stakeholder feedback into coded evidence bases, response matrices, synthesis outputs, and report-ready material.
