Many teams can summarise what the evidence says. Fewer can turn that synthesis into a clear decision.

The sticking point is usually not information volume. It is the move from integrated findings to priorities, implications, options, trade-offs, and next steps that somebody can actually use.

This guide is about that final move. It shows how to frame the decision, interpret the evidence in context, surface what matters most, and turn the result into usable action support.

Quick answer

Decision-ready insight is raw information that has been structured, synthesised, interpreted, and framed around a real decision. It does more than describe what the evidence says. It explains what matters, what the trade-offs are, what options are available, and what action can reasonably follow. The key is to preserve the evidence trail while making the decision logic clear.

Who this guide is for

This guide is for research teams, evaluation leads, policy teams, donor-funded programme teams, consultants, and operations leads who need raw information to support decisions.

It is especially relevant if:

- You have evidence, reports, notes, or data but decision-makers still ask what it means

- Findings exist but priorities, trade-offs, and next steps are unclear

- You need recommendations that can be traced back to evidence

It is less relevant if:

- You only need a descriptive summary and no decision, action, or recommendation is expected

Key takeaways

- Main principle: frame the decision first, then interpret the evidence against the action, timeframe, and trade-offs that matter now.

- Decision consequence: good insight work reduces decision effort instead of restating the synthesis in shorter form.

- Review consequence: priorities, implications, options, and next steps stay grounded in the evidence without overstating what the material can support.

What decision-ready insight means

Decision-ready insight is the point where evidence becomes usable for action. It answers a specific decision question, explains the evidence behind the answer, separates facts from interpretation, and shows the implication for choices, priorities, trade-offs, or next steps.

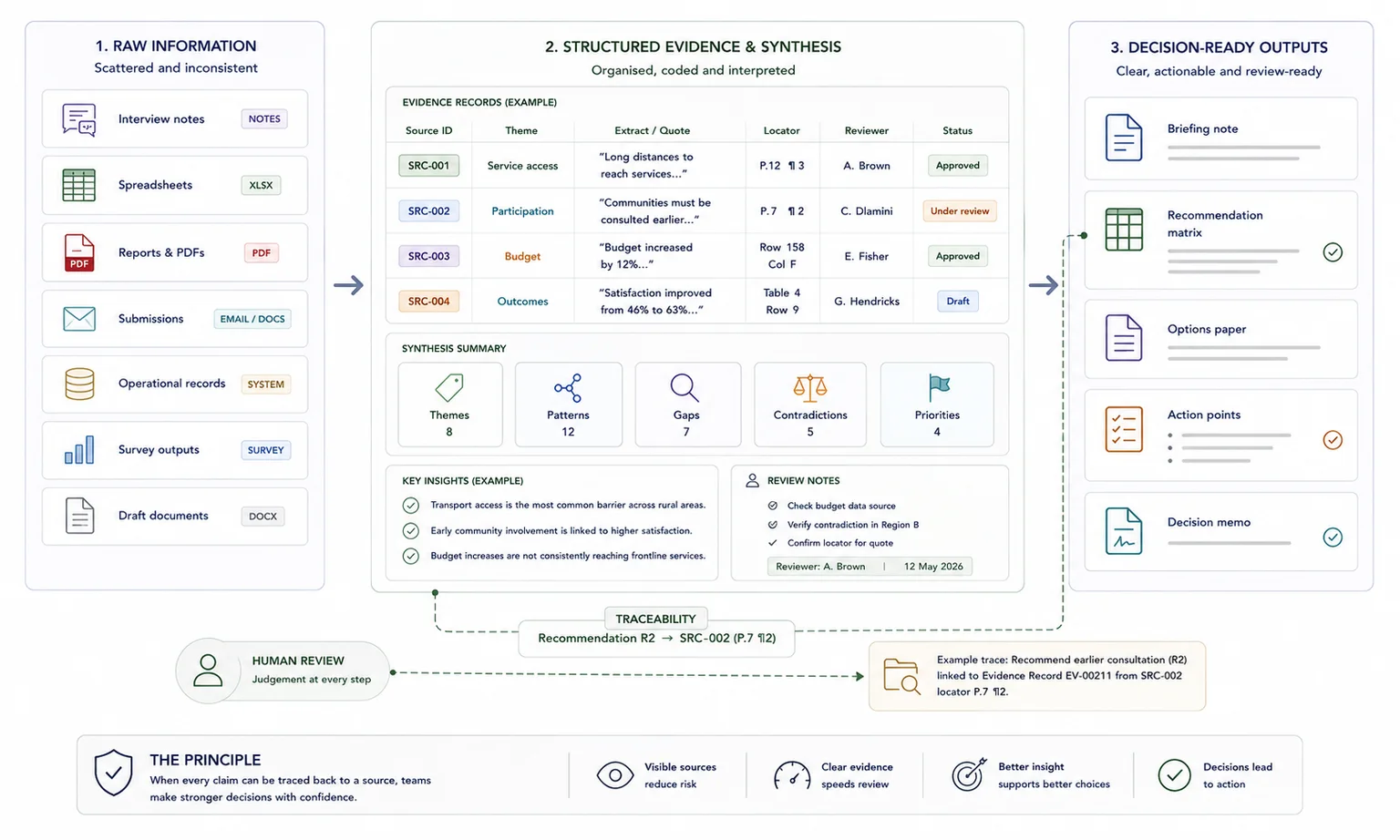

Three stages of the same evidence chain

| Stage | Question answered |
|---|---|
| Raw information | What material do we have? |
| Synthesised evidence | What does the material show? |
| Decision-ready insight | What matters now, and what should be weighed next? |

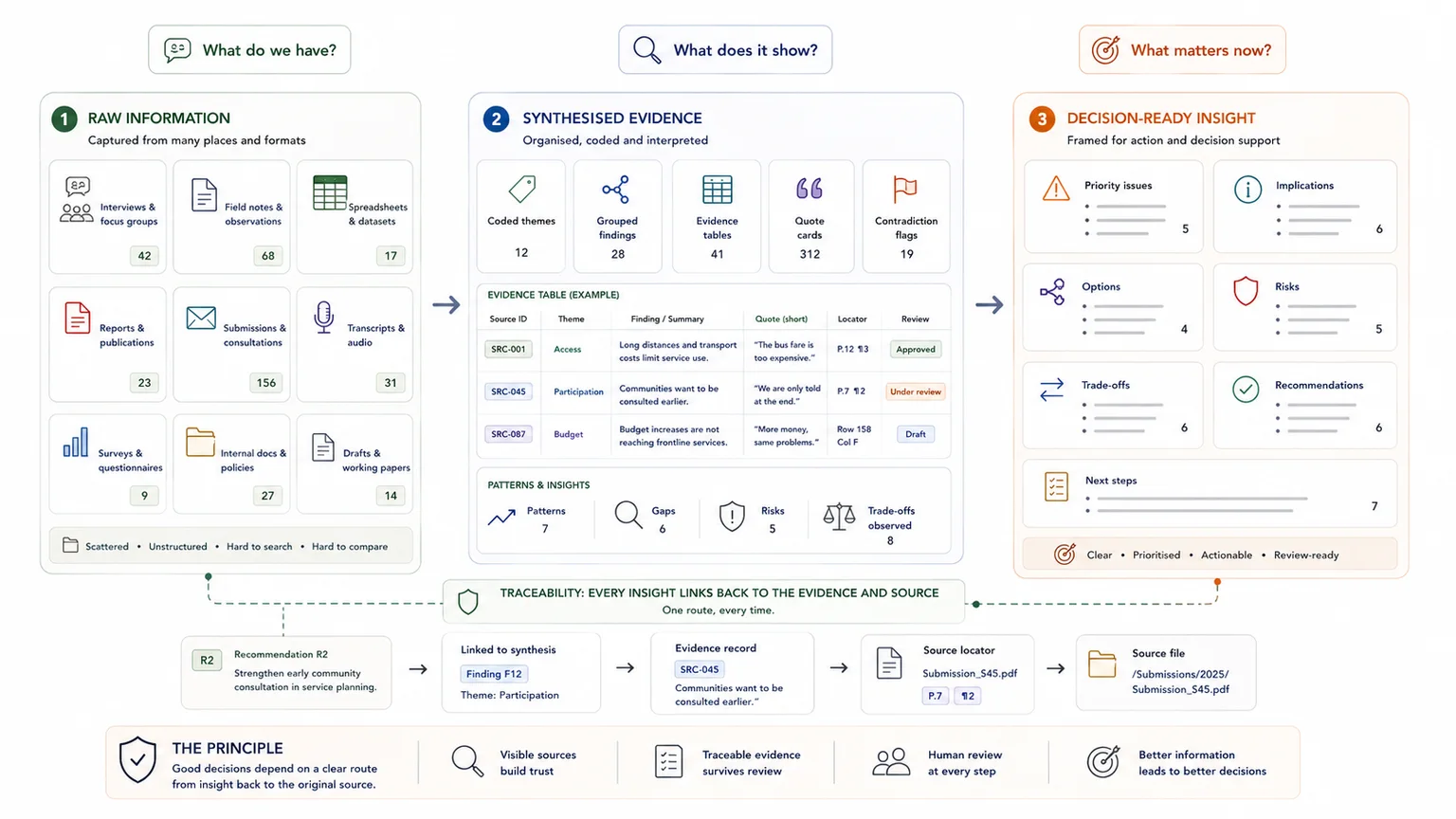

Raw information, synthesis, and decision-ready insight are not the same thing

Raw information is the unworked material: interview notes, survey outputs, submissions, operational records, transcripts, spreadsheets, and draft reports. Synthesised evidence is what you get after those inputs have been grouped, coded, compared, and turned into patterns or integrated findings across sources. Decision-ready insight is the next step again: the evidence has been framed around a live decision, with priority issues, implications, options, risks, and next steps made explicit.

That working definition is an inference from the WHO guide for evidence-informed decision-making, evidence-brief standards, and the GRADE handbook.

Decision support needs more than findings

That distinction matters because a team can have pages of findings and still fail to give decision-makers what they need. GRADE's model is a good reminder: evidence alone is not the full job. Decision work also weighs resource use, equity, acceptability, feasibility, implementation, and monitoring.

In other words, good insight work turns "what the data says" into "what should be weighed next."

What good looks like

A good insight output does not repeat the synthesis in shorter form. It reduces decision effort.

| Weak setup | Stronger setup |
|---|---|
| Raw information is summarised by topic | Information is framed around a decision question |
| Findings describe what happened | Insights explain what matters and what should be weighed next |
| Recommendations are added as opinions | Recommendations are linked to evidence, implication, and feasibility |
| Reviewers debate interpretation late | Interpretation rules and evidence limits are visible during review |

Briefing notes, evidence packs, and recommendation matrices

A briefing note works when a lead needs the issue, the evidence, the implication, and the next move on one page. An evidence pack works when reviewers need to trace a claim back to quotes, cases, or records. A recommendation matrix works when options need to be weighed against cost, feasibility, equity, delivery risk, or stakeholder response.

GRADE's model and evidence-brief guidance both support that wider framing.
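A recommendation matrix can be sketched in a few lines of code. The example below is a minimal illustration, not a method taken from GRADE or the evidence-brief guidance: the criteria weights, the 1 to 5 scoring scale, the option names, and the evidence reference IDs are all hypothetical and would need to be agreed with reviewers on a real project.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical weights for the criteria named in the text; they sum to 1.0.
CRITERIA_WEIGHTS = {
    "cost": 0.2,
    "feasibility": 0.3,
    "equity": 0.2,
    "delivery_risk": 0.2,
    "stakeholder_response": 0.1,
}


@dataclass
class Option:
    name: str
    scores: dict[str, int]      # assumed scale: 1 (weak) to 5 (strong) per criterion
    evidence_refs: list[str]    # IDs that let a reviewer trace the scores to sources


def weighted_score(option: Option, weights: dict[str, float]) -> float:
    """Return the weighted sum of an option's scores, rounded to 2 decimals."""
    missing = set(weights) - set(option.scores)
    if missing:
        raise ValueError(f"{option.name} has no score for: {sorted(missing)}")
    return round(sum(weights[c] * option.scores[c] for c in weights), 2)


def rank_options(options: list[Option], weights: dict[str, float]) -> list[Option]:
    """Order options from highest to lowest weighted score."""
    return sorted(options, key=lambda o: weighted_score(o, weights), reverse=True)


# Illustrative options only; names, scores, and references are made up.
options = [
    Option(
        "National rollout",
        {"cost": 2, "feasibility": 2, "equity": 5,
         "delivery_risk": 2, "stakeholder_response": 4},
        ["SUB-117"],
    ),
    Option(
        "Pilot in two districts",
        {"cost": 4, "feasibility": 4, "equity": 3,
         "delivery_risk": 4, "stakeholder_response": 3},
        ["INT-014", "SUB-203"],
    ),
]

for option in rank_options(options, CRITERIA_WEIGHTS):
    print(option.name, weighted_score(option, CRITERIA_WEIGHTS), option.evidence_refs)
```

A score like this is a prompt for discussion, not a verdict. Keeping the evidence references next to each option is what lets reviewers challenge an individual score against the source material.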

Searchable systems and reusable reporting assets

The site’s service and case work point to a practical output set: reports, summaries, briefing notes, findings sections, key insights, action points, strategic implications, priority issues, recommendation matrices, searchable evidence assistants, and coded review databases.

The exact mix changes by project, yet the goal stays the same: make the material easier to search, verify, reuse, and act on.

Where this fits in the wider workflow

| Workflow stage | What happens |
|---|---|
| Input | Data, documents, interview notes, reports, submissions, and evidence summaries |
| Structure | Decision questions, evidence categories, finding logic, implications, options, and recommendation fields |
| Review | Evidence strength checks, interpretation review, trade-off review, and recommendation checks |
| Output | Decision-ready insights, briefing notes, recommendation matrices, options papers, and report sections |

Why teams get stuck between information and action

The failure point is rarely one bad chart or one weak report section. It usually starts much earlier, when the material is fragmented, low-trust, or too dense to use well.

Fragmented inputs create noise

Teams usually get stuck in three places. First, the inputs are fragmented. The services page names the common starting point clearly: information is scattered, manual review is too heavy, the system is weak, reporting takes too long, and source tracking is poor.

That is a workflow problem before it is an analysis problem.

Weak input quality weakens trust

Second, weak input quality makes the eventual output hard to trust. The UK Government Data Quality Framework breaks quality into validity, accuracy, completeness, uniqueness, consistency, and timeliness, and it stresses that data should be judged as fit for purpose.

If those basics are weak, the nicest chart or summary will still rest on shaky ground.
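Some of those quality dimensions can be checked mechanically before any analysis starts. The sketch below covers only three of them (completeness, uniqueness, and validity). Accuracy, consistency, and timeliness usually need context that a simple script does not have. The field names and the theme list are hypothetical, not taken from the framework.

```python
# Hypothetical schema: required fields and an allowed set of themes.
REQUIRED_FIELDS = ("record_id", "source", "collected_on", "theme")
ALLOWED_THEMES = {"access", "cost", "quality"}


def quality_report(records):
    """Return (row index, dimension, message) for each basic quality issue."""
    issues = []
    seen_ids = set()
    for index, record in enumerate(records):
        # Completeness: every required field must be present and non-empty.
        missing = [f for f in REQUIRED_FIELDS if not record.get(f)]
        if missing:
            issues.append((index, "completeness", f"missing {missing}"))

        # Uniqueness: a record_id should appear only once.
        record_id = record.get("record_id")
        if record_id is not None:
            if record_id in seen_ids:
                issues.append((index, "uniqueness", f"duplicate record_id {record_id}"))
            seen_ids.add(record_id)

        # Validity: the theme must come from the agreed taxonomy.
        theme = record.get("theme")
        if theme and theme not in ALLOWED_THEMES:
            issues.append((index, "validity", f"unknown theme {theme!r}"))
    return issues


# Made-up records for illustration.
records = [
    {"record_id": "R1", "source": "interview", "collected_on": "2024-03-01", "theme": "access"},
    {"record_id": "R1", "source": "survey", "collected_on": "2024-03-02", "theme": "cost"},
    {"record_id": "R3", "source": "", "collected_on": "2024-03-05", "theme": "pricing"},
]

for issue in quality_report(records):
    print(issue)
```

Whether a flagged record is still fit for purpose remains a human judgement. The script only makes the weak spots visible early.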

More material does not always improve judgement

Third, more material does not always mean better judgement. OECD work on information disclosure shows that overload and complexity can stop people using information well. When readers get too much material, or material that is too complex, they may ignore it.

A good decision memo cuts that noise by bringing forward what is priority, what is uncertain, and what action is possible next.

The workflow that turns raw material into decision-ready insight

Insight generation is not a magic step at the end. It is the result of a sequence that starts with the decision context, then moves through structure, synthesis, and action framing.

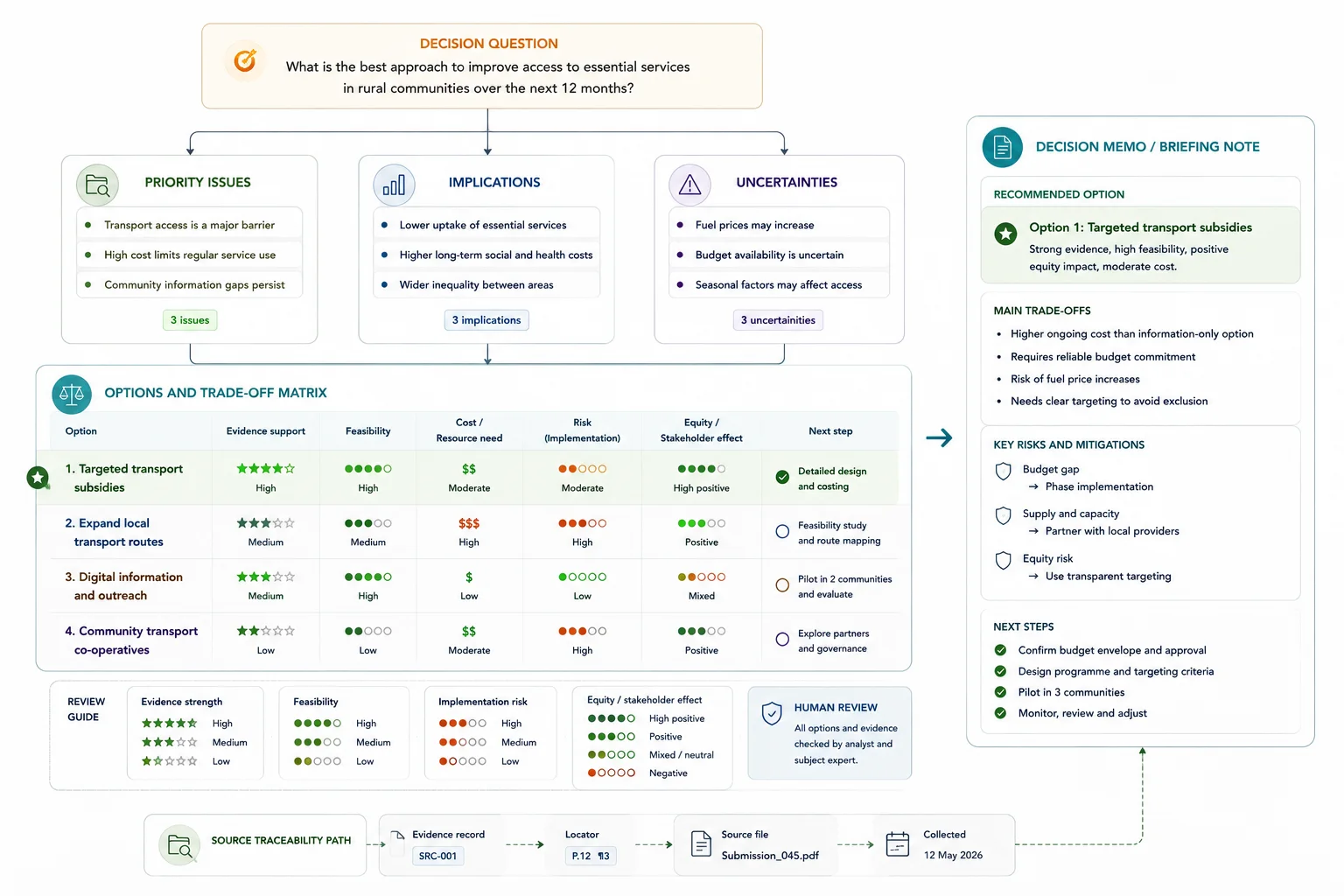

Frame the decision before you interpret the evidence

Do not begin by asking what the dataset contains. Begin by asking what decision needs to be made, who will make it, what timeframe matters, and what kind of action the output needs to support.

- What issue needs a call?

- Who is the decision-maker?

- What timeframe matters?

- What form must the output take?

Policy and evidence-to-decision frameworks use the same logic: start with the priority problem, then move to options, trade-offs, feasibility, and implementation. That step stops analysis from drifting into interesting but unusable detail.
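The four framing questions above can be captured as a small record that is filled in before analysis starts. This is a minimal sketch; the class name, field names, and example values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class DecisionFrame:
    """One field per framing question; empty means not yet answered."""
    issue: str = ""            # What issue needs a call?
    decision_maker: str = ""   # Who is the decision-maker?
    timeframe: str = ""        # What timeframe matters?
    output_form: str = ""      # What form must the output take?


def unanswered(frame: DecisionFrame) -> list:
    """List the framing questions that still have no answer."""
    return [f.name for f in fields(frame) if not getattr(frame, f.name).strip()]


# Illustrative frame with one question left open.
frame = DecisionFrame(
    issue="Whether to extend the pilot",
    decision_maker="Programme director",
    output_form="One-page briefing note",
)

print(unanswered(frame))
```

If the list is not empty, the team knows which framing question to settle before interpreting any evidence.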

Build a structured evidence base

Next, build a structured evidence base. That means one schema or taxonomy, clear fields, source locators, metadata, and shared rules for extraction or coding. Romanos Boraine's process page puts structure at the centre of the workflow, and the UNICEF Zambia case study shows why: a schema-first model turned 120 narrative case studies into a clean query-ready evidence base with traceable reporting outputs.
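As a rough illustration of what "one schema, clear fields, source locators" can mean in practice, the sketch below defines a single evidence record type and rejects entries that break the shared rules. It is not the schema used in the UNICEF Zambia work; every field name, theme, and example value here is an assumption.

```python
from dataclasses import dataclass

# Hypothetical taxonomy; a real project would define and document its own.
THEMES = {"livelihoods", "education", "health"}


@dataclass(frozen=True)
class EvidenceRecord:
    record_id: str
    source_doc: str   # file or document the excerpt came from
    locator: str      # page, paragraph, or row, so the claim can be traced
    theme: str        # must be one of THEMES
    excerpt: str      # the quoted or extracted text
    coded_by: str     # person or tool that applied the code

    def __post_init__(self):
        # Shared rules: the theme must be in the taxonomy, the locator must exist.
        if self.theme not in THEMES:
            raise ValueError(f"{self.record_id}: unknown theme {self.theme!r}")
        if not self.locator.strip():
            raise ValueError(f"{self.record_id}: source locator is required")


def by_theme(records, theme):
    """Return all records coded to one theme, for query-style retrieval."""
    return [r for r in records if r.theme == theme]


# Made-up records for illustration.
records = [
    EvidenceRecord("EV-001", "case_study_017.docx", "p. 3, para 2",
                   "education", "School fees were the main barrier.", "analyst_a"),
    EvidenceRecord("EV-002", "case_study_042.docx", "p. 1, para 4",
                   "health", "The clinic is two hours away on foot.", "analyst_b"),
]

for record in by_theme(records, "education"):
    print(record.record_id, record.source_doc, record.locator)
```

Enforcing the rules when a record is created means a theme typo or a missing locator is caught at entry, not discovered during reporting.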

Synthesise across sources

From there, synthesise across sources. This is where separate notes, submissions, interviews, surveys, or records get turned into themes, relationships, gaps, contradictions, and cross-cutting patterns. WHO's work on qualitative evidence synthesis shows that this stage matters when decision-makers need more than benefits and harms alone and need to see context, stakeholder views, acceptability, feasibility, and implementation issues.

Translate the synthesis into priorities, implications, and next steps

This is the point where insight generation becomes different from synthesis. Synthesis tells you what the material shows across sources. Insight generation tells you what matters most now, what it changes, what remains uncertain, and what the decision-maker should weigh next.

The site’s own framing of Insight Generation is useful here: move from information to priorities, implications, and action.

In practice, that means making the priority issues explicit, surfacing what the evidence points to, stating what is still uncertain, and spelling out the action points or recommendation paths that follow.

Package the work in a form people can actually use

Last, package the work in a form people can actually use. Evidence briefs for policy are a strong reference point because they clarify the policy problem, frame the options, and identify implementation issues in a given setting.

That same logic can sit inside a donor report, a leadership briefing note, an operations memo, a recommendation matrix, or a searchable evidence pack.

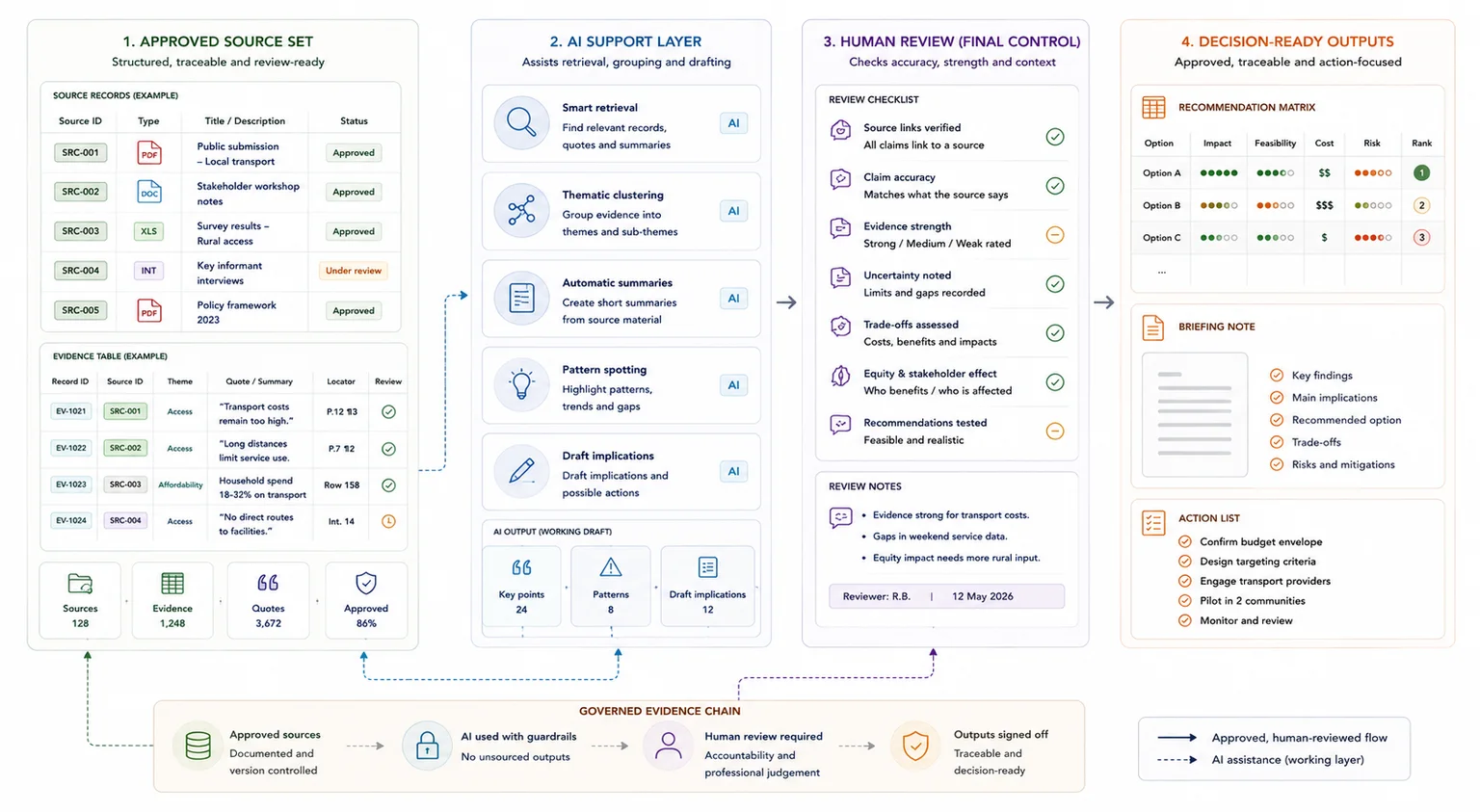

Where AI fits and where human review still matters

AI can speed parts of this workflow, but only when the structure and governance underneath it are strong enough to carry the work safely.

Good uses for AI in evidence-heavy workflows

The current literature on automation in evidence synthesis points to useful roles in screening, extraction support, theme spotting, and retrieval. The site’s Custom AI Building service fits the strongest use case here: speed up access and pattern spotting inside a structured system tied to a client’s own data environment.

Why unreviewed AI is still a risk

Recent reviews are blunt on the limit: current GenAI should not be used for evidence synthesis without human involvement or oversight, and the NIST AI Risk Management Framework places human-centered risk management at the centre of responsible AI use.

That means AI can draft, sort, cluster, or retrieve, yet humans still need to review source links, test claims, flag ambiguity, and sign off judgement calls.

A safer model: human-reviewed AI inside a governed workflow

A safer model is human-reviewed AI inside a governed workflow: clear schema rules, documented extraction logic, source traceability, sampled quality checks, and a final human pass on implications and recommendations. That approach also fits Google's current guidance better than thin, auto-generated pages that add little value.
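Sampled quality checks are one part of that model that is easy to make concrete. The sketch below draws a repeatable random sample of AI-coded records for human review. The 10 percent rate, the minimum of five records, and the record IDs are hypothetical defaults, not recommendations from any of the cited guidance.

```python
import random


def sample_for_review(record_ids, percent=10, minimum=5, seed=0):
    """Pick a repeatable random sample of records for a human to check.

    percent and minimum are assumed defaults; the fixed seed makes the
    sample reproducible so the check can be audited later.
    """
    ids = list(record_ids)
    if not ids:
        return []
    # Integer ceiling of percent% of the records, without float rounding.
    target = (len(ids) * percent + 99) // 100
    size = min(len(ids), max(minimum, target))
    rng = random.Random(seed)
    return sorted(rng.sample(ids, size))


# Illustrative IDs for 120 AI-coded records.
coded_ids = [f"REC-{n:03d}" for n in range(1, 121)]
review_batch = sample_for_review(coded_ids)
print(len(review_batch), "records to review")
```

If the human reviewer finds errors in the sample, the usual next step is to widen the sample or revisit the extraction rules, not to correct only the sampled records.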

What this looks like in practice

The proof layer on the site already shows this pattern in live work: structure first, then synthesis, then drafting or decision support, with traceability kept visible across the chain.

South African Local Government White Paper workflow

Result: Turned a messy submission process into one working evidence system.

The South African Local Government White Paper workflow moved from mixed-format submissions into claims coding, thematic synthesis, a searchable evidence assistant, white paper drafting, and a live coded review-comments database.

The result was a traceable evidence and drafting system that supported a national white paper and an active review workflow.

UNICEF Zambia child poverty study

Result: Cut analysis time from 60–90 minutes per case to about 15 minutes.

In the UNICEF Zambia child poverty study, 120 narrative case studies were turned into a governed, spreadsheet-first evidence workflow with AI-assisted coding, reporting-ready tables, and a plain-English retrieval layer.

Analysis time fell from 60–90 minutes per case to about 15 minutes, with an estimated 120 analyst hours saved across the dataset.
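The arithmetic behind those figures can be checked quickly. The case study does not say how the 120-hour estimate was derived, so the sketch below only shows the range implied by the stated per-case times and that its midpoint is consistent with the estimate.

```python
CASES = 120                          # narrative case studies in the dataset
BEFORE_LOW, BEFORE_HIGH = 60, 90     # minutes per case before the new workflow
AFTER = 15                           # minutes per case after the new workflow


def hours_saved(cases, before_minutes, after_minutes):
    """Total analyst hours saved across all cases."""
    return cases * (before_minutes - after_minutes) / 60


low = hours_saved(CASES, BEFORE_LOW, AFTER)     # 120 * 45 / 60
high = hours_saved(CASES, BEFORE_HIGH, AFTER)   # 120 * 75 / 60
midpoint = (low + high) / 2

print(f"Implied saving: {low:.0f} to {high:.0f} hours, midpoint {midpoint:.0f}")
# prints "Implied saving: 90 to 150 hours, midpoint 120"
```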

UNICEF Palestine disability situation analysis

Result: Made a UNICEF-ready draft feasible inside a three-week recovery window.

In the UNICEF Palestine disability situation analysis, a delayed project was rebuilt into a structured qualitative evidence database, recommendation matrix, and UNICEF-ready draft report within a three-week recovery window.

That case is useful for one simple reason: speed mattered, yet source traceability and methodological discipline still had to hold.

When specialist insight generation support makes sense

Outside support is most useful when the internal team can see the problem clearly, yet cannot move the evidence chain forward cleanly enough to produce a decision-useful output.

Signs the internal workflow is breaking

Common signs include scattered inputs, heavy manual review, weak source tracking, slow reporting, inconsistent analysis, or leaders asking for priorities and action when the team can only hand over summaries. If slow reporting is the visible pain, you can check what a reporting bottleneck may be costing before scoping the wider insight workflow.

This is the point where structured evidence, synthesis, report writing, and decision support need to work as one chain, not as separate tasks.

Those are the same starting conditions and use cases named on the services page.

What a strong partner should deliver

A strong partner in this space should bring four things: structure, synthesis, reporting discipline, and decision support. The service mix here is built that way, with connected work across database architecture, data synthesis, report writing, custom AI building, and insight generation.

The aim is not a loose advisory layer. It is a usable workflow and output set that leaves the team with clearer evidence, faster retrieval, stronger reporting, and better action framing.

When a simple setup is enough

- The decision is narrow and low risk

- The evidence base is small and already reviewed

- A short briefing note can answer the question clearly

When you need a more structured system

- The decision depends on mixed evidence, competing priorities, or multiple stakeholders

- Recommendations need to be defended against source material

- The team needs a repeatable way to move from evidence to action

Common mistakes to avoid

Summarising more instead of deciding better

Longer summaries do not create insight. Start with the decision question, then interpret the evidence against that question.

Hiding trade-offs

Decision-ready work should make trade-offs visible. If constraints, risks, equity, feasibility, or timing matter, record them.

Disconnecting recommendations from evidence

A recommendation should show the finding, implication, and evidence limit behind it. Otherwise it becomes an opinion.

Decision-ready insight test

Use this before sharing an insight with decision-makers

| Question | Yes/No |
|---|---|
| Does the insight answer a specific decision question? | |
| Is the evidence behind it traceable? | |
| Does it separate facts from interpretation? | |
| Does it explain what the finding means for action? | |
| Is the recommendation linked to the evidence? | |
| Can a reviewer understand the logic quickly? | |
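For teams that track insights in a spreadsheet or database, the same test can be run as a simple gate before anything is shared. This is a minimal sketch; the check names are hypothetical shorthand for the six questions, and the yes or no answers still come from a human reviewer.

```python
# One key per question in the test; names are illustrative shorthand.
CHECKS = (
    "answers_decision_question",
    "evidence_traceable",
    "facts_separated_from_interpretation",
    "explains_meaning_for_action",
    "recommendation_linked_to_evidence",
    "logic_quick_to_follow",
)


def failed_checks(answers):
    """Return the checks answered 'no' or left unanswered."""
    return [check for check in CHECKS if not answers.get(check, False)]


def is_decision_ready(answers):
    """An insight passes only if every check is answered 'yes'."""
    return not failed_checks(answers)


# Illustrative reviewer answers for one draft insight.
draft_answers = {
    "answers_decision_question": True,
    "evidence_traceable": True,
    "facts_separated_from_interpretation": False,
    "explains_meaning_for_action": True,
    "recommendation_linked_to_evidence": True,
}

print(is_decision_ready(draft_answers))
print(failed_checks(draft_answers))
```

Treating an unanswered question as a fail is a deliberate choice in this sketch: an insight that has not been checked should not pass by default.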

Related resources

Use these next if you need to move from the article into a related workflow, calculator, case study, or service.

- Insight Generation - use this if raw information needs to become decision support

- Data Synthesis - use this if the evidence still needs structuring first

- Evidence Insight Reporting Engine - use this if insights need to sit inside a repeatable reporting workflow

- Evidence workflows for reporting - use this if the upstream workflow is breaking

- The real cost of messy evidence workflows - use this if workflow delays are the visible symptom

- Reporting Bottleneck Cost Calculator - use this to estimate the cost of slow review and reporting

FAQ

What is insight generation?

Insight generation is the stage where structured data or synthesised evidence is turned into priority issues, implications, and action points for decisions, planning, or strategy. It sits after raw collection and after synthesis. The job is to answer what matters, why it matters, and what should happen next.

How is data synthesis different from insight generation?

Data synthesis brings material from multiple sources into one coherent finding set. Insight generation goes one step further and frames those findings for action, trade-offs, and next steps. One gives you the integrated evidence; the other turns that evidence into decision support.

Can AI turn raw information into decision-ready insight on its own?

No. Recent reviews say current GenAI should not be used for evidence synthesis without human involvement or oversight, and NIST places human-centered risk management at the centre of responsible AI use. AI can speed retrieval and draft support, yet human review is still needed for source checks and judgement calls.

What should a decision-ready output include?

It should state the issue, pull together the best available evidence, show the trade-offs, note what is still uncertain, and spell out feasible next steps or options. Evidence-brief and evidence-to-decision models also bring in implementation, resource use, equity, acceptability, and feasibility.

Who usually needs insight generation services?

Research and evaluation leads, policy teams, donor-funded programmes, operations leads, nonprofits, and lead contractors all fit when they are sitting on large, messy, evidence-heavy inputs that need to be turned into credible outputs and action.

Move from information volume to decision use

Decision-ready insight sits late in the chain. It assumes the evidence is already structured well enough to trust and synthesised well enough to compare.

The real job here is to turn that material into priorities, implications, options, and next steps without overstating what the evidence can support.

Sources used in this guide

These are the main external references behind the workflow, quality, AI, and search points described above.

- Used for the core evidence-informed decision-making framing.

- Used for evidence-to-decision logic and trade-off framing.

- Used for evidence-brief structure and decision framing.

- Used for contextual, stakeholder, feasibility, and implementation evidence in synthesis.

- Used for fit-for-purpose quality dimensions.

- Used for overload, complexity, and poor information use.

- Used for the human-centered AI governance point.

- Used for useful AI roles in evidence-heavy workflows.

- Used for the human oversight and review warning.

- Used for the SEO and AI Overviews guidance.

Insight Generation

Turn raw data and synthesis into practical insights for decisions, planning, and strategy.